Mini desktops are a growing market, but so far it’s a market that Intel has had the run of. The company’s own “Next Unit of Computing” (NUC) and efforts like Gigabyte’s Brix Pro are diminutive but much more capable than the wimpy “nettops” of yesteryear.

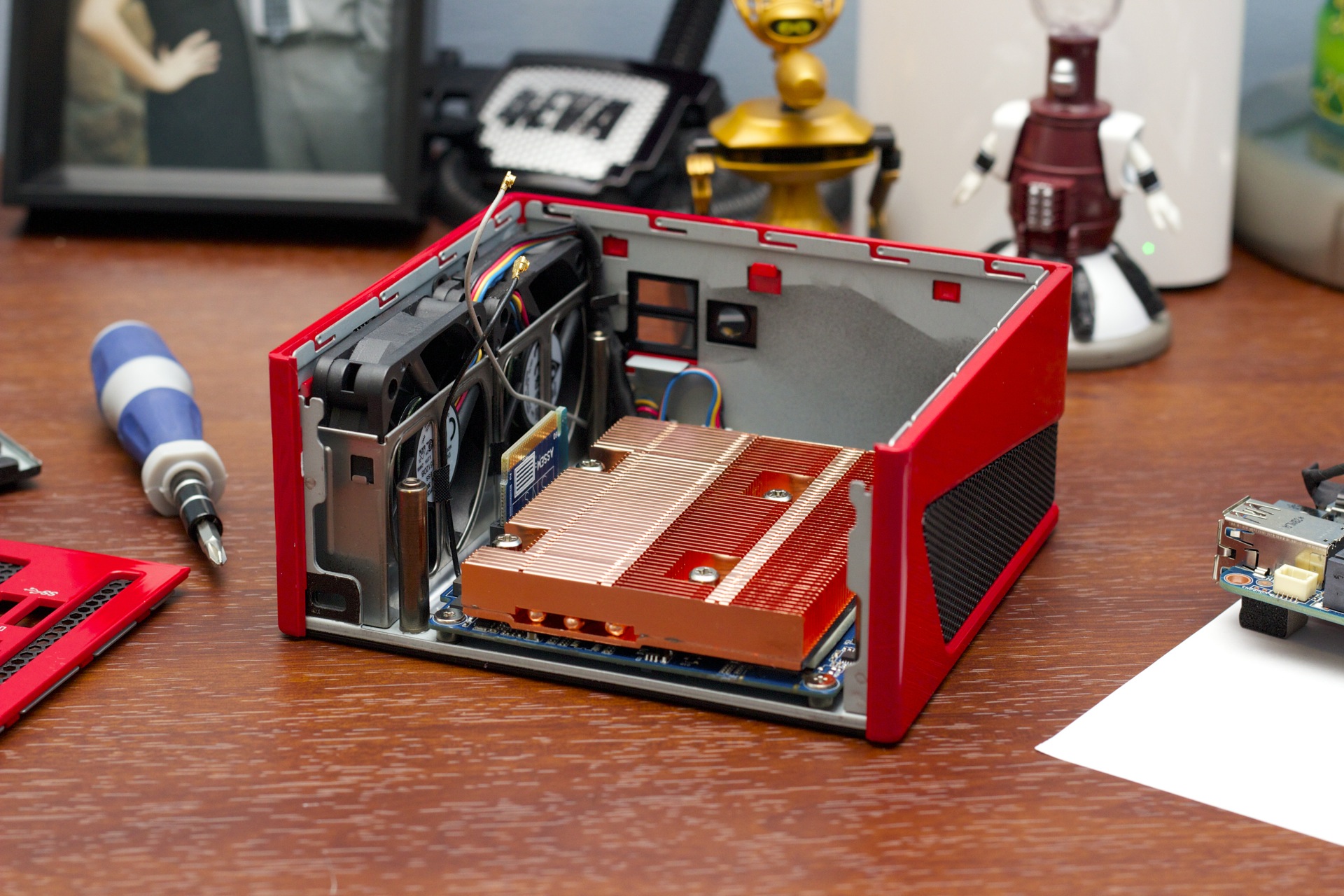

Now it’s time for AMD to get in on the fun. The company sent us two Gigabyte mini PCs that are roughly the same size as the Intel versions, but these machines use AMD A8 chips instead of Intel ones. While the smaller, cheaper GB-BXA8-5545 (which we’ll be reviewing in full in a separate piece) is basically just an AMD version of the NUC, the Brix Gaming (yes, that’s the device’s full name) is something else altogether. The race-car-red machine combines AMD’s CPU with a true dedicated AMD GPU, promising a level of graphics performance that we haven’t yet seen in a mini desktop.

Unfortunately, this is one of those times when reality doesn’t quite match expectations. The Brix Gaming does have a much faster GPU than any mini PC we’ve seen, but it has to make a few too many compromises to get there.

If you’ve seen one, you’ve seen them all

| Specs at a glance: Gigabyte Brix Gaming GB-BXA8G-8890 | |

|---|---|

| OS | Windows 8.1 x64 |

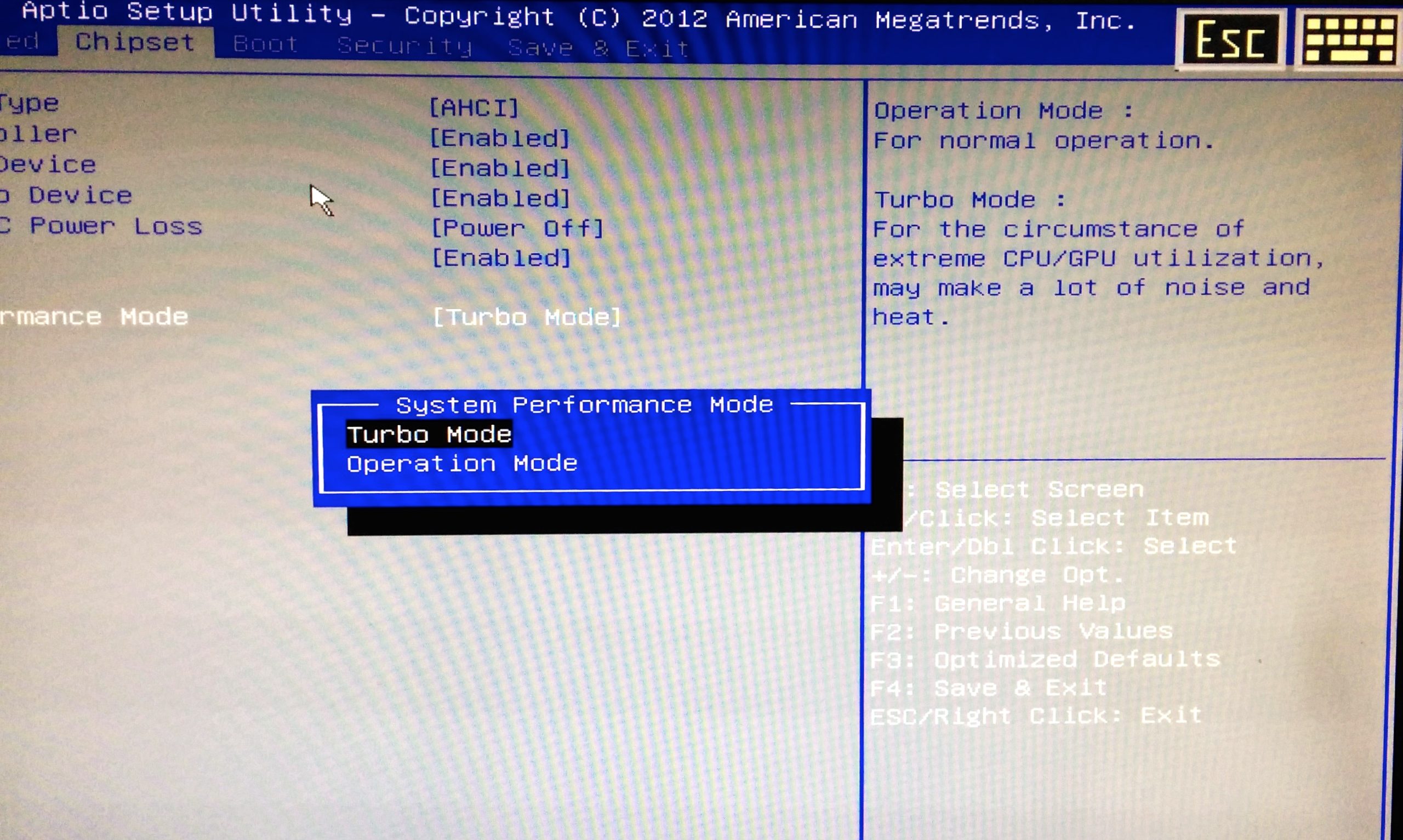

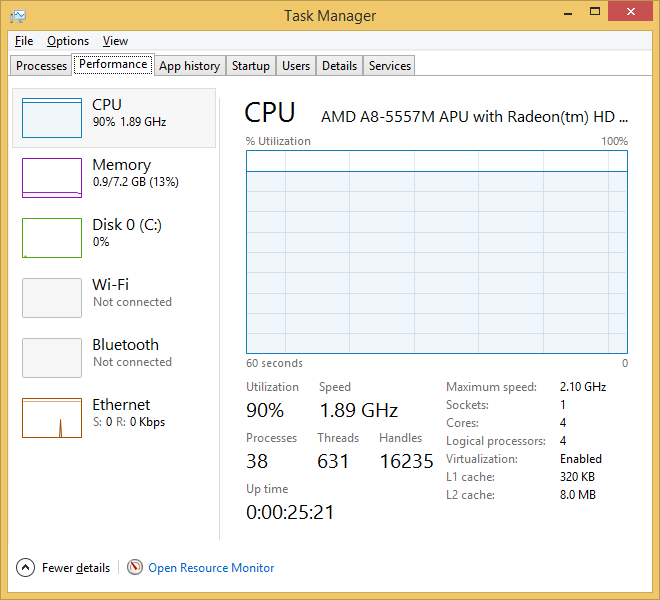

| CPU | 2.1GHz AMD A8-5557M capped at about 1.9GHz by default, Turbo Boost up to 3.1GHz available with proper BIOS settings |

| RAM | 8GB 1600MHz DDR3 (supports up to 16GB) |

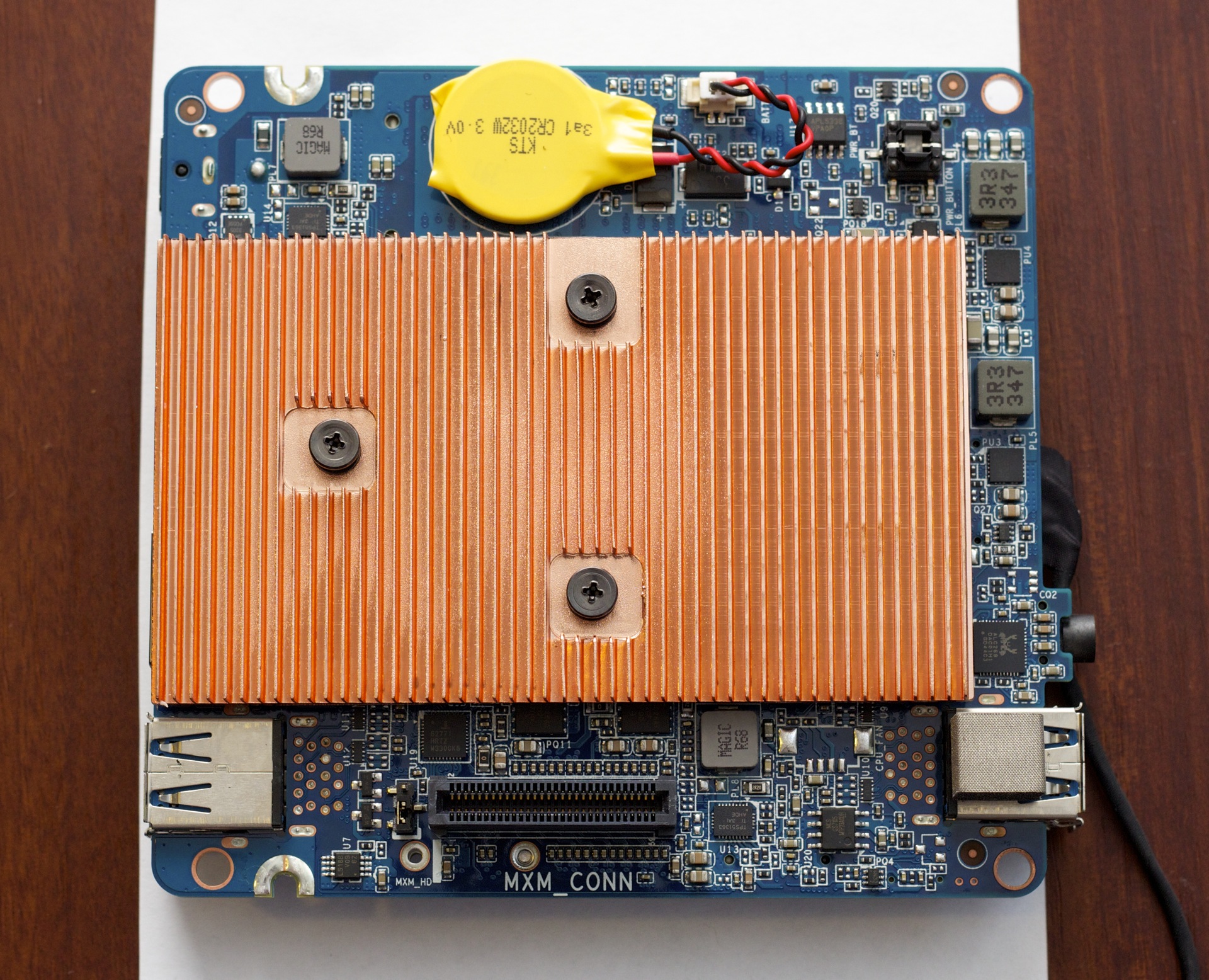

| GPU | Integrated: AMD Radeon 8550G (256 shaders) Dedicated: AMD Radeon R9 M275X (680 shaders) with 2GB 1125MHz GDDR5 |

| HDD | 128GB Crucial M500 mSATA SSD |

| Networking | 433Mbps 802.11ac Wi-Fi, Bluetooth 4.0, Gigabit Ethernet |

| Ports | 4x USB 3.0, 1x mini DisplayPort 1.2, 1x HDMI 1.4a, audio |

| Size | 5.04” x 4.54” x 2.35” (128 x 115.4 59.6 mm) |

| Other perks | Kensington lock, space for 2.5-inch laptop hard drive, VESA mounting bracket |

| Warranty | 1 year |

| Price | $569.99 (barebones), $814.97 with listed components and software |

The Brix Gaming is on the chunkier end of the mini PC spectrum—it’s imperceptibly shorter and about half an inch wider than the GB-BXA8-5545. The two of them are in the same ballpark, though, and both use the same chunky external power brick. The Brix Gaming is constructed primarily of red and black plastic, with black metal mesh used on the sides and back to improve airflow.

The port layout is identical to just about every mini PC we’ve seen this year. It has two USB 3.0 ports on the front and two more on the back, plus Gigabit Ethernet, a mini DisplayPort, an HDMI port, and a Kensington lock slot. A standard headphone jack on the front of the system rounds it all out.
