
Claude Code can now take over your computer to complete tasks

- Thread starter JournalBot


This is going to get a bunch of over-confident devs and enthusiasts in a bad place.

Basic system security and guardrails exist for a reason. I wouldn't let a user run with root / sudo, why would I let any program?

Restricted service users exist for a reason. RO mounts, chroot, etc.
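The "don't run it as root" point can be sketched in a few lines of Python. If the launcher is already an ordinary user, there is nothing to drop. If it is root, the child process is started as an unprivileged service account instead. This is a minimal sketch under stated assumptions: a POSIX system, Python 3.9+ (for the `user`, `group` and `extra_groups` arguments to `subprocess.run`), and a service account named `nobody`. The helper names `child_identity` and `run_unprivileged` are hypothetical, not from the thread.

```python
import os
import pwd
import subprocess


def child_identity(service_user="nobody"):
    """Return (uid, gid) for child processes, or None to keep our own identity.

    Hypothetical helper: a non-root caller is already unprivileged, so there
    is nothing to drop. A root caller looks up the restricted service account.
    """
    if os.geteuid() != 0:
        return None
    account = pwd.getpwnam(service_user)  # KeyError if the account does not exist
    return account.pw_uid, account.pw_gid


def run_unprivileged(cmd, service_user="nobody"):
    """Run cmd, switching to service_user first if we happen to be root."""
    identity = child_identity(service_user)
    if identity is None:
        return subprocess.run(cmd, check=True, capture_output=True, text=True)
    uid, gid = identity
    return subprocess.run(
        cmd,
        check=True,
        capture_output=True,
        text=True,
        user=uid,          # setuid() in the child before exec
        group=gid,         # setgid() in the child before exec
        extra_groups=[],   # drop root's supplementary groups as well
    )
```

For example, `run_unprivileged(["id", "-u"]).stdout` should never report uid 0. Dropping privileges in the launcher is only one layer. As the comment says, read-only mounts or a chroot limit what even an unprivileged process can reach.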


> Anthropic also notes on a support page that the model is trained to avoid “risky operations” such as moving or investing money, modifying files, scraping facial images, or inputting “sensitive data.” But the company also warns that such training safeguards “aren’t perfect” and “aren’t absolute,” meaning that “Claude may occasionally act outside these boundaries.”

I love these companies constantly admitting they don't actually know how their LLMs work, because they cannot stop them from doing stupid things. "It's trained not to do this, but it might anyways! Good luck everyone!"

Much has also been written about users becoming beta testers more often. It's one thing to get a free video game demo and help report bugs, but if I'm paying a company up to $100-200/mo (Claude Max), I expect to get FULLY FUNCTIONAL software/hardware.


> As long as it's an optional feature, IDGAF.

Unless you are Ted-Kaczynski-level removed from regular society, off the grid in a shack way out in the woods, someone somewhere is soon going to let the slopbot operate on data that is important to you. You may find the consequences of this careless usage to be... unpleasant.


> Anthropic says it has safeguards in place to prevent common risks like prompt injection, and it will limit access to certain “off limits” apps (e.g., “investment and trading platforms, cryptocurrency”) by default.

Is it me, or is quoting those two apps as the important "off limits" categories the most rich-white-guy thing?

What about private browsing windows where people might be looking up abortion or LGBTQ+ info? What about chat/social apps? Even Recall had more sensible defaults and could have apps opted into not being recordable.


> I’m all in. How do I give it my social and credit card numbers?

No worries, you don't need to give your SSN and CC numbers to Claude. It already has them, courtesy of DOGE.


My cybersecurity past is screaming at this like... no - why - why would you ever?!


socialmischief

Ars Centurion

We have people opening tickets to install Python on their Macs because AI told them to do so in order to complete a simple task. They aren’t technical enough to know why that’s unnecessary when their computer has tools that can already do what they want (like Excel, Numbers, or Google Sheets).

I can’t wait for all the tickets that are going to come about from random incompatibilities and collisions between actions when people turn over full control of their computers.

Yah, it “saved you time” a few times, and then it cost you all your work once.


socialmischief

Ars Centurion

> My cybersecurity past is screaming at this like... no - why - why would you ever?!

You know why. It begins with “L” and ends with “Aziness”.


> We have people opening tickets to install Python on their Macs because AI told them to do so in order to complete a simple task. They aren’t technical enough to know why that’s unnecessary when their computer has tools that can already do what they want (like Excel, Numbers, or Google Sheets).
>
> I can’t wait for all the tickets that are going to come about from random incompatibilities and collisions between actions when people turn over full control of their computers.
>
> Yah, it “saved you time” a few times, and then it cost you all your work once.

My first-level IT support got outsourced to India, so things that used to take minutes now take days. So those tickets you're talking about will be very interesting to watch get resolved.


NullSignal

Ars Scholae Palatinae

> Claude Code can now take over your computer

You know what else Claude Code can do? It can f*ck right off. That's what it can do.

F*ck this AI bullshit. Who asked for this stupid future?

You're welcome.


It'd make for a hell of an art installation: watch as an AI agent goes awry and destroys this user's computer/finances/personal life/etc. Maybe let passersby enter prompts to do it. Or maybe it's new fodder for Fear Factor.


> And here I thought Microsoft Recall was the worst idea.

Never fear. There will always be a worse idea. It is a corollary of stupidity, and about as limited.


> I mean, Microsoft already took over my Windows installation with unwanted AI slop. It's why I converted to a Linux installation.
>
> I'm surprised Microsoft isn't suing Anthropic and all these other agentic AI companies for attempting to bypass Copilot on W11.
>
> Either way, I'm not interested in giving any control to an automated theft machine to steal my data and poorly impersonate me on my own device.

TBF, I bailed on Windows because it seemed to me that Microsoft was turning itself into Google, where your shit isn't yours but theirs, and all your data belonged to them.

So I pulled the Linux trigger several years ago, before AI was more than a curiosity. What's more, in just the last year I've personally moved a dozen people off of Windows and onto Linux, and probably helped twice that many with questions and tips.

My circle of acquaintances is only about 1000 people, so it's a tiny sample size. But it is telling that most of them were young people (20s-30s), and all of them hated Win11 (which, knowing what was coming, I conspired with the Penguin to never experience).

So while it's a different experience, Linux is a perfectly cromulent OS for anyone who hasn't sold their computing soul to Redmond. The change in OS from 10 to 11 is far more radical than the change in OS from Win10 to Linux. I recommend Mint Cinnamon for those who want a more "Windows-like" OS feel without Redmond demanding you make accounts with microsoft.com just to use the fucking thing.

The scary thing about Linux is that it will happily slit its own throat if you tell it to, so it gives you FAR more control over your system than Windows typically does and expects you to know what you're doing at least to some degree. But the support is largely good and I've personally yet to find any need or want that can't be adequately fulfilled in some fashion. That might require learning a different program that does the same thing, but that's a small cost to pay for the privacy and control gains you get.

And while it wasn't a consideration at the time, since it wasn't even a thing back then, there's no fucking AI on my system, or being forced on me by the OS maker. THESE DAYS, that's a feature, not a flaw.


> I can't wait until my coworker who is in love with Claude* runs it and it shits his bed.
>
> \* only figuratively (...I hope)

No, you don't. Not figuratively at all.

'Lie down with pigs, get up smelling like garbage' from 'Bring Me the Head of Alfredo Garcia'.


> Is it me, or is quoting those two apps as the important "off limits" categories the most rich-white-guy thing?
>
> What about private browsing windows where people might be looking up abortion or LGBTQ+ info? What about chat/social apps? Even Recall had more sensible defaults and could have apps opted into not being recordable.

You've sort of nailed something on the head here:

The "influencers" who are breathlessly and dogmatically pushing "Agentic AI" are the same kinds of people (and in some cases, the same exact people) who pushed things like GameStop/WallStreetBets, crypto, NFTs, and now "trading markets" like Kalshi and Polymarket. And AI, of course.

So Anthropic stressing that “investment and trading platforms, cryptocurrency” are safe from their agent is actually extremely important to their target audience, because if Claude fucked up one of these guys' crypto wallet they might lose a considerable amount of support.


> The top use case I can see (and have used) is setting up kiosk systems for temporary installs of non-networked (when deployed, isolated when being configured), non-sensitive exhibits. I would never trust it with free rein of my personal computer, but helping me get the environment set up for something I'm throwing away after a week saves me a lot of time with minimal risk. Step 1 is always determining the risk of your activity and the threshold of risk you're willing to accept for a given task.

Laziness confirmed.

do your job.


MobiusPizza

Ars Scholae Palatinae

If we can run it in a sandbox, I don't see privacy being an issue. This could be useful for GUI app development. AI coding tools have trouble building GUIs because they need a human in the loop to feed them screenshots.


We've spent so much time training our parents and grandparents not to let the friendly-sounding tech support person remotely control their computers, only to turn around and do the same thing for an AI agent.


I gave claude-code root without password on my Linux desktop months ago so it could quickly address some issues I was having. It solved my problem and there was no collateral damage. I wouldn't put it in charge of anything other people depend on, but I think it's good enough to treat like an entry-level engineer.


> I think we do, because I don't think the worst thing you can imagine is the actual worst thing that could happen. Sadly.

I am able to imagine some pretty bad things, like this AI running on a computer deep in the Cheyenne Mountain Complex and hitting the wrong "button" during a simulated attack...

But you are probably correct - there are actually even worse scenarios...


DevContainer, maximum firewall rules.

Been able to do some cool stuff with Ghidra and Claude Code (circumventing the licensing scheme of a long-abandoned piece of software), but it certainly gives you a skin-crawling feeling watching it work.
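For anyone wanting to approximate the "DevContainer, maximum firewall rules" setup, here is a hedged Python sketch that only assembles a locked-down `docker run` command line; it does not run anything. The flags used (`--rm`, `--network`, `--read-only`, `--tmpfs`, `--cap-drop ALL`, `--security-opt no-new-privileges`, `--user`, `-v`, `-w`) are standard Docker CLI options, but this is not the commenter's actual configuration. The image name, paths, UID and the helper name `sandboxed_docker_cmd` are hypothetical placeholders. `--network none` is the bluntest possible firewall. An agent that talks to a hosted model needs some outbound access, so in practice you would swap in a network with egress rules.

```python
import shlex


def sandboxed_docker_cmd(image, workdir, command, *, network="none",
                         uid_gid="1000:1000", writable=False):
    """Build an argv list for a locked-down `docker run` (hypothetical helper).

    Nothing is executed here; pass the result to subprocess.run() yourself.
    """
    mode = "rw" if writable else "ro"
    return [
        "docker", "run", "--rm",
        "--network", network,                   # "none" = no network at all
        "--read-only",                          # container root filesystem is read-only
        "--tmpfs", "/tmp",                      # scratch space that vanishes on exit
        "--cap-drop", "ALL",                    # drop every Linux capability
        "--security-opt", "no-new-privileges",  # block setuid-style escalation
        "--user", uid_gid,                      # never run as root inside the container
        "-v", f"{workdir}:/workspace:{mode}",   # project mounted read-only by default
        "-w", "/workspace",
        image,
        *command,
    ]


if __name__ == "__main__":
    argv = sandboxed_docker_cmd("agent-sandbox:latest", "/home/me/project", ["ls", "-la"])
    print(shlex.join(argv))
```

Mounting the project read-only by default means you have to opt in (`writable=True`) before the agent can change anything on the host.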


James Brooks

Ars Praetorian

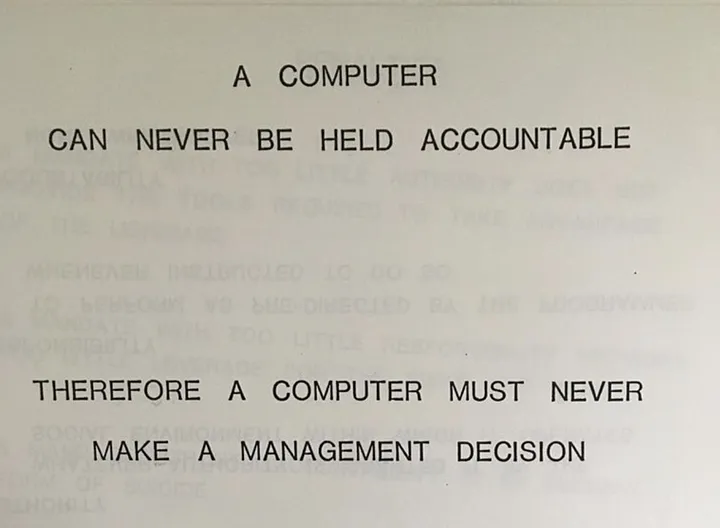

> Don't make me tap the sign.

This motto seems to have changed in the last few years to “a computer can never be held accountable, but neither, seemingly, can anyone in management, so what's the difference?”


> Laziness confirmed.
>
> do your job.

That's an incorrect assumption that it's my job. I do these projects for the community as a volunteer.

Believe it or not, not everyone has infinite time or resources. We identify automations in daily life and deploy them where we can balance with the risk.

This isn't always an "us vs them" argument. We can acknowledge that using AI for things carries risk (both societal and in cybersecurity) while also understanding where and when people who are different from us and have different values might find it appropriate to accept risk when the alternate is just not doing a thing. And that goes for all manner of automation. Did you use that numpy library without checking? Maybe if it's a small project. Might be a bit more diligent if it's connected to a critical system. Or you might build your own libraries because you're dealing with financial data and you just don't trust it.

So tired of people getting so tribal.


> I gave claude-code root without password on my Linux desktop months ago so it could quickly address some issues I was having. It solved my problem and there was no collateral damage. I wouldn't put it in charge of anything other people depend on, but I think it's good enough to treat like an entry-level engineer.

A product that deletes all of your data only 1% of the time is not safe, and is stupid to use, but it usually won't delete all your data. It will generate quite a few anecdotes of success. Basing your judgment on those anecdotes is going to get you in trouble.
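The arithmetic behind this point is worth spelling out. Take the comment's illustrative 1% figure and assume, for simplicity, that each use fails independently. Then the chance of at least one catastrophic failure over n uses is 1 - 0.99^n. A single success tells you almost nothing, while repeated use makes a disaster likely. A small Python sketch, where the 1% rate and the independence assumption are the only inputs:

```python
import math


def p_at_least_one_failure(p_fail, n_uses):
    """Probability of at least one failure in n independent uses."""
    return 1.0 - (1.0 - p_fail) ** n_uses


def uses_until_coin_flip(p_fail):
    """Smallest n at which at least one failure is 50% likely or more."""
    return math.ceil(math.log(0.5) / math.log(1.0 - p_fail))


if __name__ == "__main__":
    for n in (1, 10, 100):
        print(n, round(p_at_least_one_failure(0.01, n), 3))  # 0.01, 0.096, 0.634
    print("50% mark:", uses_until_coin_flip(0.01))           # 69
```

So under these assumptions, 100 uses carry roughly a 63% chance of at least one wipe, and the odds pass a coin flip at 69 uses. Every one of the earlier runs would still have looked like a success story.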


Clearly AI won't stop until it reaches MAX_STUPID.

Sigh.


> I love these companies constantly admitting they don't actually know how their LLMs work, because they cannot stop them from doing stupid things. "It's trained not to do this, but it might anyways! Good luck everyone!"
>
> Much has also been written about users becoming beta testers more often. It's one thing to get a free video game demo and help report bugs, but if I'm paying a company up to $100-200/mo (Claude Max), I expect to get FULLY FUNCTIONAL software/hardware.

Fully functional? So 2022 of you.


> We really are going to end up living in Idiocracy.

We already are, except that Donald Trump is more over-the-top and stupid than Dwayne Elizondo Mountain Dew Herbert Camacho. At least President Camacho had the humility to realize that he needed the help of a smart person.
