AMD updates Radeon GPU line: Higher clocks for three “50” suffix refreshes

- Thread starter JournalBot

- Start date

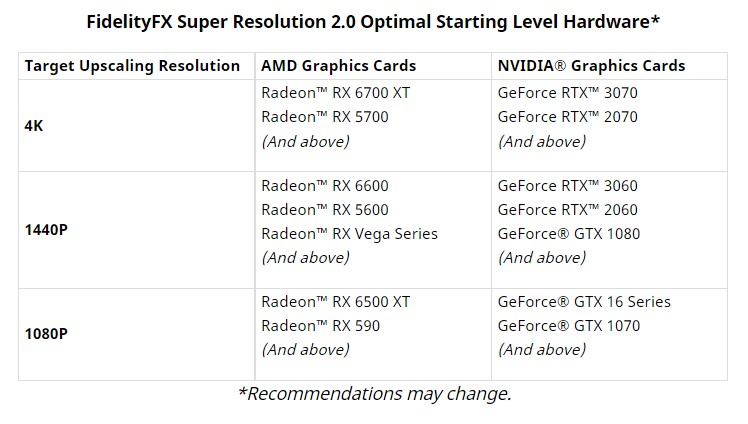

Good to see that AMD is allowing previous cards to benefit from new versions of FSR...I keep hoping that Nvidia will roll out resizable BAR to my RTX 2070 (and other pre-3000 series RTX GPUs), but it seems unlikely.

Votes: 4 (4 / 0)

DaveSimmons

Ars Legatus Legionis

Absolute rip-off pricing.

In fairness, if they priced it lower the money would just go to scalpers instead. If the prices "have to" be this high then I'd rather have the money go to AMD and Nvidia than to the scalpers.

Votes: 0 (3 / -3)

> > The RX 6950XT, RX 6750XT, and RX 6660XT are heading to retailers today
>
> Slight typo
>
> 6660XT sounds a bit ominous

Hey, sounds good to me!

Votes: 7 (7 / 0)

> This may be a dumb question, or something folks think I should know by now, but how does the whole "gaming" video card thing relate to graphic art?
>
> I get that the more powerful the processor and amount of RAM available in a video card/computer the faster the renders for graphic art, but then I hear about iRay in games and I do manual rendering in iRay using DAZ 3D, and that kind of confuses me because that's not quite the same thing. Real time rendering of a gaming scene using ray tracing is one thing, but rendering a model/scene in iRay in DAZ takes orders of magnitude longer.
>
> So while using the same name, and I figure the same kind of ray tracing, the processes are entirely different. From what I gather, the game uses pre-rendered scenes, while I'm rendering the scene new. But I'm not sure that's how it actually works. I've tried looking this stuff up, but the explanations start sounding like they're coming from graphics engineers who assume prior graphic engineering knowledge the reader probably doesn't have.
>
> I'm using a GTX 1070 now, which is old, and I've been looking to upgrade. The nVidia line of hamstrung gaming processors is more affordable than the fully unlocked ones, but I'm concerned that my graphic art rendering will be massively negatively impacted by the hamstringing.
>
> So I guess the question is, are the bits in video cards that render games so fast and well the same ones used, in the same way, for iRay rendering of graphic art? My guess is probably yes, but I'm uncertain how ray tracing rendering in games works compared to iRay rendering (which is ray-tracing) a still image in fairly high resolution. The game seems to run that rendering a whole hell of a lot faster.
>
> Could someone who understands this stuff better than I please explain the difference (if any) in terms anyone can understand? It'd help those of us who game, and do art, better understand the relationship and make better video card buying decisions.

I know nothing about those particular SW packages other than that they've been around for a long time, but my educated guess would be that they don't use GPU ray-tracing even if it's available, & do it using only the CPU. (FWIW, I also use my PC for art, rather than gaming, but I'm a photographer, not an animator or 3D graphics artist.)

Votes: 1 (2 / -1)

Mr. Perfect

Ars Praetorian

> > Meh prices for meh performance.
> >
> > The RX 6950 XT would make a bit of sense compared to RTX 3080 Ti and RTX 3090... but neither of those GPUs made any sense in the first place.
> >
> > At this point, might as well just wait for RX 7000 and RTX 4000. Even if you plan on buying RX 6000 or RTX 3000, as long as crypto doesn't somehow blow up 4-5x, prices can only really go down, especially for used, former mining GPUs.
>
> I wouldn't buy ex-mining GPUs, unless you like random crashes and visual artifacts. They tend to get pretty trashed.

Has there ever been a proper study of this? These are always the first concerns people have with ex-mining cards, and while they're reasonable concerns it all seems to be hearsay and speculation.

On the other hand people make claims that the cards have all been undervolted and saved from the effects of thermal cycling due to being on all the time.

Before I pass over a cheap GPU stained with the tears of a miner, a good study would be prudent.

Votes: 13 (13 / 0)

Speaking as a hardware person, if an ex-mining card works at all, I see no reason to believe it'd be any less reliable than any other used card of the same kind. TBH, I suspect that people just resent miners so much that they want an excuse to make it hard for miners to resell their obsolete (for mining, at least) video cards.

Votes: 4 (5 / -1)

johnt007871

Ars Praefectus

> > The RX 6950XT, RX 6750XT, and RX 6660XT are heading to retailers today
>
> Slight typo
>
> 6660XT sounds a bit ominous

Execute my order for a Sixty Six... Fifty XT.

Votes: 4 (4 / 0)

I perhaps should have explained that the option to use both the CPU and GPU is toggled, so it's using both when I do a render. I have a CPU temp monitoring program running to see the values (and that also tells me when I need to clean the fans/fins/vents on the inside when the temps hit near T-Max and begin throttling the workload to avoid exceeding it), which typically tell me when the render is done as it suddenly drops back near normal for an idle state. Between very quiet fans and my failing hearing, I can't always tell when the system heats up and cools off.

The CPU and GPU are both involved, but I have the option to toggle one or the other or both to do the rendering.

> Game rendering uses the GPU for the heavy lifting, and less accurate but much faster algorithms to create real-time visuals that look nice in the context of the game. Tricks and shortcuts are used based on knowing what is being rendered.
>
> App rendering uses the CPU and (only if the application supports it) GPU acceleration to create each frame using more accurate and more general-purpose algorithms. If the GPU is used, it's as a multi-multi-core CPU not to display frames.
>
> It depends on the software you are using whether a newer GPU will help a lot, a little, or not at all. Finding forums for your specific apps is a good place to start. One of the StackOverflow sibling sites might have content too.

I've actually done a LOT of looking into this. Compounding the issue is that DAZ3D has undergone several versions, and iterations, and iRay is a later addition which has undergone a lot of revisions.

The primary way it loads a scene is through the CPU, but the GPU carries the heavy lifting for rendering, from what I read. It CAN render through CPU alone, but it takes about four times longer (I found that out when a model broke and I couldn't figure out why it wouldn't render properly. I eventually dumped the model, saved each of the parts of it as a preset and started over with a new base model, applying the presets until I had what I wanted and it rendered fine - go figure).

But believe me when I say I've spent hundreds of hours poring over forums, sites and resources trying to figure this out. Considering how expensive GPUs are today, it's not a decision to take lightly. The ONLY thing of note I have is that it requires a CUDA-enabled NVIDIA video card, or it goes entirely to the CPU to render (which given the temps makes sense).

Given that, I'm assuming that I'd have better luck with a bigger/faster CPU than JUST upgrading my video card. And considering the CPU load, that may be a better, and cheaper, way to go, since unlike the GPU, CPU rendering is brand agnostic and AMD makes great, reasonably priced CPUs.

Using your suggestion about forums, and limiting searches to within the last year, I DID find a reddit conversation about video cards in DAZ, and it does still need an nVidia card to include the GPU when rendering, and it DOES have an impact on rendering speeds based on the stats of the card alone.

At least that narrows down my search. What impact the throttling nVidia has on it remains to be seen, though, since it's using the card strictly to calculate, and not as much to put it out on the screen in real time. That's mostly what crypto miners do, too. That throttling is pretty new, so it may not have filtered into the community enough for issues to unfold. And if, as it seems, the CPU is used most in rendering this way in the first place, it might not necessarily be a thing to worry about if the CPU is buffed up while keeping the same video card (which is fine IMHO, but I don't have huge demands on it otherwise, either).

I may start looking for one of the last/best AM4 socket AMDs instead. It kind of seems like it's more important than the video card, and the video card is just an additional goodie if you have an nVidia.

So thanks for the clues that helped me find that out!

Votes: 4 (4 / 0)

> Let's be frank, "value" in the GPU market means getting one for close to MSRP. Unless things radically change, don't expect price wars to happen... ever.

I think a viable 3rd competitor should count as radical. The next gen of AMD APUs are rumored to compete with the current midrange (3060 sans raytracing) which should also qualify as radical.

I'm really looking forward to the 2nd generation of Intel discrete GPUs, but not half as much as Ars updating their comment system; it's painful on desktop & not worth the frustration on mobile. The only redeeming feature is the people enduring it.

Votes: 2 (2 / 0)

Okay, so bear in mind that RTX ray-tracing is a relatively recent thing, & it's not CUDA. You might want to research whether DAZ actually uses NVidia RTX or not, because, based on what you're saying, it sounds like it doesn't. Now, RTX ray-tracing is probably not of the same quality as CPU or CUDA based ray-tracing, but it'd be great for real-time ray-tracing that'd be good enough for prototyping / setup. I would strongly advise you to contact DAZ themselves & ask them if their app uses RTX when available.

Votes: 0 (0 / 0)

Games don't use pre-rendered scenes, it's the opposite. Rendering something in 'not-real-time' is pre-rendering.

To be honest none of the following is relevant to your purchasing decision. Any [edit: nvidia] gaming GPU will render your DAZ content just fine if DAZ is utilizing GPU rendering. If it isn't, then the rendering is happening on the CPU and the GPU doesn't even matter except to show the viewport with acceptable performance.

"Ray tracing" is a meta term that encompasses several different techniques on the CPU or the GPU (ray tracing was first done on the CPU before GPUs even existed). 'Art' and movie VFX rendering don't use the same algorithms that games do. There are similarities, yes, but as another user said, games use approximations, shortcuts, and a lot of "cheats" in order to run in real time and this means that their rendering is not fully accurate to life, it is merely 'good enough' smoke and mirrors and comes with a lot of limitations and things you cannot do. Pre-rendering takes so long because it's not using any shortcuts or cheats and is fully accurate with a complete simulation of photons bouncing all over the place.

Some examples: games don't do real transparency and translucency phenomena like caustics or index of refraction, nor light casting from blackbody radiation, nor fluid and particle simulation because those are all too computationally expensive to run in real time. To diminish the workload games also don't render everything with the highest detail; they use a Level of Detail system to switch from high-resolution assets that are close to the game camera to less and less detailed assets farther away from the camera (and very often no asset at all if the object isn't in-camera, which has implications for light bounce and reflection), while VFX rendering uses the highest quality assets at all distances.
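The Level of Detail idea described above can be sketched in a few lines of Python. This is only an illustration, not how any particular game engine does it: the distance thresholds, the asset names, and the `select_lod` function are all hypothetical.

```python
from bisect import bisect_right

# Hypothetical distance cut-offs (in scene units) and asset names,
# ordered from most detailed to least detailed.
LOD_THRESHOLDS = [10.0, 50.0, 200.0]
LOD_ASSETS = ["high_detail", "medium_detail", "low_detail", "billboard"]


def select_lod(distance, in_view=True):
    """Pick which version of an asset to draw for a given camera distance."""
    if not in_view:
        # Objects outside the camera's view are skipped entirely.
        return None
    # Count how many thresholds the distance has reached or passed;
    # that count is the index of the asset to use.
    return LOD_ASSETS[bisect_right(LOD_THRESHOLDS, distance)]


print(select_lod(5.0))    # close to the camera
print(select_lod(75.0))   # mid-distance
print(select_lod(500.0))  # far away
```

An offline renderer, by contrast, would in effect always take the first entry regardless of distance, which is part of why it is so much slower.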

A lot of this is highly technical and involves things like forward vs deferred rendering and whether your ray tracing calculations are emitting from light sources or from the camera (IRL photons come from light sources, bounce, and enter the eye/camera, but some types of ray tracing do the opposite; they shoot a ray out from the camera to bounce around the scene).
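To make the "shoot a ray out from the camera" variant concrete, here is a minimal Python sketch under simple assumptions: one sphere, a camera at the origin, one ray per pixel, and no bouncing or lighting at all. The scene values and the function names `hit_sphere` and `render` are made up for illustration; real renderers such as iRay do far more per ray.

```python
import math


def hit_sphere(origin, direction, center, radius):
    """Return the distance along the ray to the nearest hit in front of
    the origin, or None if the ray misses the sphere."""
    oc = [o - c for o, c in zip(origin, center)]
    a = sum(d * d for d in direction)
    b = 2.0 * sum(o * d for o, d in zip(oc, direction))
    c = sum(o * o for o in oc) - radius * radius
    disc = b * b - 4.0 * a * c
    if disc < 0:
        return None  # the ray never touches the sphere
    t = (-b - math.sqrt(disc)) / (2.0 * a)
    return t if t > 0 else None


def render(width=16, height=8):
    """Shoot one ray per pixel from the camera and mark which pixels hit."""
    camera = (0.0, 0.0, 0.0)
    center, radius = (0.0, 0.0, -3.0), 1.0  # hypothetical scene: one sphere
    rows = []
    for j in range(height):
        row = ""
        for i in range(width):
            # Map the pixel to a point on an image plane at z = -1.
            x = (i + 0.5) / width * 2.0 - 1.0
            y = 1.0 - (j + 0.5) / height * 2.0
            hit = hit_sphere(camera, (x, y, -1.0), center, radius)
            row += "#" if hit is not None else "."
        rows.append(row)
    return rows


print("\n".join(render()))
```

Every extra effect (shadows, reflections, refraction) means following more rays from each hit point, which is where the render time goes.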

Votes: 2 (2 / 0)

DaveSimmons

Ars Legatus Legionis

> "To be honest none of the following is relevant to your purchasing decision. Any gaming GPU will render your DAZ content just fine if DAZ is utilizing GPU rendering. If it isn't, then the rendering is happening on the CPU and the GPU doesn't even matter except to show the viewport with acceptable performance."

They mention in a reply that DAZ only supports CUDA for GPU-accelerated content creation so AMD cards will not work. Only Nvidia cards have CUDA support. https://developer.nvidia.com/cuda-faq

Votes: 4 (4 / 0)

> At 335W for just the CPU, I need to look at solutions to play with the whole box outside the house for 6-9 months a year. Or maybe watercool with the radiator outside...
>
> Silly to try to keep the house cool with a 700W heater on my desk.
>
> Modern monitors and SSDs saved some power, CPUs and GPUs ate all those gains in exchange for sweet 4K gaming...

Yes, it's a lot of heat. But, CPUs and GPUs don't run at full power all the time. So, the energy impact really depends on your usage patterns.
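As a rough illustration of how much usage patterns matter, here is a back-of-the-envelope Python sketch. The 700W load figure comes from the quoted post; the 100W idle draw and the hours per day are assumptions picked purely for the example, and `daily_energy_kwh` is a hypothetical helper.

```python
def daily_energy_kwh(load_watts, idle_watts, load_hours, idle_hours):
    """Energy used per day in kWh, given power draw and hours in each state."""
    return (load_watts * load_hours + idle_watts * idle_hours) / 1000.0


# Assumed usage: 3 hours at full load and 5 hours idling per day.
mixed = daily_energy_kwh(700, 100, load_hours=3, idle_hours=5)

# Worst case for comparison: the same 8 hours entirely at full load.
flat_out = daily_energy_kwh(700, 100, load_hours=8, idle_hours=0)

print(f"mixed use: {mixed:.1f} kWh/day, always at full load: {flat_out:.1f} kWh/day")
```

Essentially all of that energy ends up as heat in the room, so the same numbers also give a feel for the cooling load.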

Votes: 3 (3 / 0)

This may be a dumb question, or something folks think I should know by now, but how does the whole "gaming" video card thing relate to graphic art?

I get that the more powerful the processor and amount of RAM available in a video card/computer the faster the renders for graphic art, but then I hear about iRay in games and I do manual rendering in iRay using DAZ 3D, and that kind of confuses me because that's not quite the same thing. Real time rendering of a gaming scene using ray tracing is one thing, but rendering a model/scene in iRay in DAZ takes orders of magnitude longer.

So while using the same name, and I figure the same kind of ray tracing, the processes are entirely different. From what I gather, the game uses pre-rendered scenes, while I'm rendering the scene new. But I'm not sure that's how it actually works. I've tried looking this stuff up, but the explanations start sounding like they're coming from graphics engineers who assume prior graphic engineering knowledge the reader probably doesn't have.

I'm using a GTX 1070 now, which is old, and I've been looking to upgrade. The nVidia line of hamstrung gaming processors are more affordable than the fully unlocked ones, but I'm concerned that my graphic art rendering will be massively negatively impacted by the hamstringing.

So I guess the question is, are the same things in video cards that render games so fast and well the same bits AND is used the same way in iRay rendering of graphic art? My guess is probably yes, but I'm uncertain how ray tracing rendering in games works compared to iRay rendering (which is ray-tracing) a still image in fairly high resolution. The game seems to run that rendering a whole hell of a lot faster.

Could someone who understand this stuff better than I please explain the difference (if any) in terms anyone can understand? It'd help those of us who game, and do art, better understand the relationship and make better video card buying decisions.

Games don't use pre-rendered scenes, it's the opposite. Rendering something in 'not-real-time' is pre-rendering.

To be honest none of the following is relevant to your purchasing decision. Any gaming GPU will render your DAZ content just fine if DAZ is utilizing GPU rendering. If it isn't, then the rendering is happening on the CPU and the GPU doesn't even matter except to show the viewport with acceptable performance.

"Ray tracing" is a meta term that encompasses several different techniques on the CPU or the GPU (ray tracing was first done on the CPU before GPUs even existed). 'Art' and movie VFX rendering don't use the same algorithms that games do. There are similarities, yes, but as another user said, games use approximations, shortcuts, and a lot of "cheats" in order to run in real time and this means that their rendering is not fully accurate to life, it is merely 'good enough' smoke and mirrors and comes with a lot of limitations and things you cannot do. Pre-rendering takes so long because it's not using any shortcuts or cheats and is fully accurate with a complete simulation of photons bouncing all over the place.

Some examples: games don't do real transparency and translucency phenomena like caustics or index of refraction, nor light casting from blackbody radiation, nor fluid and particle simulation because those are all too computationally expensive to run in real time. To diminish the workload games also don't render everything with the highest detail; they use a Level of Detail system to switch from high-resolution assets that are close to the game camera to less and less detailed assets farther away from the camera (and very often no asset at all if the object isn't in-camera, which has implications for light bounce and reflection), while VFX rendering uses the highest quality assets at all distances.
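The Level of Detail idea can be sketched in a few lines. This is only an illustration: the distance thresholds and asset names below are hypothetical, and real engines tune them per asset and use more elaborate rules.

```python
# Hypothetical (max distance, asset variant) pairs, nearest first.
LOD_LEVELS = [
    (10.0, "high_detail_mesh"),
    (50.0, "medium_detail_mesh"),
    (200.0, "low_detail_mesh"),
]

def pick_lod(distance_to_camera, in_view=True):
    """Return which asset variant to draw, or None if the object is skipped."""
    if not in_view:
        return None  # off-camera objects are often not drawn at all
    for max_distance, asset in LOD_LEVELS:
        if distance_to_camera <= max_distance:
            return asset
    return None  # beyond the last threshold: not worth drawing

print(pick_lod(5.0))
print(pick_lod(30.0))
print(pick_lod(500.0))
```

An offline renderer in this picture would simply always use the highest-detail asset, whatever the distance.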

A lot of this is highly technical and involves things like forward vs deferred rendering and whether your ray tracing calculations are emitting from light sources or from the camera (IRL photons come from light sources, bounce, and enter the eye/camera, but some types of ray tracing do the opposite; they shoot a ray out from the camera to bounce around the scene).
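For the "shoot a ray out from the camera" variant, here is a minimal sketch. It assumes a single sphere, a camera at the origin looking down the negative z axis, and one ray per pixel with no bounces; the scene values and function names are invented for the example and are not taken from any particular renderer.

```python
import math

def ray_sphere_hit(origin, direction, center, radius):
    """Distance along a normalized ray to the nearest sphere hit, or None."""
    oc = [o - c for o, c in zip(origin, center)]
    b = 2.0 * sum(d * e for d, e in zip(direction, oc))
    c = sum(e * e for e in oc) - radius * radius
    disc = b * b - 4.0 * c  # quadratic 'a' is 1 because direction is normalized
    if disc < 0:
        return None  # the ray misses the sphere
    t = (-b - math.sqrt(disc)) / 2.0
    return t if t > 0 else None

def render(width=9, height=5):
    """Trace one camera ray per pixel and mark hits with '#'."""
    camera = (0.0, 0.0, 0.0)
    sphere_center, sphere_radius = (0.0, 0.0, -3.0), 1.0
    rows = []
    for j in range(height):
        row = ""
        for i in range(width):
            # Map the pixel to a point on an image plane at z = -1.
            x = (i + 0.5) / width * 2.0 - 1.0
            y = 1.0 - (j + 0.5) / height * 2.0
            length = math.sqrt(x * x + y * y + 1.0)
            direction = (x / length, y / length, -1.0 / length)
            hit = ray_sphere_hit(camera, direction, sphere_center, sphere_radius)
            row += "#" if hit is not None else "."
        rows.append(row)
    return rows

for line in render():
    print(line)
```

Tracing from the camera means every ray that gets computed ends up contributing to a pixel, whereas rays started at a light source can bounce around without ever reaching the camera.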

> "To be honest none of the following is relevant to your purchasing decision. Any gaming GPU will render your DAZ content just fine if DAZ is utilizing GPU rendering. If it isn't, then the rendering is happening on the CPU and the GPU doesn't even matter except to show the viewport with acceptable performance."

They mention in a reply that DAZ only supports CUDA for GPU-accelerated content creation so AMD cards will not work. Only Nvidia cards have CUDA support. https://developer.nvidia.com/cuda-faq

Yeah I got ninja'd by their response, and yours too, before I could do an edit.

CUDA is one of my biggest irks when it comes to GPU computation because it's proprietary and I am loath to support nvidia's business practices.

and at current street prices, for former products, the "Devil may Cry"

Slight typo

6660XT sounds a bit ominous

The RX 6950XT, RX 6750XT, and RX 6660XT are heading to retailers today

That confirms it plays Doom, really really well!

So a bigger electric bill and possible new power supply for some faster clock speeds? I'm struggling to see big value here or on the visible horizon. What am I missing?

It's not meant to upsell existing 6000 series owners. They're just refreshes of existing products. The Hardware Unboxed review of the 6650XT puts it at 6% faster than the 6600XT for only 2% more power. This replaces the 6600XT on their lineup.

It's also 6% more expensive than the 6600 XT and I'd expect that the others follow similarly.

That hardly represents value if AMD is going to scale pricing that way. Unless that 6% somehow makes something that was barely playable, playable now. I doubt that's the case however.

My thoughts exactly. If both performance and price go up by the same amount then it's not an upgrade, aside from the case of halo products. If it's the fastest then you almost always get a higher price jump than performance jump, but for low to mid tier products I expect performance to increase by more than price. I'll pass.
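To put rough numbers on that, here is a quick sketch using only the figures quoted in this thread for the 6650XT versus the 6600XT (6% faster, 2% more power, 6% more expensive). The helper name is made up, and the percentages are the posters' claims rather than anything measured here.

```python
def relative_value(perf_gain, cost_gain):
    """New-card performance per unit of cost, relative to the old card (1.0 = no change)."""
    return (1.0 + perf_gain) / (1.0 + cost_gain)

# Figures as quoted in the thread.
perf_gain = 0.06   # 6% faster
price_gain = 0.06  # 6% more expensive
power_gain = 0.02  # 2% more power

print("performance per dollar:", relative_value(perf_gain, price_gain))
print("performance per watt:  ", relative_value(perf_gain, power_gain))
```

On those numbers, performance per dollar comes out flat while performance per watt improves by a few percent, which is the "not an upgrade" point being made above.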

NeoMorpheus

Ars Tribunus Militum

It's great to see prices returning to MSRP, but how much has MSRP for a given class of GPU increased?

Thanks to Nvidia, way too much.

They started this when they released the RTX 20 series.

They knew that AMD didn't have anything to compete with, so they increased the MSRP by around $200 and haven't stopped since.

Just check how much they are charging for a 3090 Ti.

NeoMorpheus

Ars Tribunus Militum

Everything that Nvidia does and releases has one objective: to keep you locked to their hardware.

That's why I refuse to give them a penny.

Meh prices for meh performance.

The RX 6950 XT would make a bit of sense compared to RTX 3080 Ti and RTX 3090... but neither of those GPUs made any sense in the first place.

At this point, might as well just wait for RX 7000 and RTX 4000. Even if you plan on buying RX 6000 or RTX 3000, as long as crypto doesn't somehow blow up 4-5x, prices can only really go down, especially for used, former mining GPUs.

I wouldn't buy ex-mining GPUs, unless you like random crashes and visual artifacts. They tend to get pretty trashed.

Has there ever been a proper study of this? These are always the first concerns people have with ex-mining cards, and while they're reasonable concerns it all seems to be hearsay and speculation.

On the other hand people make claims that the cards have all been undervolted and saved from the effects of thermal cycling due to being on all the time.

Before I pass over a cheap GPU stained with the tears of a miner, a good study would be prudent.

My educated guess is that a sanely utilized mining GPU will be the same as or better than a GPU utilized for rendering or gaming when the total number of ownership hours is similar, but that there are too many variables to actually run an effective study.

Assuming the miners are sane and aren't making any GPU modifications other than undervolting the card and potentially upping the memory clocks, the cards should age pretty gracefully due to the comparatively lower power/thermal load and the lack of thermal cycling that you mentioned. Unfortunately, you also don't have any assurances that the miner didn't do something stupid to the card to try and improve the card's hashing performance (uninformed overclocking, repeatedly flashing the card's VBIOS, flashing the card with third party VBIOS, etc.), and you can be reasonably sure that if you're buying a used card from a miner that they likely won't be forthcoming with anything they could have done to negatively impact the long-term health of the card.

I waited so long for a GPU at a decent price that at this point I will wait for a RTX 4xxx or AMD RX 7xxx.

That's an interesting point of view.

I got my beloved Sapphire 580 8 GB for $309 back in July of '18. It was mid-rangeish back then. Today NewEgg won't sell me an equivalent card for less than $343. Buy it now on eBay starts at $250. A combination of mining, everyone being home for 18 months saying "I bet I could make a gaming PC," and the chip shortage FUBARed the market. Some of that stuff is unique to 2022, and will likely not happen again. BTC rising in value again, OTOH, is highly likely, and when that happens a graphics card that can do cryptography real fast will become extremely valuable.

I do not understand people who honestly believe they can wait on a graphics card, and one will be available for a non-FUBARed price at some indeterminate point in the future.

Maybe you realized your 3-year-old-card that was mid-range then serves your needs fine?

Meh prices for meh performance.

The RX 6950 XT would make a bit of sense compared to RTX 3080 Ti and RTX 3090... but neither of those GPUs made any sense in the first place.

At this point, might as well just wait for RX 7000 and RTX 4000. Even if you plan on buying RX 6000 or RTX 3000, as long as crypto doesn't somehow blow up 4-5x, prices can only really go down, especially for used, former mining GPUs.

I wouldn't buy ex-mining GPUs, unless you like random crashes and visual artifacts. They tend to get pretty trashed.

How does that work? Electronics are not analog. They either function to spec, or they don't.

The miners may have a custom firmware of some sort that is not intended to be good for games, but that can be replaced. They are more likely to have undervolted the cards to save money on power than overclocked the cards, and overclocking is much more likely to burn out chips/bust capacitors/etc. than under-clocking.

I mean, weird unlikely edge cases can exist, where an underclocked mining GPU will function well enough that you can game on it for two hours and then it kills the system, but those have to be really weird edge cases.

onlysublime

Ars Tribunus Militum

Is there any chance modern consoles (Series X) get FSR 2.0?

Yes. At the recent Game Developers' Conference AMD confirmed that FSR 2.0 is coming to PS5/XSX/XSS consoles.

Actually, AMD only confirmed Xbox and Nvidia 1070 and up cards (and AMD cards, of course)...

The thing is... I don't know a lot of gamers that game around the clock 24/7 and while I also don't know a lot of miners I would kind of guess they do mine around the clock.

It's not easy to explain, but I don't think I'm getting a lot of value by paying MSRP for a card that is ending its life cycle, compared to paying 2x or 1.5x or whatever MSRP some months ago for a card that had a lot of life left in its cycle.

Games evolve and when I put my 500 or something euro on it I want something which will hold its own for some time.

If AMD or nVidia feel a sudden need to discount their current generation offerings then I can think it over. No, I don't think that will happen.

My needs are to play good games. I have a long Steam account filled with probably a thousand games, some older, some newer, some just to remember.

In the last few months I've played not-so-new games, those I expect to play later this year.

I don't know if any of this makes sense...

onlysublime

Ars Tribunus Militum

I don't know how anyone can justify spending 2x for a card a few months ago versus spending MSRP now. The performance of the card didn't change. It's the same performance now as it was a few months ago. If spending 2x for that card is worth it to you, then it's worth it to you. But for a lot of people, it isn't. For a lot of people, performance is performance.

structuralnoise

Seniorius Lurkius

If this has not been answered yet, the key thing in your question is that you're using iRay. It is made by Nvidia, so you will definitely benefit from a new GPU from them. An AMD one, on the other hand, would limit you to using only the CPU.

All the flashy graphics and marketing speak can be found on Nvidia's website:

https://www.nvidia.com/en-us/design-visualization/iray/

AlexisR200X

Ars Praefectus

So a bigger electric bill and possible new power supply for some faster clock speeds? I'm struggling to see big value here or on the visible horizon. What am I missing?

A bigger electric bill plus an undeserved premium price tag for what is functionally no more than a couple extra frames per second. This is AMD cashing in on the supply shortage by binning product that could have alleviated the supply of their previous 00 models.
