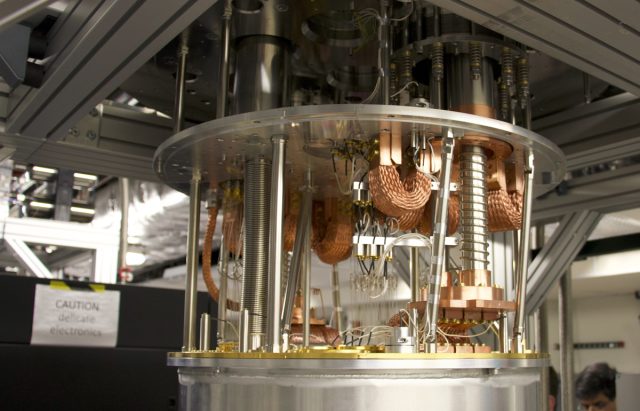

YORKTOWN HEIGHTS, NY—I’m in a room with one possible future for computing. The computer itself is completely unimposing, looking like a metal tank suspended from the ceiling. What makes an impression is the noise, a regular metallic ping that dominates the room. It’s the sound of a cooling system designed to take hardware to the edge of absolute zero. And the hardware being cooled isn’t a standard chip; it’s IBM’s take on quantum computing.

In 2016, IBM made a lot of noise when it invited the public to try out an early iteration of its quantum computer, one hosting only five qubits. That's far too few to do any serious calculations but more than enough for people to gain some real-world experience with programming on the new technology. Amidst some rapid progress, IBM installed more tanks in its quantum computing room and added new processors as they were ready. As the company scaled up the number of qubits to 20, it optimistically announced that 50-qubit hardware was on its way.

During our recent visit to IBM’s Thomas J. Watson Research Center, the company’s researchers were far more circumspect, making clear that they weren’t making promises and that 50-qubit hardware is just a stepping stone toward quantum computing’s future. But they did make the case that IBM is well-positioned to be part of that future, in part because of the ecosystem the company is building up around these early efforts.

Building blocks to chips

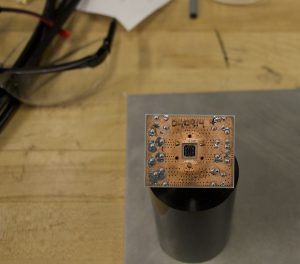

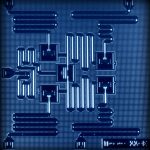

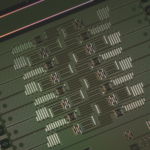

For its qubits, IBM uses superconducting wires linked to a resonator, all built on top of a silicon wafer. The wire and wafer let the company leverage its experience building circuitry, but in this case, the wire is a mix of niobium and aluminum, which allows it to superconduct at extremely low temperatures. Jerry Chow, who showed us around the hardware testing room, says the company is still experimenting with the details of how to improve its qubits, testing different formulations and geometries individually or in pairs.
