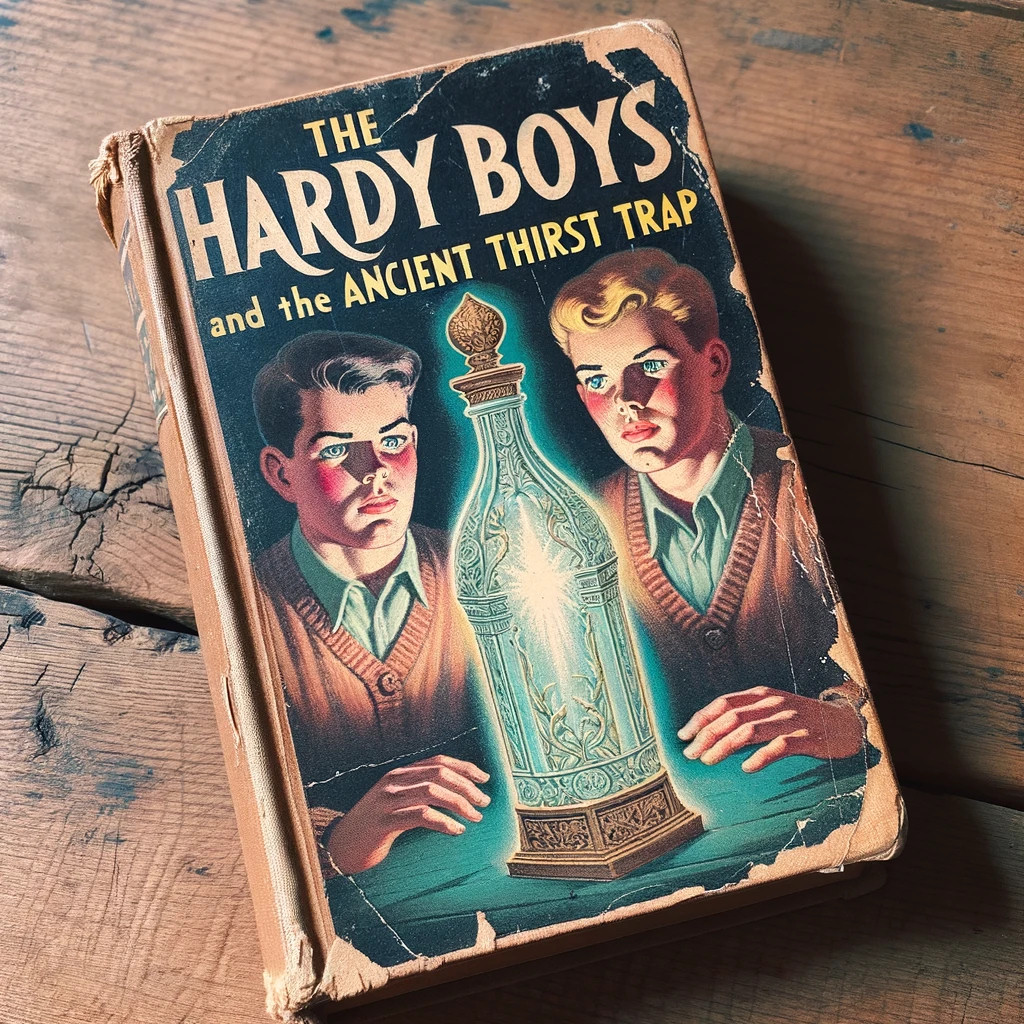

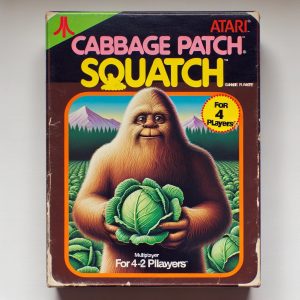

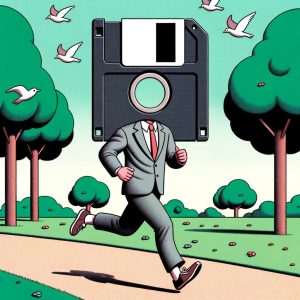

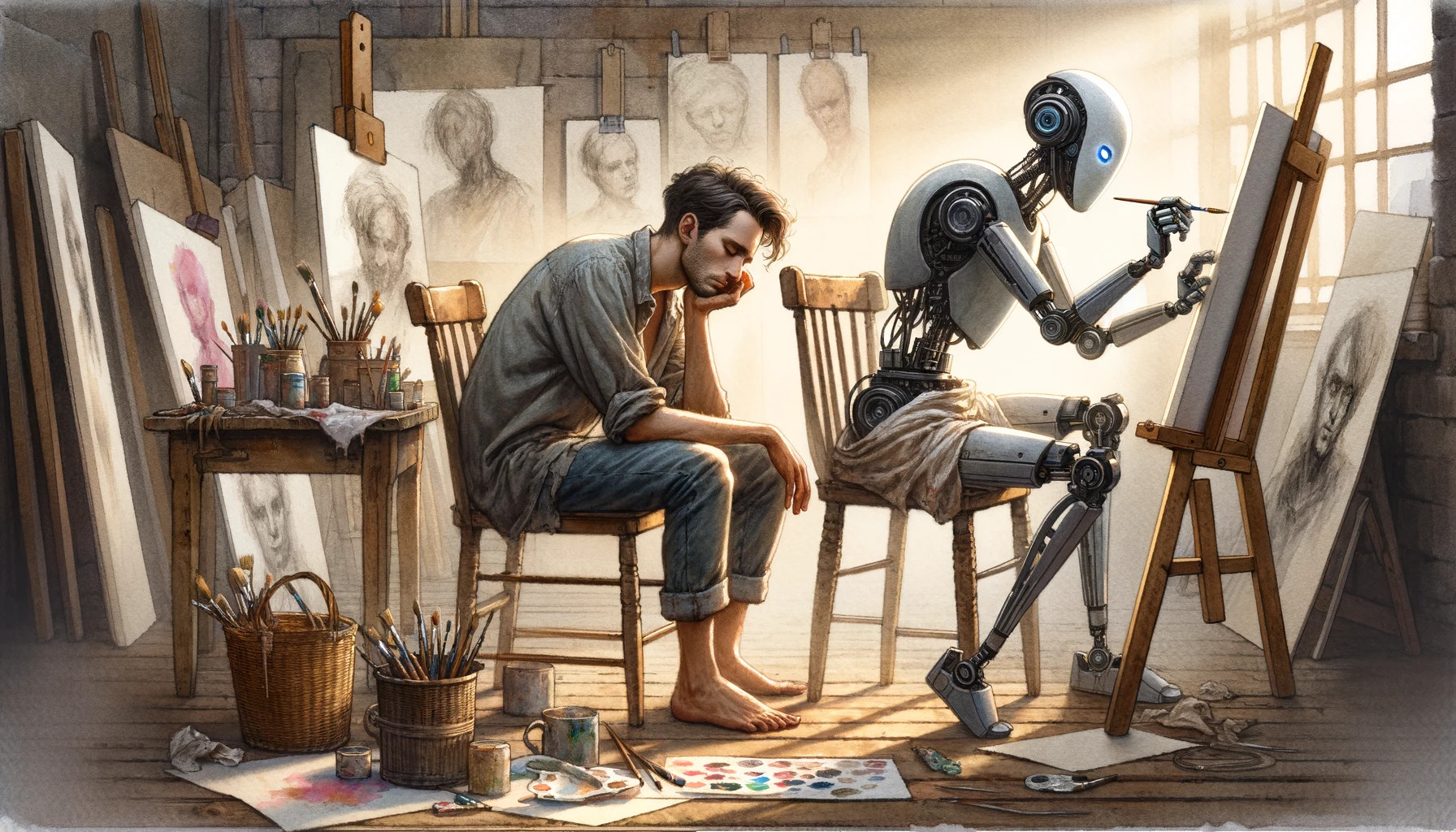

In October, OpenAI launched its newest AI image generator—DALL-E 3—into wide release for ChatGPT subscribers. DALL-E can pull off media generation tasks that would have seemed absurd just two years ago—and although it can inspire delight with its unexpectedly detailed creations, it also brings trepidation for some. Science fiction forecast tech like this long ago, but seeing machines upend the creative order feels different when it’s actually happening before our eyes.

“It’s impossible to dismiss the power of AI when it comes to image generation,” says Aurich Lawson, Ars Technica’s creative director. “With the rapid increase in visual acuity and ability to get a usable result, there’s no question it’s beyond being a gimmick or toy and is a legit tool.”

With the advent of AI image synthesis, it’s looking increasingly like the future of media creation for many will come through the aid of creative machines that can replicate any artistic style, format, or medium. Media reality is becoming completely fluid and malleable. But how is AI image synthesis getting more capable so rapidly—and what might that mean for artists ahead?

Using AI to improve itself

We first covered DALL-E 3 upon its announcement from OpenAI in late September, and since then, we’ve used it quite a bit. For those just tuning in, DALL-E 3 is an AI model (a neural network) that uses a technique called latent diffusion to pull images it “recognizes” out of noise, progressively, based on written prompts provided by a user—or in this case, by ChatGPT. It works using the same underlying technique as other prominent image synthesis models like Stable Diffusion and Midjourney.
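
For a rough intuition of what "pulling an image out of noise, progressively" means, the toy sketch below starts from random values and repeatedly removes a fraction of the estimated noise. It is not DALL-E 3's algorithm. A real diffusion model uses a trained neural network to estimate the noise at each step, guided by the text prompt, and a latent diffusion model does this in a compressed representation rather than on pixels. Here the hypothetical `predict_noise` function cheats by knowing the answer, and every name (`TARGET`, `denoise`, `error`) is an illustrative assumption.

```python
import random

# Stand-in for the "image" a prompt describes: a tiny list of pixel values.
# Assumption for illustration only: a real model has no such target on hand;
# a trained network steers the process instead.
TARGET = [0.0, 0.25, 0.5, 0.75, 1.0]


def predict_noise(sample, target):
    """Hypothetical noise predictor: the part of `sample` that is not `target`.

    A real diffusion model learns this estimate from training data
    and conditions it on the text prompt.
    """
    return [s - t for s, t in zip(sample, target)]


def denoise(steps=50, step_size=0.2, seed=0):
    """Start from pure noise and remove a fraction of the predicted noise each step."""
    rng = random.Random(seed)
    sample = [rng.gauss(0.0, 1.0) for _ in TARGET]  # pure random noise
    history = [list(sample)]
    for _ in range(steps):
        noise = predict_noise(sample, TARGET)
        sample = [s - step_size * n for s, n in zip(sample, noise)]
        history.append(list(sample))
    return history


def error(sample):
    """Largest per-pixel distance from the target."""
    return max(abs(s - t) for s, t in zip(sample, TARGET))


if __name__ == "__main__":
    history = denoise()
    print(f"error at start: {error(history[0]):.4f}")
    print(f"error at end:   {error(history[-1]):.6f}")
```

Each pass leaves the values a little closer to the target, which is the "progressive" part of the description. The hard part in practice, learning what noise to remove for a given prompt, is exactly what this sketch leaves out.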

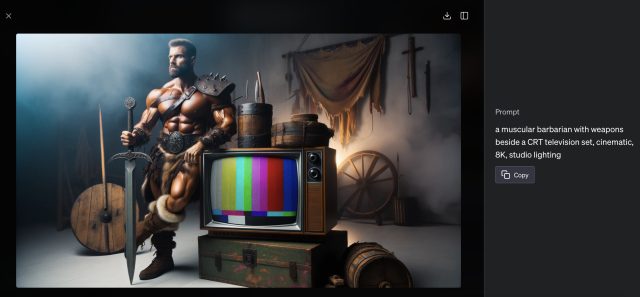

You type in a description of what you want to see, and DALL-E 3 creates it.

ChatGPT and DALL-E 3 currently work hand-in-hand, turning AI art generation into an interactive, conversational experience. You tell ChatGPT (through the GPT-4 large language model) what you’d like it to generate, and it writes detailed prompts for you and submits them to the DALL-E backend. DALL-E returns the images (usually two at a time), and they appear in the ChatGPT interface, whether on the web or in the ChatGPT app.
