On top of Wednesday’s news that Nvidia’s earnings came in far better than expected, Reuters reports that Nvidia CEO Jensen Huang expects the AI boom to last well into next year. As a testament to this outlook, Nvidia will buy back $25 billion of its shares, which happen to be worth triple what they were just before the generative AI craze kicked off.

“A new computing era has begun,” said Huang breathlessly in an Nvidia press release announcing the company’s financial results, which include quarterly revenue of $13.51 billion, up 101 percent from a year ago and 88 percent from the previous quarter. “Companies worldwide are transitioning from general-purpose to accelerated computing and generative AI.”

For those just tuning in, Nvidia enjoys what Reuters calls a “near monopoly” on hardware that accelerates the training and deployment of the neural networks behind today’s generative AI models, along with a 60–70 percent share of the AI server market. In particular, thanks to their massively parallel architecture, its data center GPUs are exceptionally good at performing the billions of matrix multiplications needed to run neural networks. In this way, hardware architectures that originated as video game graphics accelerators now power the generative AI boom.
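Why does a parallel chip suit this work so well? In a matrix product, every output cell is its own dot product, and no cell needs any other cell’s result. The toy Python sketch below (our own illustration, not Nvidia code, and not how a GPU actually schedules work) makes that independence explicit by handing each cell to a thread pool as a separate task. The function names `dot` and `matmul_parallel` are hypothetical. In CPython, these threads won’t actually speed anything up; the point is the structure, which a GPU exploits by running thousands of such independent calculations at once.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import product


def dot(row, col):
    # One output cell: a sum of pairwise products (multiply-adds).
    return sum(a * b for a, b in zip(row, col))


def matmul_parallel(A, B, workers=8):
    """Multiply A (n x k) by B (k x m), treating each output cell as an
    independent task. No cell depends on any other cell's result, which
    is why the work can be spread across many parallel units."""
    n, k, m = len(A), len(B), len(B[0])
    assert all(len(row) == k for row in A), "inner dimensions must match"
    # Pre-extract the columns of B so each task is a plain row-by-column dot product.
    cols = [[B[r][c] for r in range(k)] for c in range(m)]
    cells = list(product(range(n), range(m)))  # every (i, j) output position
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map yields results in the same order as `cells`.
        values = list(pool.map(lambda ij: dot(A[ij[0]], cols[ij[1]]), cells))
    C = [[0] * m for _ in range(n)]
    for (i, j), v in zip(cells, values):
        C[i][j] = v
    return C


if __name__ == "__main__":
    print(matmul_parallel([[1, 2], [3, 4]], [[5, 6], [7, 8]]))  # [[19, 22], [43, 50]]
```

An n × k by k × m product takes n·k·m multiply-adds. For two 4096 × 4096 matrices, that is 4096³, or about 68.7 billion, for a single multiplication, and neural networks perform such products over and over.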

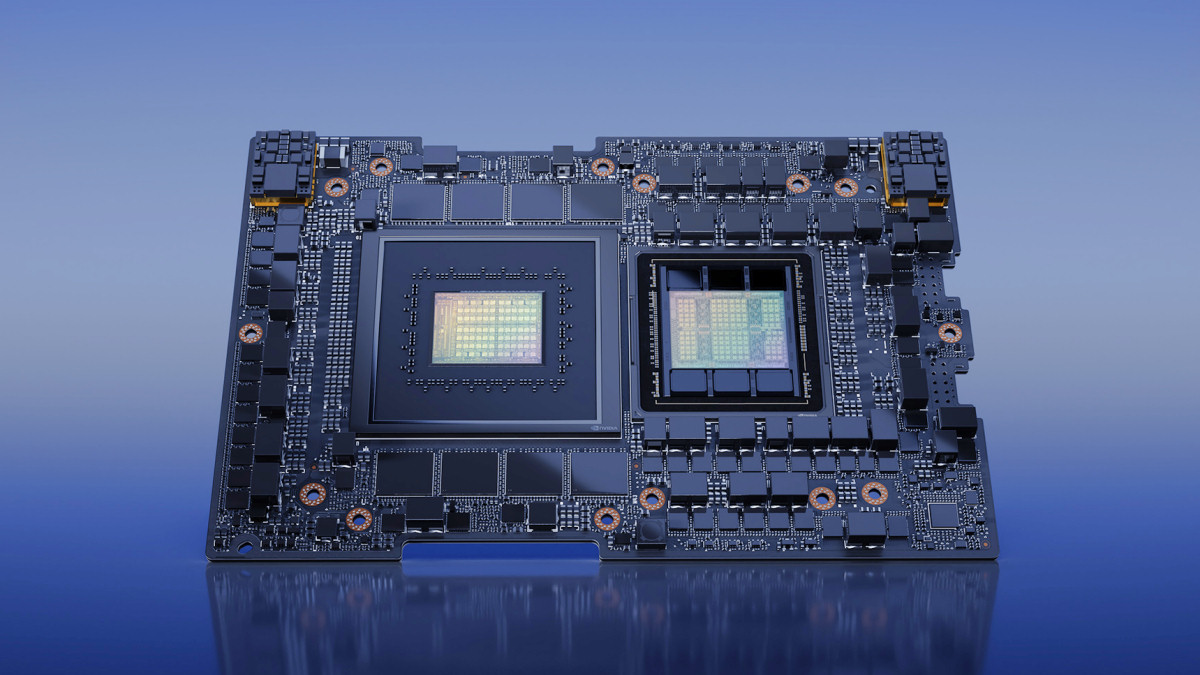

Nvidia’s most popular AI hardware products currently include the A100 and H100 data center GPUs. Nvidia has also combined the H100 with a CPU in a package called the GH200 “Grace Hopper” chipset, which powers Nvidia’s line of computer systems. These are not consumer-grade gaming GPUs like the GeForce RTX 4090 (The Verge reports that the H100 sells for roughly $40,000), and they can execute far more calculations every second.