This is the second episode in our exploration of “no-code” machine learning. In our first article, we laid out our problem set and discussed the data we would use to test whether a highly automated ML tool designed for business analysts could return cost-effective results that approach the quality of more code-intensive methods, which involve a bit more human-driven data science.

If you haven’t read that article, you should go back and at least skim it. If you’re all set, let’s review what we’d do with our heart attack data under “normal” (that is, more code-intensive) machine learning conditions and then throw that all away and hit the “easy” button.

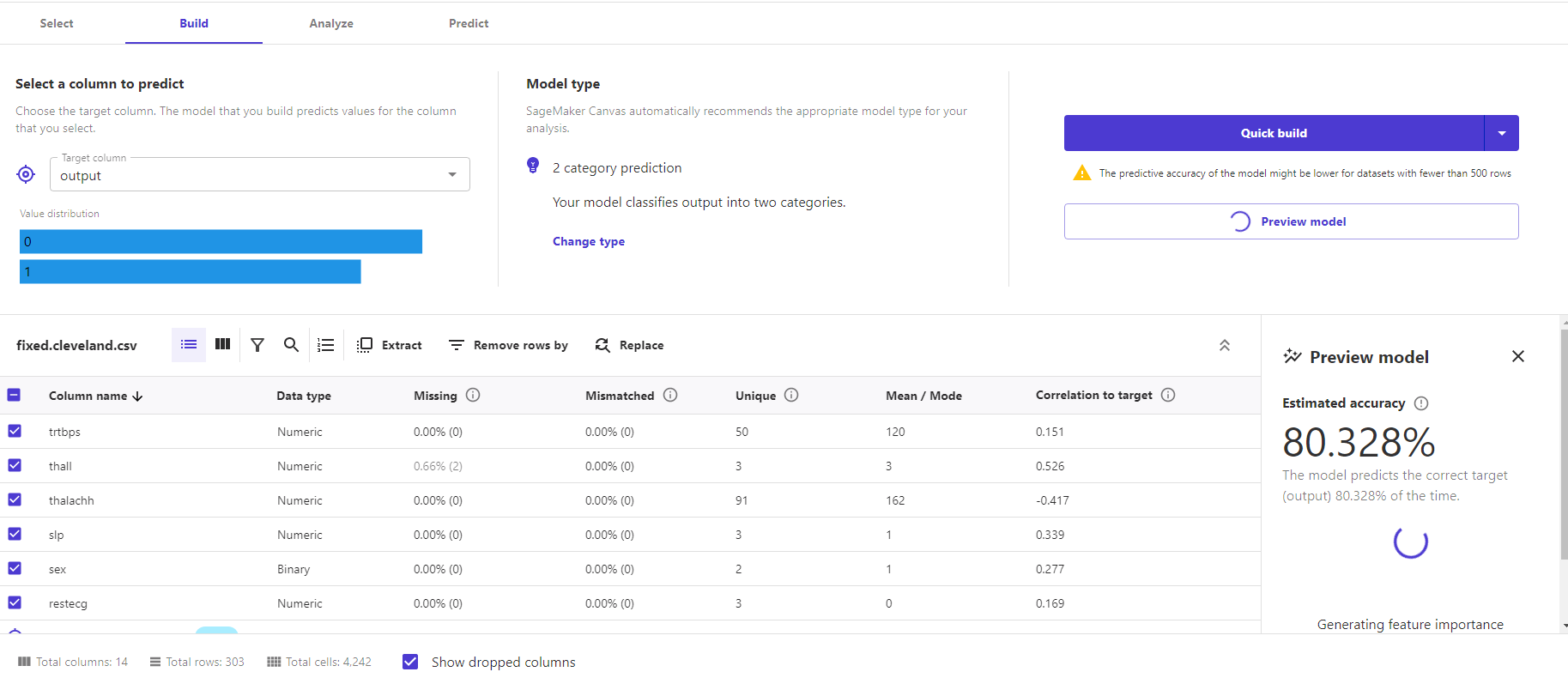

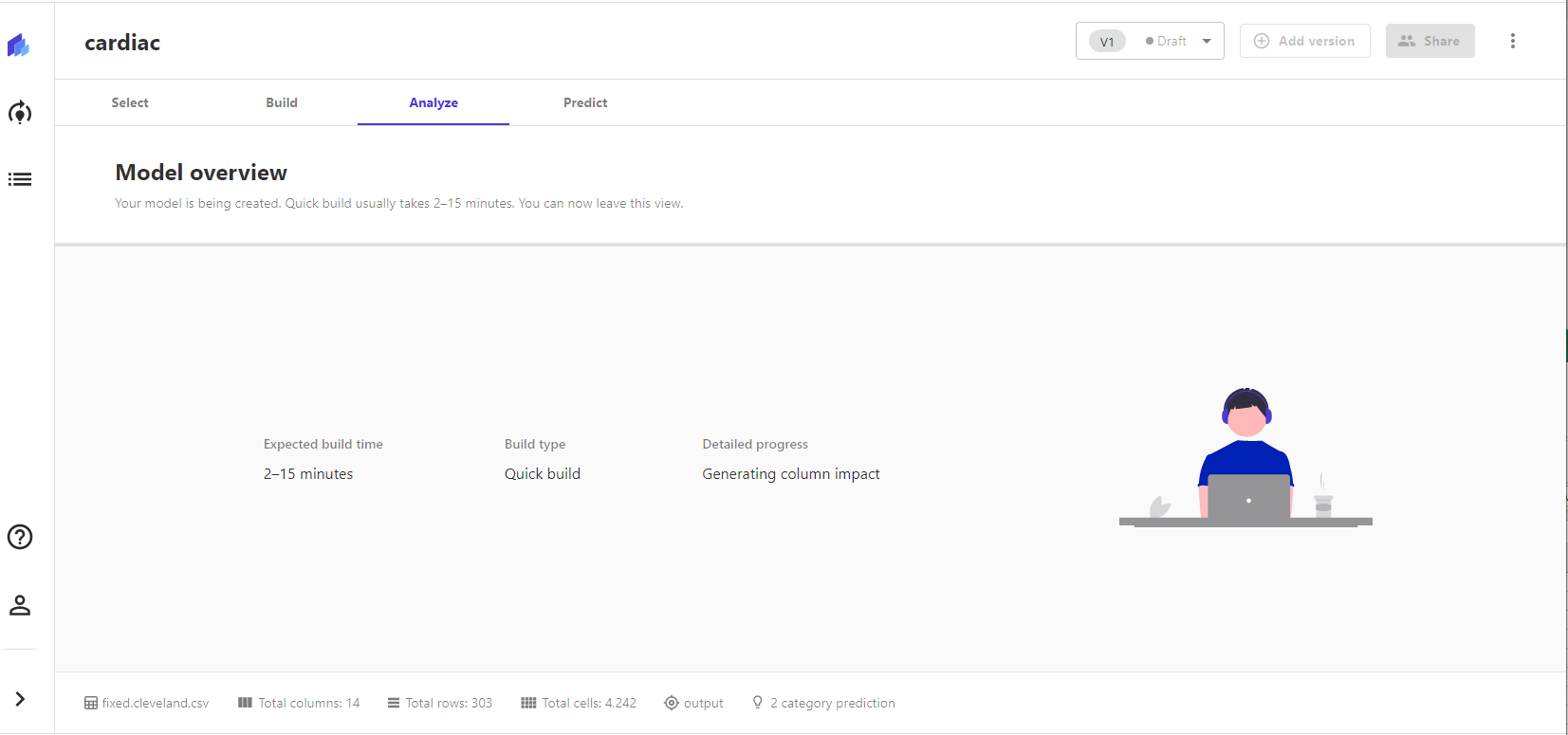

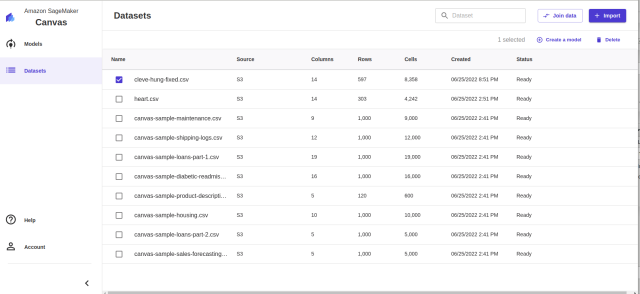

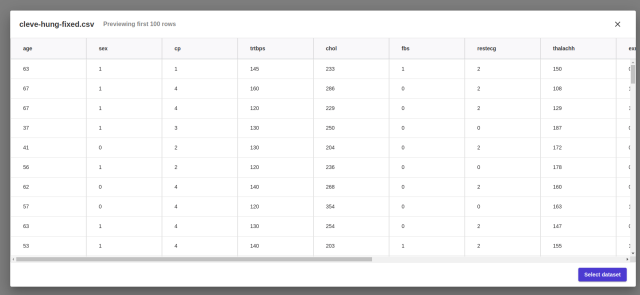

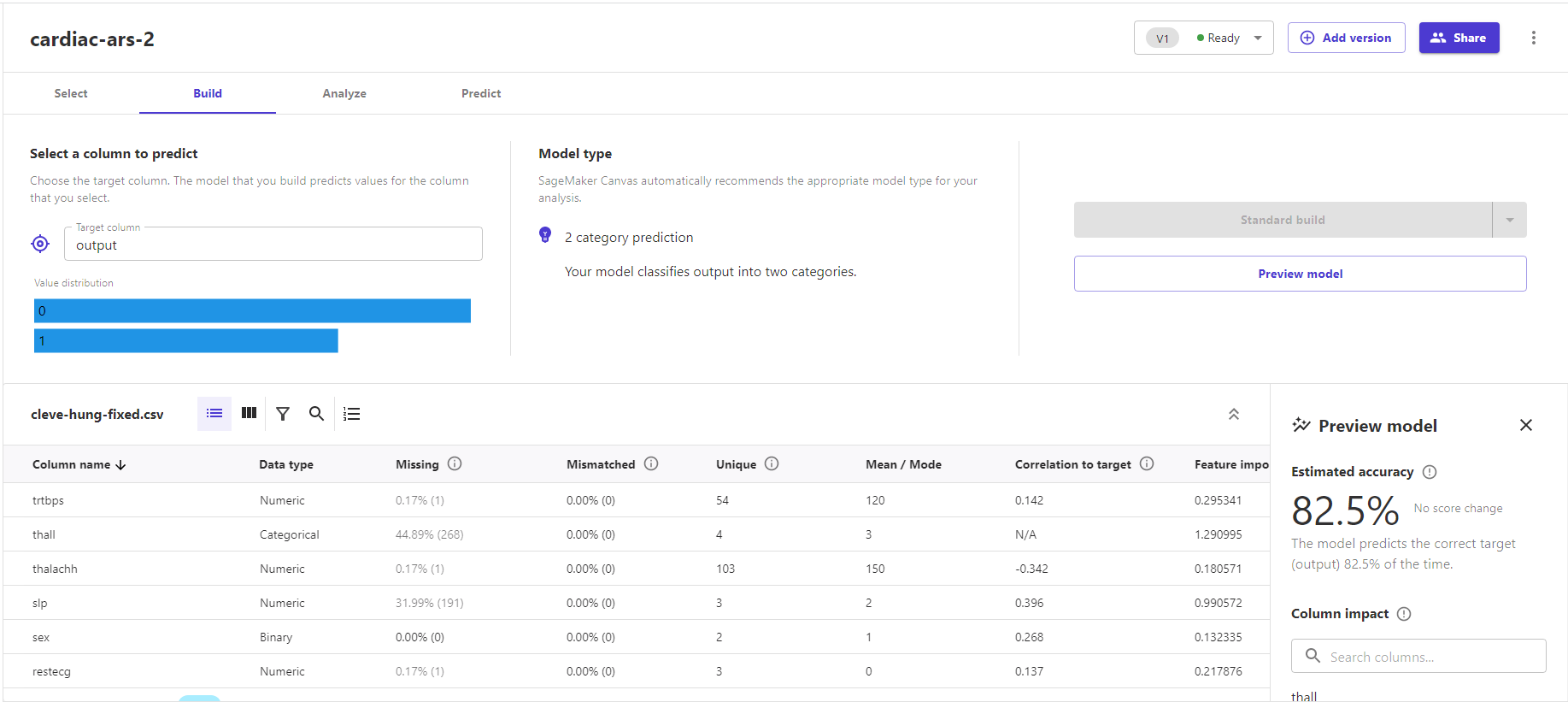

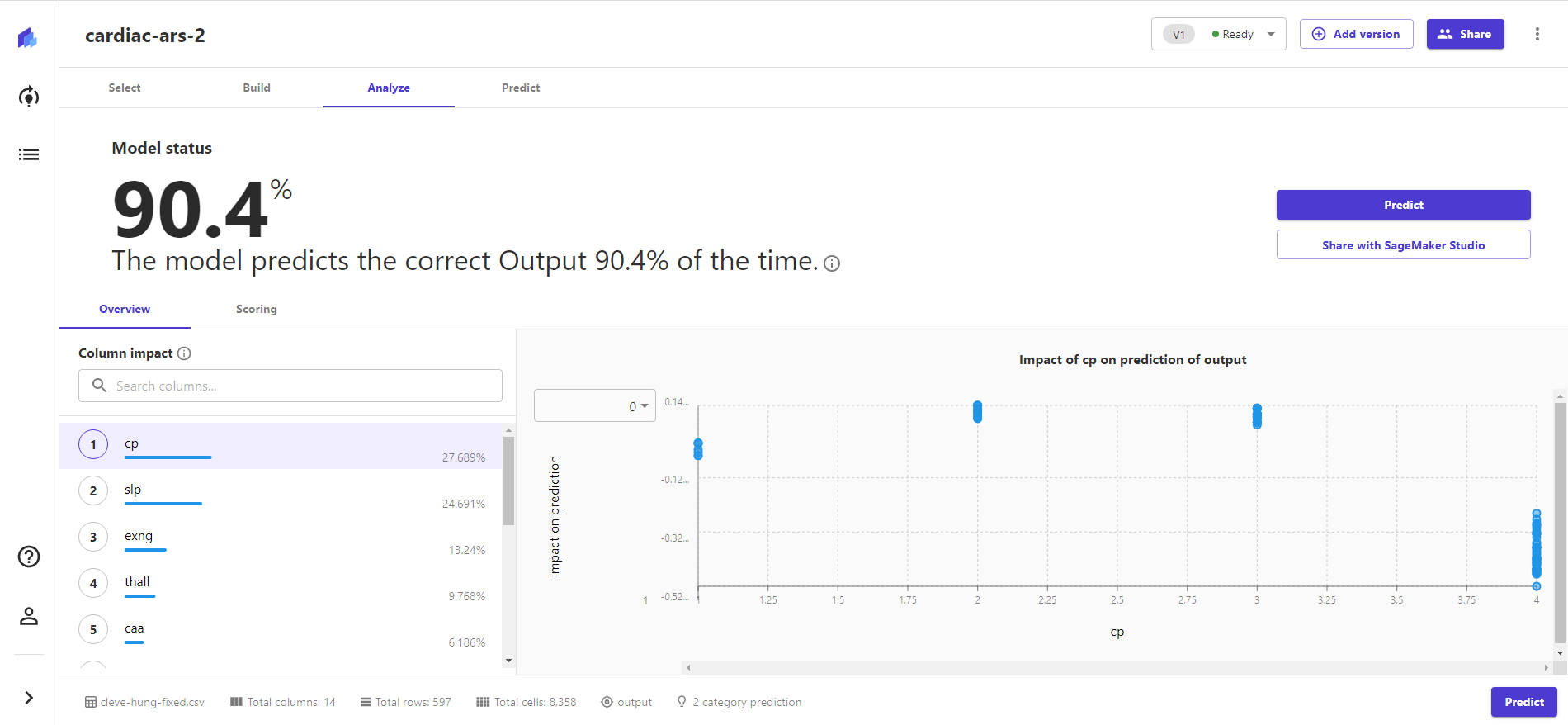

As we discussed previously, we’re working with a set of cardiac health data derived from a study at the Cleveland Clinic Foundation and the Hungarian Institute of Cardiology in Budapest (as well as other places whose data we’ve discarded for quality reasons). All that data is available in a repository we’ve created on GitHub, but its original form is part of a repository of data maintained for machine learning projects by the University of California, Irvine. We’re using two versions of the data set: a smaller, more complete one consisting of 303 patient records from the Cleveland Clinic and a larger (597-patient) one that incorporates the Hungarian Institute data but is missing two of the types of data from the smaller set.

The two fields missing from the Hungarian data seem potentially consequential, but the Cleveland Clinic data itself may be too small a set for some ML applications, so we’ll try both to cover our bases.
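To make the trade-off between the two sets concrete, here is a minimal sketch of how a combined set like the larger one could be assembled: keep only the fields that every record has, then stack the records. The field names (`field_x`, `field_y`, and the rest) and the rows are hypothetical placeholders, not the actual columns or patients in the data, and this is not necessarily how our combined file was built.

```python
def shared_fields(*record_sets):
    """Return the field names present in every record, in first-seen order."""
    all_records = [rec for records in record_sets for rec in records]
    if not all_records:
        return []
    common = set(all_records[0])
    for rec in all_records[1:]:
        common &= set(rec)
    # Preserve the column order of the first record for readability.
    return [name for name in all_records[0] if name in common]


def combine_on_shared_fields(*record_sets):
    """Stack all records, dropping any field that some record lacks."""
    fields = shared_fields(*record_sets)
    return [
        {name: rec[name] for name in fields}
        for records in record_sets
        for rec in records
    ]


# Hypothetical toy rows: the "complete" set has two extra fields
# (field_x, field_y) that the "partial" set does not record.
complete_set = [
    {"age": 54, "resting_bp": 130, "field_x": 1, "field_y": 3, "diagnosis": 0},
    {"age": 61, "resting_bp": 145, "field_x": 0, "field_y": 7, "diagnosis": 1},
]
partial_set = [
    {"age": 49, "resting_bp": 140, "diagnosis": 1},
]

combined = combine_on_shared_fields(complete_set, partial_set)
print(len(combined), list(combined[0]))
```

The combined set gains rows but loses the two extra fields, which is exactly the tension described above: more patients to train on versus fewer, possibly consequential, features.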

The plan

With multiple data sets in hand for training and testing, it was time to start grinding. If we were doing this the way data scientists usually do (and the way we tried last year), we would be doing the following:
