Welcome to a supplemental edition of our “Web Served” series, a DIY guide on tackling the challenges of setting up and running a Web server for fun. It’s been a while since we last published an entry; so long, in fact, that at some point very soon I’ll be going back through the series and bringing everything up to date with current versions and commands. But after spending the last weekend tinkering with shifting my personal site over to all-HTTPS, it was just too much fun not to share.

Note that if you’re not the kind of person who thinks screwing around with the command line is fun, this probably isn’t a guide you’re going to be interested in.

Encrypt all the things

The unencrypted Web is on the way out, and that’s a good thing. We’re still making the switch here at Ars: subscriptors can use HTTPS today, but we’re still working out the mixed-content kinks for everyone else. (The main holdup is handling the ad networks; since subscriptors don’t see ads, there’s no holdup there!) But if you’ve followed along with the previous Web Served pieces, you’ve probably got a shiny Nginx instance happily serving up pages and an SSL/TLS certificate so that privacy-minded visitors have the option of using HTTPS on your site.

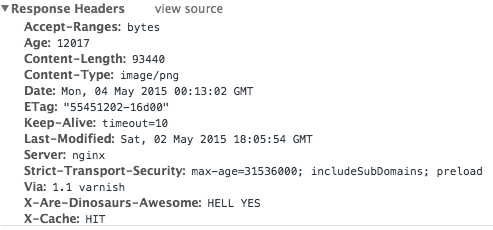

In this guide, we’re going to take things a step further and make everything HTTPS for everyone. At the same time, we’re going to start participating in HSTS (that’s “HTTP Strict Transport Security”), a way for your site to tell your visitors’ browsers that you not only support HTTPS but insist on it.
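To make the HSTS idea a little more concrete, here's a minimal Python sketch of the response header involved. The header name and its `max-age` and `includeSubDomains` directives come from the HSTS standard; the function name `hsts_header` and the one-year default are our own illustrative choices, not anything this guide prescribes.

```python
# Illustrative sketch only: build the HSTS response header a site would
# send over HTTPS. The function name and defaults are assumptions.

ONE_YEAR_SECONDS = 365 * 24 * 60 * 60  # 31536000


def hsts_header(max_age_seconds=ONE_YEAR_SECONDS, include_subdomains=False):
    """Return a (name, value) pair for the Strict-Transport-Security header."""
    if max_age_seconds < 0:
        raise ValueError("max-age must be a non-negative number of seconds")
    value = f"max-age={max_age_seconds}"
    if include_subdomains:
        # Tells the browser to apply the policy to every subdomain as well.
        value += "; includeSubDomains"
    return ("Strict-Transport-Security", value)


if __name__ == "__main__":
    name, value = hsts_header(include_subdomains=True)
    print(f"{name}: {value}")
```

Browsers only honor this header when it arrives over HTTPS, and they remember it for `max-age` seconds. While testing, a short value is the safer bet, since a mistake with a long one is hard to undo on the visitor's side.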

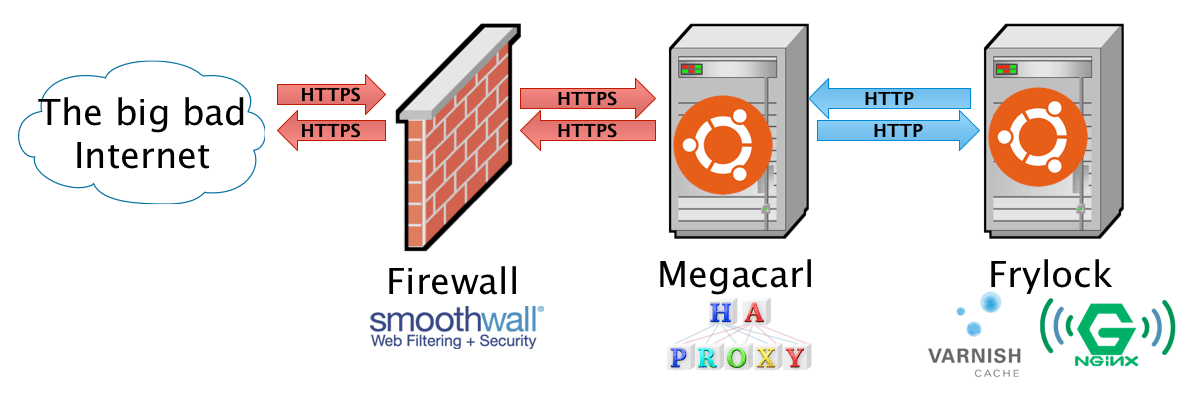

How will we accomplish this? There are a lot of potential ways, but the way I did it with my personal site (and the way I’m going to describe herein) is by employing “HTTPS termination.” In other words, we’re going to stick a reverse-proxy application in front of the Web server to handle the HTTPS part. This winds up being a lot simpler and more flexible than trying to do all-HTTPS with just your Web server’s redirection abilities. So while it may seem a little counterintuitive that adding another app to the stack is the simpler way, trust us: it really, really is.
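As a rough illustration of what the terminating layer does with plain-HTTP traffic, here's a small Python sketch that turns an incoming request into a redirect to the HTTPS version of the same URL. The function names are hypothetical, and this is only the decision logic under simple assumptions (HTTPS on the default port), not a real proxy.

```python
# Illustrative sketch only: the "send everyone to HTTPS" decision that sits
# in front of the Web server. Names here are assumptions for the example.


def strip_port(host):
    """Drop a trailing :port from a Host header value, if one is present."""
    name, sep, port = host.rpartition(":")
    if sep and port.isdigit():
        return name
    return host


def redirect_to_https(host, path):
    """Return (status, headers) redirecting a plain-HTTP request to HTTPS."""
    if not path.startswith("/"):
        path = "/" + path
    location = f"https://{strip_port(host)}{path}"
    # 301 marks the move as permanent, so clients can remember it.
    return 301, {"Location": location}


if __name__ == "__main__":
    print(redirect_to_https("example.com:80", "/blog?page=2"))
```

The appeal of doing this in one place is that the Web server behind the proxy never has to care which scheme the visitor originally used.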
