So there are a bunch of articles around comparing the two, Mini-LED and OLED.

https://www.howtogeek.com/3-reasons-why-your-next-monitor-should-be-mini-led/

And that, well, seems to make sense.

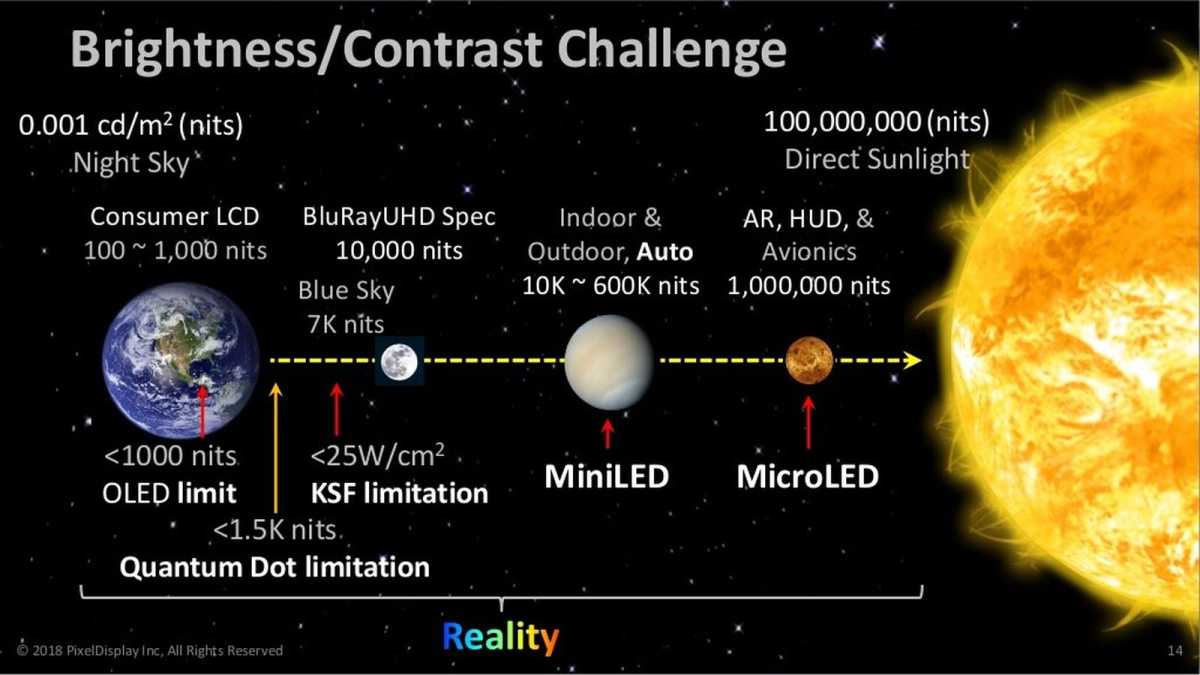

Brightness levels that OLED can't touch

from https://www.pcworld.com/article/560224/oled-vs-mini-led-the-pc-displays-of-the-future-compared.html

Then I see this about affordable next-gen WOLED monitors:

https://www.xda-developers.com/2026-will-make-oled-monitors-affordable/

> This year saw multiple hotly anticipated 4th Gen WOLED monitors make it to the market. While Asus debuted its XG27AQWMG for $700, Gigabyte announced the MO27Q28G for just $500. This brings 1440p 27" 280Hz WOLED monitors with primary RGB tandem OLED panels and a TrueBlack 500 rating to a price I don't recall having seen before.