Is distributed computing dying, or just fading into the backdrop?

- Thread starter JournalBot

- Start date

> Imagine a Beowulf cluster running...

Found an OG slashdot reader.

Upvote 9 (9 / 0)

Isn't distributed computing still bigger today (in terms of compute & participants) than it was in its heyday (in terms of mindshare)?

So many things have changed since Seti@home. Not the least of which is Seti@home closing.

Protein folding was the next project to capture people's imaginations... and some clever people found better tools for that.

It was easy to lose a CPU in all the noise on your power bill. Maxing out a CPU could take a computer from 20 watts to 30. An AMD K6-2+ 550 MHz CPU drew under 20 watts, less than the CRT you didn't bother to turn off. You had a bunch of 60 W or 100 W incandescent bulbs to hide the CPU too.

Once a CPU required a big honking heatsink & could draw more than all the LED lightbulbs in your home combined, the math changed & people had much more effective options when they wanted to donate $20 or $50 a month.

I think the culture has changed too. Far fewer people are able to believe in something larger than themselves or feel good about contributing to it. More people feel exploited & unappreciated more often, & the average person's life is less financially secure than it was 20 years ago, with less hope & more dread. It's probably more common now that giving more than you take makes people feel weak & foolish instead of magnanimous & part of a greater whole.

... If you ever turn on an electric heater for comfort you might as well fire up the CPU instead & get some work out of it. 3 years back my building's boiler was on the fritz & I made my desk into something like a kotatsu, a little heated tent I put my legs into while I worked. It's super comfy & distributed computing helps warm your heart too.

As a side note: Is there a way to set up blockchain/crypto that does useful work? Speculators & charlatans turned what could be a useful tool with a meaningful niche into a force for ill, but a distributed ledger/database will find an application eventually. There are too many things that are too important for one person to have ultimate control over & you might as well be modeling every possible chemical reaction or something.

Upvote 8 (9 / -1)

I do remember that about 15 years ago, someone set up a private BOINC project to break the TLS encryption for an MMO EA had sunset back in 2004, a game called Earth and Beyond. It took a year, but the encryption was eventually broken, the packets that were PCAP'd in the last month of the game's history could be decrypted, and the developers of the emulator project were eventually able to rebuild the server. Pretty crazy story of some short-ish term gain for rebuilding a game that could have been lost to time.

Upvote 11 (11 / 0)

> No mention of distributed.net?

Thank you, exactly. I was reading through all the comments trying to find mention of distributed.net. I used that back when it came out in '97. My friends and I sneakernet'd blocks of hashes to the demo computers at CompUSA, where we worked. It was good fun. If I remember correctly, we were members of the ufies.org group, lol.

Upvote 6 (6 / 0)

Didn’t SETI@home get its radio data from Arecibo, which collapsed in 2020? Would make it hard to continue after that!

Upvote 13 (13 / 0)

The price of electricity is going through the roof along with the global temperature, and cryptominers and scalpers inflated the value of GPUs, which means I'm not going to spend cycles on the card I have on distributed computing. I'm going to use those cycles on my own projects, which include AI training.

There are multiple reasons why distributed computing is waning.

I do have a vast fondness for when I ran Folding@home on my PS3 for many, many hours.

Upvote 3 (4 / -1)

> Folding@home was kind of fun (well, relatively fun) back in the early 2000s. From what I remember, a lot of tech forums (like this one) created F@H teams and there was some friendly competition between some of the rival sites to see who could fold the most proteins. Plus, it was pretty neat to see the hardware some forum members were putting into the effort - things like dual-CPU systems back when that was a pipe dream for most anyone, a room full of old PCs scavenged from a school or whatever.
>
> I suppose those forum-based F@H teams were kind of the forerunner to the "strong leaders" and "social media presence" that the author talks about but, many of them died off as the sites themselves closed up shop and nothing came around to fill the void.

Ars used to run quite a few distributed computing teams - we even have a Forum for it, though it's been pretty quiet for awhile. I used to be quite active - but as others have noted, the idle efficiency changes in home computers makes for a noticeably bigger cost to participate nowadays.

https://meincmagazine.com/civis/forums/distributed-computing-arcana.18/

Upvote 12 (12 / 0)

> Folding@home was kind of fun (well, relatively fun) back in the early 2000s. From what I remember, a lot of tech forums (like this one) created F@H teams and there was some friendly competition between some of the rival sites to see who could fold the most proteins. Plus, it was pretty neat to see the hardware some forum members were putting into the effort - things like dual-CPU systems back when that was a pipe dream for most anyone, a room full of old PCs scavenged from a school or whatever.

Exactly the case for me too. Back then, building custom rigs was very entertaining as a hobby. Powering up an Asus A7M266-D with a pair of Athlon MPs for the first time, spinning SCSI drives in RAID 10 over LSI adapters. Good old days, and Folding@home provided burn-in and benchmarking amusement and competition.

It's just not the same anymore.

Upvote 10 (10 / 0)

> Maybe Folding at Home dropped off after it was announced in 2020 that Google's Alpha Fold had mostly solved the problem.

Which is a shame because AlphaFold didn't solve the problem. It offers a solution to an aspect of protein folding, but as far as I'm aware it does nothing to help with determining protein shapes as they are folding. AlphaFold will just present a final state of a single protein. Also there are good questions about AlphaFold's ability to handle proteins that don't align with its training data.

While I think there is a healthy discussion to be had about the efficacy of Folding@Home, I also think AlphaFold has given a false impression of what protein folding simulations are meant for and thus leads too many people to think that such simulations are no longer needed.

Upvote 8 (8 / 0)

HomersGhost

Wise, Aged Ars Veteran

I had SETI@home running on an unused workstation at a job. I kept the screen on and left it running 24/7. I thought it was super cool until the day came when I turned my monitor off and saw the resulting screen burn-in. Let's just say, it wasn't a very good screensaver.

Funny thing, no one at my work really cared.

Upvote 3 (3 / 0)

One thing that made distributed computing obsolete is the ability to “cheaply” spin up thousands of virtual computers with almost no cost in administration or maintenance. How cheap? Probably cheaper than all the maintenance and programming needed just to track your volunteers, distribute data, and gather and process the results.

Need a few thousand CPUs for a project? You can spin up an entire datacenter's worth of computers in minutes. Before large data centers and virtual environments, you’d be lucky to have a dozen machines to work on your project no matter how big your budget.

AI is a perfect example of this. Behind that chatty AI is an unbelievable amount of virtual distributed computing power.

Distributed computing is still taking place. We just don’t need to beg individuals for slices of CPU processing power any more.

Upvote 6 (6 / 0)

SirMrManGuy

Wise, Aged Ars Veteran

> I have run F@home for a while,
>
> But only in the winter,
>
> Great way to locally heat my office, while using that energy to also potentially do something useful.
>
> But I'm not running it in summer…

If you have a heat pump, using the waste heat from the processor is 3.5x less efficient/more costly. If using resistive heating, carry on.
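
To put rough numbers on the heat pump point above, here is a minimal Python sketch. It assumes the 3.5x figure is the heat pump's coefficient of performance (COP) and treats a CPU as a resistive heater with a COP of 1; the function name and the 10 kWh heat demand are made up for illustration.

```python
def electricity_for_heat_kwh(heat_kwh: float, cop: float) -> float:
    """Electricity needed to deliver `heat_kwh` of heat at a given COP."""
    if cop <= 0:
        raise ValueError("COP must be positive")
    return heat_kwh / cop


# Hypothetical demand: 10 kWh of heat into the office.
HEAT_NEEDED_KWH = 10.0

# A CPU (like any resistive heater) turns 1 kWh of electricity into 1 kWh of heat.
cpu_kwh = electricity_for_heat_kwh(HEAT_NEEDED_KWH, cop=1.0)

# Assumed heat pump COP of 3.5, taken from the "3.5x" figure in the post.
heat_pump_kwh = electricity_for_heat_kwh(HEAT_NEEDED_KWH, cop=3.5)

print(f"CPU/resistive: {cpu_kwh:.2f} kWh, heat pump: {heat_pump_kwh:.2f} kWh")
print(f"Ratio: {cpu_kwh / heat_pump_kwh:.1f}x")
```

So the CPU only comes out "free" as a heater when the alternative would have been resistive heat anyway.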

Upvote 7 (7 / 0)

> I can't be the only one here that sees Zooniverse and immediately thinks of Bob Fossil, Vince Noir, and Howard Moon, right?

You're not. And I know of at least one other Ars reader who would get the reference.

....

And that's why I don't like cricket.

Upvote 1 (1 / 0)

DeschutesCore

Ars Scholae Palatinae

> Ars used to run quite a few distributed computing teams - we even have a Forum for it, though it's been pretty quiet for awhile. I used to be quite active - but as others have noted, the idle efficiency changes in home computers makes for a noticeably bigger cost to participate nowadays.
>
> https://meincmagazine.com/civis/forums/distributed-computing-arcana.18/

You were there, how does this piece strike you? Are you even allowed to comment on it?

Upvote 5 (5 / 0)

Good times, just brought back memories of participating over at HardOCP. Like someone else mentioned, I gave up due to a combination of GPUs smashing CPUs' contributions in terms of points, and idle cycles not being as cost-free as they seemed to be in previous years. Also I always felt that certain leaders of key projects, like Vijay Pande, held the competitive aspect in some measure of contempt. There was rarely an effort to think through issues of fairness, etc. It was even hard to get updates from projects.

The whole BOINC organization was very, I thought, loose. Points between projects for example weren't very comparable, and the benchmark the program used was easy to cheat and didn't always give consistent results.

Not to even mention a feeling of waste. Because the work was distributed across the internet to machines of unknown provenance, some of them known to be running modified binaries to cheat the system, redundancy was pretty high. Eventually I started thinking the whole thing was an enormous waste: just throw some money at a public cloud or university system.
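
The redundancy described above can be sketched roughly as follows: the same work unit goes to several hosts, and a result only counts once enough of them agree. This is a simplified illustration, not BOINC's actual validator; the function names, the host labels, and the quorum of 2 are all assumptions.

```python
from collections import Counter
from typing import Dict, Hashable, List, Optional


def validate_work_unit(results: Dict[str, Hashable], quorum: int = 2) -> Optional[Hashable]:
    """Return the most common result if at least `quorum` hosts reported it, else None."""
    if not results:
        return None
    value, count = Counter(results.values()).most_common(1)[0]
    return value if count >= quorum else None


def hosts_to_credit(results: Dict[str, Hashable], canonical: Hashable) -> List[str]:
    """Only hosts whose result matches the canonical one earn credit."""
    return sorted(host for host, result in results.items() if result == canonical)


# Hypothetical reports for one work unit; host_c returned something different.
reports = {"host_a": "0x3fa1", "host_b": "0x3fa1", "host_c": "0xdead"}
canonical = validate_work_unit(reports)
credited = hosts_to_credit(reports, canonical)
print(canonical, credited)
```

The cost the poster is pointing at falls out directly: with a quorum of 2, every work unit is computed at least twice, so at most half of the donated cycles produce new science.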

Upvote 4 (4 / 0)

> On top of this, with the increased cost of electricity in many parts of the world, it is not an insignificant cost increase to run projects like this.
>
> Where I live our electric is on the fairly low side of cost. Something like 12-15c/kWh total (both generation+supply) and with my Ryzen 5800X based desktop machine I noticed a decent change in my electric bill going from just letting it run full power around the clock and idling to letting it sleep when not in use. I can only imagine the jump if it was maxed out 24/7.
>
> There was a time many moons ago I ran S@H and some other BOINC projects on my personal machines, but as much as I hate to say it (especially given projects involved with medical studies): It isn't worth it these days unless you're well off enough to afford the increased electricity usage.

Cost here in Florida is about the same, 11±c.

I always let all of the office computers run SETI. Just figured the cost was my contribution. Nowadays the calculation is different, though.
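
For a feel of how the calculation has changed, here is a back-of-the-envelope Python sketch. The 10 W case comes from the "20 watts to 30" comment earlier in the thread and the 12-15c/kWh rates from the quote above; the 150 W extra draw for a modern desktop under full load is purely an assumed figure, as is the function name.

```python
def monthly_cost_usd(extra_watts: float, cents_per_kwh: float,
                     hours_per_day: float = 24.0, days: int = 30) -> float:
    """Cost of drawing `extra_watts` above idle for the given hours and days."""
    kwh = extra_watts / 1000.0 * hours_per_day * days
    return kwh * cents_per_kwh / 100.0


# Late-90s style machine: about 10 W extra at full load, at 12 c/kWh.
old_pc = monthly_cost_usd(extra_watts=10, cents_per_kwh=12)

# Assumed modern desktop: 150 W extra at full load, at 15 c/kWh.
modern_pc = monthly_cost_usd(extra_watts=150, cents_per_kwh=15)

print(f"Old PC: ${old_pc:.2f}/month, modern PC: ${modern_pc:.2f}/month")
```

Under those assumptions the old machine costs well under a dollar a month to keep maxed out, while the modern one lands around $16, which is in the range of the monthly donation mentioned earlier in the thread.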

Upvote 1 (1 / 0)

As a college student, running a gravitational-wave analysis on my desktop computer while I was away from it was very cool. But laptops demand power efficiency, and running things while idle is not something you even think about. Consider the screen saver. Very popular in the '90s, but you wouldn't use a fancy screen saver that drains the battery on a laptop in the 2010s. My own experience is that moving to a laptop took me off the distributed computing projects, and I suspect that as screen savers left the public focus, so did these projects.

Upvote 2 (2 / 0)

> Pearson suggested that the center of attention has shifted to projects that more directly involve users

I recommend https://eyewire.org, a "game" to map neurons in the human brain. The results will be used to train artificial intelligence algorithms to map neurons.

Upvote 1 (1 / 0)

The article and some comments mention not wanting to wear down laptop batteries as one reason for the supposed decline in participation. But laptops can also function plugged in.

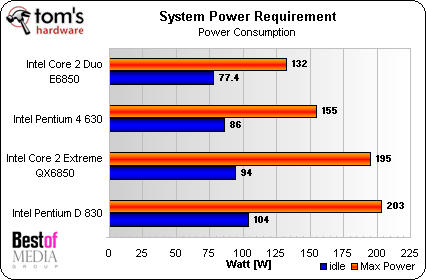

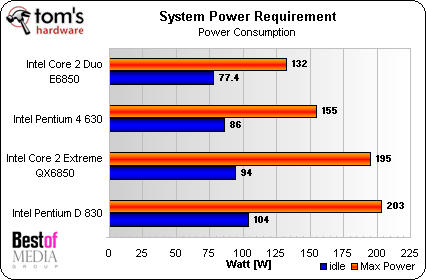

Regarding idle and load power consumption being equal in the past, I'm not sure about the Pentium 3 era, but by the mid-00s there was a difference:

Regarding idle and load power consumption being equal in the past, I'm not sure about the Pentium 3 era, but by the mid-00s there was a difference:

Upvote 3 (4 / -1)

I ran Folding@Home briefly when I was in college. I quit because I didn't have much in the way of computing resources to contribute, and I didn't feel my paltry contribution was worth the trouble.

Maybe I should get back into it, but I suspect it still holds true. I have an M1 Max laptop and a 10-year-old gaming PC with a 7-year-old GPU. The laptop, at least, is no slouch as a personal workstation, but for doing serious number crunching? I suspect it would still not really be worth me leaving it running while I'm at work.

Upvote 3 (3 / 0)

Seti@Home was probably oversold a bit in the "your PC might find the Wow! signal" regard. That wasn't really the design of the experiment, but it certainly got the general public interested in distributed computing. There was quite a bit of participation here at Ars, as Tom points out. But principal researchers want to move forward in their careers, and it's difficult to have a "forever" project sucking up your time, particularly when you have a huge and possibly expensive back-end effort to sift through results and do follow-on study.

Folding@Home is interesting in that researchers and projects come and go, so there is always something new somebody is studying. Einstein@Home has new telescope data they can analyze (Fermi LAT, Meerkat) and of course LIGO data for gravitational waves.

Upvote 5 (5 / 0)

> You were there, how does this piece strike you? Are you even allowed to comment on it?

As others have mentioned: Some of it strikes me as more the "Breathless Wired article" than the Ars coverage I rely on. "An incalculable amount of PC cycles and electricity wasted for nothing" in particular.

It's kind of like saying Edison's first 25,000 attempts at a practical incandescent light bulb were "wasted for nothing" - which is completely untrue. It proved that those 25,000 approaches didn't work well enough - and by analyzing better and worse results, that would have guided toward better experiments.

SETI worked on and eliminated an immense amount of collected data. Finding nothing is actual evidence - if there were a nearby radio-spewing civilization, it would have been found.

...and (per Wikipedia) the project didn't end in 2020, just the distributed computing aspect. They then did data analysis and have gotten time on a 500 meter radiotelescope to examine the top 100 signals found.

https://en.wikipedia.org/wiki/SETI@home

Looking at the SETI timeline, I must have been in right at the beginning, because I remember installing it on the 4 PCs at the first site I worked on for a company, and that site was moved in July 1999.

Upvote 10 (10 / 0)

Llampshade

Ars Praetorian

I used to have a desktop or two running 24x7 at home. Now I primarily use my phone for personal things. I'm definitely not running those types of things on a device for which I prioritize battery runtime.

If I have a task that will take massive amounts of compute and is highly parallelizable, I'm going to go to the cloud. It might cost more than having people volunteer time on their home computers, but I'll also finish the job faster and more consistently. Today's cloud environment didn't exist when the SETI project started. If they started with the compute environment they have today, it might be much more efficient to run a crowdfunding campaign to run the job in the cloud.

Academics at large universities have much better access to supercomputers these days, too. Anyone can write a grant application to gain access to compute time on some of the world's largest systems. If you have a shot at that, you can get the research done quickly enough to get your PhD before retirement age. If you have an opportunity to attend the Supercomputing conference (in Denver in November this year) you can get some good insight into what these machines are capable of... and how almost anyone can gain access to one with a good enough justification. Machines of this nature have been around for a few decades, but the size and number of them have grown massively in recent years. It's not all that difficult to program for them either. Most systems run Linux and use a variety of open source libraries for parallelization.

With readily accessible and relatively cheap cloud computing and a decent shot at using a supercomputer, why waste time trying to convince people to run your software on their personal hardware?

Upvote 4 (4 / 0)

jeromeyers2

Ars Scholae Palatinae

I'm surprised there was no mention of the Great Internet Mersenne Prime Search (GIMPS), which I believe started in 1996 and was my first exposure to and participation in a distributed computing project. And it succeeded in identifying many of the largest prime numbers ever discovered: https://www.mersenne.org/report_milestones/

Upvote 5 (5 / 0)

I used to run seti@home, but I think the point I stopped was when they discontinued the screensaver! My thinking was that while I didn't get much from running seti@home beyond a warm fuzzy feeling, the screensaver at least made it feel like I was getting to watch the action whenever I was near my idle PC. It was a small thing but it somehow made it fun enough to keep going.

Here's a different idea to keep the dream of finding aliens with your PC alive: take the software that seti@home used to analyze signals and alter it to run offline on any Linux/Windows based machine (ideally an rpi) with 1 or more Software Defined Radios. Then, if the software finds something interesting, it can send the sample in to SETI for further analysis, either automatically or after alerting the user to authorize it.

I have a bunch of Raspberry Pis (various models) that aren't in active use that I would love to use for something like this. I've been thinking about doing the meteor sky watcher camera project but I don't have any of the recommended cameras at the moment. I do have 100% of the hardware to do SDR based SETI work, including some directional antennas and some sat dishes that aren't doing anything at the moment. Give me the software and I'd gladly run it on my own!

Upvote 0 (0 / 0)

I got more and more hooked on Folding@home over the course of 2008-2015. First it was just my PS3, then I started adding in my laptop and some older desktops at my parents' house that were still effective. It led me into building my own desktops, which has continued to be fun.

When I was in college, it was great because I wasn't paying for the power in the dorms, and my parents were okay covering the costs at their house. When I graduated, hoo boy was I running up the electric bills, like $400/month, but I accepted the price as the cost of my hobby. I stopped once I lost my job and those bills were no longer affordable. I went through several years of tenuous finances so I never started back up, and it mostly left my mind.

At one point I was one of the top 100 all-time. Who knows where I am since it's been so long, surely much, much lower.

F@h did lead me to one of my most cherished memories, which was shoving an insane server into a regular desktop case. Back in I want to say 2012-2013, there was a class of projects known as 'bigadv'. They were very large molecules that the GPUs of the time weren't quite powerful enough to handle, but multi-multi core CPUs could, and they were rewarded accordingly. I was willing to spend ~$4000 on a quad-CPU server (each CPU having 4 cores/8 threads), which was basically the most powerful thing one could assemble on a single motherboard; it got something like 1 million points per day when the best GPUs of the time could only manage like 150k. Since I didn't want to buy an actual server rack for my apartment, I bought the biggest desktop tower I could find and drilled my own standoff holes to mount the motherboard. Wasn't the prettiest job, but it worked!

I retired that machine once bigadv ended, since on all the regular CPU projects it wasn't cost-efficient with respect to points. (There was a lot of internal controversy over it, with some smaller users feeling like their contributions weren't worth the cost and that only the handful of whales who could afford bigadv-class machines were paid attention to, and then those who had sunk significant money into bigadv machines being upset once it was discontinued, making their investments a lot less valuable.) I kept it in closets for a while; I was kind of sad when I tried to boot it up a couple years ago and found it wouldn't POST any more.

Upvote 2 (2 / 0)

I run Folding at Home. As we have solar panels, the electricity used is very cheap. In winter I sometimes use my PC as a source of heat for the study. When it's warmer (I live in southern Australia) then there's more sun and our panels generate excess electricity, so the cost to me is the slight reduction in revenue from what I would get if I fed that excess back into the grid. Feed in tariffs in Australia have fallen substantially. (And to deal with the heat in the study I open the window.)

That said, I agree that getting some feedback every now and again about what the project is achieving would be encouraging.

Upvote 1 (1 / 0)

Team Lamb Chop and Team Prime Rib...many, many fun years and they didn't seem "wasted" to me.

I remember the names and am still pleased to see them occasionally post. A great community.

Upvote 2 (2 / 0)

I feel like this article completely misses the forest by not even mentioning the rise of crypto mining, or cloud computing. Crypto found a way for people to (briefly) monetize those CPU cycles that were previously dedicated to distributed computing. And cloud computing (especially with the massive growth of highly dense GPU compute cores) gave a lot of projects much cheaper access to massively powerful computing capacity. Sure it's not the same scale as F@H, but the total cost of just buying dedicated compute from Amazon or Azure diverts a lot of projects that would've used distributed computing 2 decades ago.

Add on to that the more recent performance jump in high end consumer CPUs and GPUs and you have home labs matching the performance of full dc networks from 15 years ago.

Upvote 5 (5 / 0)

Did SETI/FOLD/GENOME until about 2008, when I finally decided that dual-socket-era workstations made my pad a hot sauna. First dual PII/450 MHz, then dual XPs pencil-modded into MPs (2400?), on custom H2O until the radiator blew a hole.

We haven't found a real ET, nor a cure for all cancers. At least I did some compute in my early days. These were fun projects, but even with my solar panels, the heat generation of today's power behemoths in the 1 kW range isn't fun to have now. We have supercomputers that probably equate to a good-sized state's worth of PCs to do it, but those are committed to stuff like weather, so WTF? I don't feel the desire to commit my power even if my solar provides freebie power.

Upvote

1

(1

/

0)

Andrew Norton

Ars Tribunus Militum

One of the distributed projects that had an Ars team [team Atomic Milkshake] was the muon1 Distributed Particle Accelerator Design project. Unlike most projects, where they assign you a work unit, you process it, and you send it back (because they're brute-force projects), muon1DPAD was evolutionary, because the phase-spaces were so large. For comparison, RC5-72 has been going on for 20 years, does 300B+ keys/second on average, and is only about 5% done, with roughly 4.7×10^21 (2^72) keys total. With muon1 we're talking 10^1200 possible simulations, each taking minutes. So it had to be evolutionary. The bonus is that it was interactive: you could create your own designs and put them in, because odds are no one else would have tried them anyway, so each one is another random data point to potentially evolve from.
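To put the brute-force numbers in perspective, here is a rough back-of-the-envelope sketch. It assumes a steady 300 billion keys/second for the whole 20 years (the real rate has varied) and uses the fact that RC5-72 has a 72-bit key, so the keyspace is 2^72, or about 4.7×10^21 keys. The variable names are just for illustration.

```python
# Back-of-the-envelope check of an exhaustive RC5-72 key search.
SECONDS_PER_YEAR = 365.25 * 24 * 3600

keyspace = 2 ** 72      # RC5-72 uses a 72-bit key
rate = 300e9            # assumption: constant 300 billion keys/second
years_elapsed = 20      # assumption: taken from the post above

# Keys tested so far, and the fraction of the keyspace that represents.
keys_tested = rate * SECONDS_PER_YEAR * years_elapsed
fraction_done = keys_tested / keyspace

# How long a complete sweep would take at the same rate.
years_for_full_sweep = keyspace / rate / SECONDS_PER_YEAR

print(f"keyspace: {keyspace:.2e} keys")
print(f"fraction searched after {years_elapsed} years: {fraction_done:.1%}")
print(f"years for a full sweep at this rate: {years_for_full_sweep:.0f}")
```

Under those assumptions it comes out to about 4% searched and roughly 500 years for a full sweep, which is in the same ballpark as the "only 5% done" figure. A space of 10^1200 candidates is not something you can sweep at all, which is why an evolutionary search made sense there.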

As a result, almost 50 sub-projects (each with a phase-space of at least 10^100) were done over the years, and I went and created a video of the best result for each of them.

Alas, in 2015ish, the UK Government cut funding for the RAL's Neutrino Factory project, which this was part of, and so the project wound up.

Anyone interested in some of the science behind it though, I did do a talk on it in 2011, plus there's a ton of papers and conference posters on it. (the pre-2015 stuff here)

K`Tetch (guy who wrote a bunch of the press releases and media stuff for it)

Upvote

7

(7

/

0)

Even before the Covid era the power bill for running folding hardware all the time was high. That's the main reason why I got out of the game (team Maximum PC represent) in the early 2010s.

Upvote

0

(0

/

0)

Two things:

I used Seti@Home in or around 1999 until F@H came online, then I ditched Seti and have been a Folder ever since. However, I live in Texas, and with daytime temperatures reaching 106°F and nighttime temps not getting below 83-84°F (at best), it's just too doggone hot to fold anymore. In the colder months, it's great, but right now? Not so much.

Also, though it's gotten better over the years, the F@H website & software installs weren't really geared towards ease of use. They did a decent job of explaining the project in layman's terms, but not so much when it came to setting up & running the software. To me, it seemed to always require just a little bit of advanced knowledge that a novice user may not know.

I remain dedicated to the F@H project, but only when it cools down some.

Upvote

5

(5

/

0)

I'm at 93 "years" donated to World Community Grid since '05 (and zero to crypto).

I have been a contributor to the World Community Grid since 2004 and have (according to their statistics) contributed over 22 years of computing results in varying projects, such as Mapping Cancer Markers, Open Pandemics, Africa Rainfall Project, and Help Stop TB.

I am proud to have assisted in important research on these topics and have urged others to help as well. I understand that there have been many distractions, not least among them the urge to mine cryptocurrency, a pursuit I have no intention of following.

It remains to be seen if AI can make any significant contribution to similar research projects. In the meantime, I am glad to devote some of my resources to seeking solutions.

The research updates have improved under the new stewardship of Krembil. Here is a recent "Mapping Cancer Markers" update:

https://www.worldcommunitygrid.org/about_us/article.s?articleId=794

Upvote

2

(2

/

0)

Andrew Norton

Ars Tribunus Militum

D.net was OK. I worked with them for a bunch of years, and even pointed out the flaw in their initial OGR runs. However, when they got bought up by United Devices and moved to Austin, everything changed, especially their attitude (to the point that the founder's wife left him, and they were a close couple before).

Thank you, exactly. I was reading through all the comments trying to find mention of distributed.net. I used that back when it came out in '97. My friends and I sneakernet'd blocks of hashes to the demo computers at CompUSA, where we worked. It was good fun. If I remember correctly, we were members of the ufies.org group, lol.

That's what made me start with muon1. I still occasionally run into people from the old dnet IRC channel, though.

Upvote

1

(1

/

0)

Shadow Lord

Smack-Fu Master, in training

Also SETI@Home was far from the first (probably GIMPS - Mersenne Primes), or even the first big one (distributed.net), and there were others that predated SETI@Home.

I remember the early days of GIMPS, when one had to periodically e-mail the results your PC had generated to the founder, George Woltman. It was nice that this sometimes resulted in brief little exchanges with him, but fortunately the whole process was automated soon after and could actually scale. Interesting to think of that moment being the birth of true distributed computing, or at least the "first well-known and successful project" (GPT's words).

Upvote

2

(2

/

0)

whydoihavetoexplainthis

Smack-Fu Master, in training

Distributed computing is a booming industry, employing thousands, if not tens of thousands, of software engineers.

Unfortunately for your readers, neither your writers, nor your editors, nor apparently the majority of people commenting on this article actually know what distributed computing is.

Distributed computing is the practice of computing on distributed systems, and what you have described here (crowdsourced computing) is only a tiny subset of the field.

Upvote

-9

(1

/

-10)

Ars Technica had a Folding@home team too, IIRC; I was on the Beyond3D team, though.

Folding@home was kind of fun (well, relatively fun) back in the early 2000s. From what I remember, a lot of tech forums (like this one) created F@H teams and there was some friendly competition between some of the rival sites to see who could fold the most proteins. Plus, it was pretty neat to see the hardware some forum members were putting into the effort: things like dual-CPU systems back when that was a pipe dream for most anyone, or a room full of old PCs scavenged from a school or whatever.

I suppose those forum-based F@H teams were kind of the forerunner to the "strong leaders" and "social media presence" that the author talks about, but many of them died off as the sites themselves closed up shop and nothing came around to fill the void.

Upvote

1

(1

/

0)

Bad Monkey!

Ars Legatus Legionis

I used to run seti@home, but I think the point I stopped was when they discontinued the screensaver! My thinking was that while I didn't get much from running seti@home beyond a warm fuzzy feeling, the screensaver at least made it feel like I was getting to watch the action whenever I was near my idle PC. It was a small thing but it somehow made it fun enough to keep going.

Here's a different idea to keep the dream of finding aliens with your PC alive: take the software that seti@home used to analyze signals and alter it to run offline on any Linux/Windows-based machine (ideally an rpi) with one or more Software Defined Radios. Then, if the software finds something interesting, it can send the sample to SETI for further analysis, either automatically or after alerting the user to authorize it.

I have a bunch of Raspberry Pis (various models) that aren't in active use that I would love to use for something like this. I've been thinking about doing the meteor sky watcher camera project but I don't have any of the recommended cameras at the moment. I do have 100% of the hardware to do SDR based SETI work, including some directional antennas and some sat dishes that aren't doing anything at the moment. Give me the software and I'd gladly run it on my own!

What made S@H a legit scientific project is that all their signal data came from legit radio observatories, where the researchers were assured of the provenance of the original data and had the ability to prove its veracity. Even then, they were sending the same work unit out to multiple participants, and only using the results to find interesting signals that would be flagged for validation and further study. Having randos collect radio data from who knows what equipment, with who knows what interference, that can't be independently validated is not how science is done. 100% you're going to get assholes manipulating either the signal or the data.
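For anyone curious what "sending the same work unit out to multiple participants" buys you, here is a minimal sketch of the idea. It is not the project's actual validator: the function name, the quorum of 2, and the result strings are all made up for illustration, and a real validator would compare numeric results within a tolerance rather than by exact match.

```python
from collections import Counter
from typing import Hashable, Optional, Sequence


def validate_work_unit(results: Sequence[Hashable], quorum: int = 2) -> Optional[Hashable]:
    """Return the result that at least `quorum` participants agree on, else None.

    Hypothetical helper: a result is only accepted once enough independent
    participants have reported the same answer for the same work unit.
    """
    if not results:
        return None
    value, count = Counter(results).most_common(1)[0]
    return value if count >= quorum else None


# Three hypothetical participants report on the same work unit.
honest = validate_work_unit(["spike@1420.4MHz", "spike@1420.4MHz", "noise"])
disputed = validate_work_unit(["spike@1420.4MHz", "noise", "rfi"])

print(honest)    # the result two participants agreed on
print(disputed)  # None: nobody agrees, so the unit would need to be sent out again
```

The point is that a single faulty or dishonest machine cannot get a result accepted on its own. That protection disappears when every participant supplies their own unverifiable input data, because there is no second copy of the same data to compare against.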

Upvote

5

(5

/

0)