
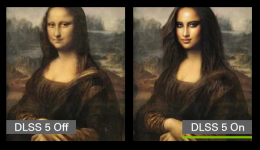

Gamers react with overwhelming disgust to DLSS 5’s generative AI glow-ups

- Thread starter JournalBot


That is intentional and why they used ultra-low settings on the before image and ultra slop on the after image.

Hmm, I don’t know if I’m the odd one out, but I quite liked the after result! Is it still a bit artificial? Yes, quite, but miles better than the flat textures in the before images?


A single 5090 by itself can already get very very close to the detail shown on a single 5090 when the settings aren't intentionally set to all of the lowest possible settings.

I very much doubt that two 5090s couldn't get damn close without DLSS5 to what they've shown here.

It's hard to overstate the degree to which Nvidia ratfucked the comparison in the first place. And yet the generative AI still spectacularly failed compared to the worst output the game allows for.


I'm in the minority here, but I really like it—really nice character improvements with an obvious limitation: the AI doesn't improve the character’s motion.

Now it's even more important to get character rigging right. If you have limited facial and body movements, the AI isn't (currently) able to smooth that out. Some people aren't able to handle the more realistic character with the unrealistic marionette motions [they get the ick].

Interesting to see that the way the hair was stylistically created on the male character came through on the up-do, and showed that it plain looked weird. It was weird on the low-res model, and more so on the high-res recreation.



KalemLeone

Seniorius Lurkius

Which LLM did you copy-paste this blatant glaze from?

I think we're having a "blue-black dress, white-gold dress" moment here, where people actually see different things in an image. I'm in the minority of commenters - I think the tech outputs impressively photorealistic immersive worlds.

Artist Direction: I think there's a massive void between the people that see the tech change artistic direction and those who don't. I don't get what anybody is saying about the change in artistic direction. I will admit that I'm not a terribly emotional person, so maybe my threshold for detecting subtlety and nuance in artistic direction is simply higher than the "against" crowd. Also - the games we're seeing here are the digital equivalent of commodity products. Of course they're going to look generic. They're generic like the show Friends (or whatever the kids are watching these days), or popcorn movies, or even like the "artwork" prints that are available for purchase at Target. I wouldn't expect the artistry to be stylized like an indie game.

Beautified Faces: I'd liken the faces on the characters to AAA blockbuster movie actors, and not Instagram models? Maybe I'm showing my age. I know that for those games where the developers are targeting the most cinematic/realistic imagery, I would turn this on and happily be immersed in the fidelity. It looks like what the developers would have aimed for with their design had consumers had the technology to compute it in real time: an interactive cinematic experience. I love games like that - the Tomb Raider reboot made me feel like I was in an action movie. That's not what all games are designed to do - some are very stylized (Lost Records, What Remains of Edith Finch, Tearaway, The Walking Dead, Borderlands), and in that case I'd expect that I wouldn't use it. I am curious what it would do for games like A Plague Tale, where the characters (and, really, the world) are supposed to have dirt caked on their faces. I would hope it wouldn't clean it up and would just make it look more realistic.

Steep Hardware Requirements: Digital Foundry has a good video about the tech. They mention that Nvidia has it running on a single card in their labs. That's what they're hoping to achieve with this generation. Even if not this generation, I'd expect the next.

Glitches: (like the guy with weird eyes in Oblivion) It's a tech demo! Of course there will be glitches. But for everything it gets right, it's impressive. This feels like reaching.

The DLSS 5 Name: It's stupid (to keep calling it DLSS when it's doing way more than super-sampling). I happily concede this point.

It's optional. You don't have to use it. Just like you didn't have to comment on the article. Or you didn't have to read the article. Have you ever admonished someone for posting they didn't like an article on here? Well, here ya go. You don't like it, don't use it. Stop pooping on the parade of the people that are excited for this!


Looking at the DLSS5 results, what stands out to me is that humans are thrown into focus and everything else is washed out. Is that the case for all use of this, or is the AI drawing a conclusion that this particular game is person-focused?

Because other bits of what look like very intentionally created artwork (brightness of various lights, shapes of signs and lettering, etc.) have been subtly modified in a way that draws attention away from them, when I'd guess the game creator wanted users to NOTICE those details.


That is clearly not what is being done here.

Each game needs to be trained on what the characters would ideally look like if rendered at some very high fidelity, and then the model reconstructs it from a lower-res base.


The rocket didn’t blow up. It had a very fast fire.

Hate it or love it or meh, everyone seems to be misunderstanding what it is.

It's not an "AI filter" or any filter at all - that wouldn't create consistent or predictable characters.

Each game needs to be trained on what the characters would ideally look like if rendered at some very high fidelity, and then the model reconstructs it from a lower-res base.

Now, either that is the artist's vision, or it's some human's interpretation of an artist's vision ("here, let me upscale this model with more detailed textures"), or the tech isn't great and it's exaggerating what it's supposed to be reconstructing.

What it's not, is an AI hallucination. It's some pipeline gone wonky.


The ‘murk’ in the Resident Evil scene is rain, fog and overcast lighting. It's rendered in low detail because Nvidia intentionally used settings that don’t render the volumetric fog and rain.

Sorry, but I like the look of DLSS5. I've NEVER been a fan of the "murk" that substitutes for artistic detail in many science fiction movies and games. If artists are offended, they can suppress it, according to NVIDIA's statements. I suppose there will be a toggle for users, as well.


Ratfucked, now there's one I haven't heard in ages. Thank you for reminding me of this particular colloquialism. I shall use it in measure and responsibly.

A single 5090 by itself can already get very very close to the detail shown on a single 5090 when the settings aren't intentionally set to all of the lowest possible settings.

It's hard to overstate the degree to which Nvidia ratfucked the comparison in the first place. And yet the generative AI still spectacularly failed compared to the worst output the game allows for.


Wait, so why are you upset?

Amazing what gets 20-something males living in their parents’ basement upset.


That depends which blur you're talking about.

Looking at the DLSS5 results, what stands out to me is that humans are thrown into focus and everything else is washed out. Is that the case for all use of this, or is the AI drawing a conclusion that this particular game is person-focused?

Because other bits of what look like very intentionally created artwork (brightness of various lights, shapes of signs and lettering, etc.) have been subtly modified in a way that draws attention away from them, when I'd guess the game creator wanted users to NOTICE those details.

If you watch the video, you can see a blur behind any moving object. That's a normal DLSS upscaling artifact. They did get pretty good at getting rid of the ghosting for the spatial upscaling versions, but the framegen version definitely still has it, so that could be part of it. DLSS 5 likely makes that worse.

There's also the fact that they likely aren't using the same settings for DLSS off and on. Wouldn't at all be surprised if whoever cooked up these comparisons disabled depth of field for the "before" samples.


MilkyBarKid

Ars Praetorian

My background is in AI. I don’t think all AI is shit, but the AI that isn’t is generally tailored to the problem domain, using appropriate data sets and with a clear understanding of what it’s meant to do and how to manage inaccuracy. Not shoveled in any old place it can go and with all the defects hand waved away. What was it, 19 out of 20 rollouts fail? Maybe the reason people don’t like it has something to do with it consistently not living up to expectations - that it’s often functionally shit as well as all those other ways.

I think there's a more probable explanation.

AI is shit, but it just happens to be aesthetically pleasing to you. Your position is a minority position, but you are not completely alone, which means your preferences are being reinforced. Meanwhile, a great diversity of people comment on Ars, with all different backgrounds in music, art, computer science, "real" science, even the law. And the majority consensus amongst that cohort is that AI is shit. Aesthetically, morally, ethically, environmentally, economically shit.

Also, is it opt-out or opt-in? How does it work for games that are already made? Edited to add: in the video linked upthread, they point out that users may go around the developer’s intent to enable it for games that don’t ship with support. I can understand why that would make developers unhappy.

Citation needed


Don't put words into people's mouths.

Turmoil because artificial creations look artificial in a different way - cuz gamers thought the original digital creations were "real"... the humanity


gruberduber

Wise, Aged Ars Veteran

I think it's an impressive demo, with the DLSS 5 versions looking much more photorealistic than the originals. I still have a lot of questions about how well it would work in the real world, but to me these cherry-picked examples look great. I know this is highly subjective, but I'm genuinely puzzled as to how so many people - general concerns about AI aside - hate the results from these demos so much.

Part of it is that people won't, as you say, put "general concerns about AI aside". They think the damage AI is doing to, well... everything is not worth any of this shit. Which I think is fair.

Many of us are also angry that we can't even afford to buy RAM, hard drives, or graphics cards because it's all too expensive because of shit like this too.

But I honestly think the main difference of opinion is something I've come to think of as "art blindness", for want of a better description.

Some people are colour-blind or tone deaf. Certain colours and notes just don't register in their brain. Some people (like me) can't taste much, so complex flavours are lost on them. Some are face-blind or don't process subtle expressions... None of that is criticism or moral judgement, we're all different, and it can have benefits too.

So, when a lot of people look at art they simply don't see the things that others do. The use of atmospheric perspective to evoke mood is lost on them. Subtle differences in facial expression don't register. They don't notice the way composition is used to subtly draw the eye, or the way colour palette elicits emotion. They can't tell a more unique face from a generic one. None of that is criticism, people are just different. But when you can't see this stuff, you don't realise you're missing it, so other people just seem pretentious or upset over 'nothing'. One commenter here even stated 'games are not art', which proves my point, but boggles my mind.

In this instance, many of us can see a lot of stuff in the original image which is simply lost with this tech turned on. For example, the first face expresses a lot of subtle things about the character and is memorably unique. The second looks like every Instagram photoshoot smashed into one - it loses all nuance and says nothing.

AI sands off the unique corners of things, and makes it generic. That's literally all it can do, by design. All the subtler quirks and details get blasted away. But if you didn't register what's lost, you don't see the problem.

And even that would be fine, but this will not be something you can just 'turn off if you don't like it' as people are claiming. If people are going to turn on a filter that smears away all the unique touches and art direction, why would developers devote time to including it in the first place? The underlying art will be greatly impoverished if this becomes commonplace.

While it's fine that not everyone can tell what is being lost here, many of us can. Add that to a backdrop where everything seems to be getting dumber, shitter, more generic, and more corporate every day... tech which turns art with a personal touch into generic Instagram photos is the last thing we want.


Good, AI is only worthy of being despised.

Thanks for the editorial, Kyle, on how you emotionally despise AI.


MilkyBarKid

Ars Praetorian

Some of this comes down to training, too. If you learn more about art, you have a more critical eye and will pick up on issues that other people might let slide.

Part of it is that people won't, as you say, put "general concerns about AI aside". They think the damage AI is doing to, well... everything is not worth any of this shit. Which I think is fair.

Many of us are also angry that we can't even afford to buy RAM, hard drives, or graphics cards because it's all too expensive because of shit like this too.

But I honestly think the main difference of opinion is something I've come to think of as "art blindness", for want of a better description.

Some people are colour-blind or tone deaf. Certain colours and notes just don't register in their brain. Some people (like me) can't taste much, so complex flavours are lost on them. Some are face-blind or don't process subtle expressions... None of that is criticism or moral judgement, we're all different, and it can have benefits too.

So, when a lot of people look at art they simply don't see the things that others do. The use of atmospheric perspective to evoke mood is lost on them. Subtle differences in facial expression don't register. They don't notice the way composition is used to subtly draw the eye, or the way colour palette elicits emotion. They can't tell a more unique face from a generic one. None of that is criticism, people are just different. But when you can't see this stuff, you don't realise you're missing it, so other people just seem pretentious or upset over 'nothing'. One commenter here even stated 'games are not art', which proves my point, but boggles my mind.

In this instance, many of us can see a lot of stuff in the original image which is simply lost with this tech turned on. For example, the first face expresses a lot of subtle things about the character and is memorably unique. The second looks like every Instagram photoshoot smashed into one - it loses all nuance and says nothing.

AI sands off the unique corners of things, and makes it generic. That's literally all it can do, by design. All the subtler quirks and details get blasted away. But if you didn't register what's lost, you don't see the problem.

And even that would be fine, but this will not be something you can just 'turn off if you don't like it' as people are claiming. If people are going to turn on a filter that smears away all the unique touches and art direction, why would developers devote time to including it in the first place? The underlying art will be greatly impoverished if this becomes commonplace.

While it's fine that not everyone can tell what is being lost here, many of us can. Add that to a backdrop where everything seems to be getting dumber, shitter, more generic, and more corporate every day... tech which turns art with a personal touch into generic Instagram photos is the last thing we want.

This is definitely also the case for AI music. If you know about music structure, it makes Suno’s issues with structuring a song properly more glaring.


It may be because the technology being used quite probably is one that was mostly developed for faces:

Looking at the DLSS5 results, what stands out to me is that humans are thrown into focus and everything else is washed out. Is that the case for all use of this, or is the AI drawing a conclusion that this particular game is person-focused?

Because other bits of what look like very intentionally created artwork (brightness of various lights, shapes of signs and lettering, etc.) have been subtly modified in a way that draws attention away from them, when I'd guess the game creator wanted users to NOTICE those details.

View: https://www.youtube.com/watch?v=KnozAHKTz9o

A while ago I saw another video that I can't find anymore where somebody talked about it with nVidia engineers, and they were "sad" that the original reveal (which now has comments turned off...) was poorly received, and were working hard to avoid that in future presentations... Didn't work out so well in the end, it seems.


Where did you get that information?

Hate it or love it or meh, everyone seems to be misunderstanding what it is.

It's not an "AI filter" or any filter at all - that wouldn't create consistent or predictable characters.

Each game needs to be trained on what the characters would ideally look like if rendered at some very high fidelity, and then the model reconstructs it from a lower-res base.

Now, either that is the artist's vision, or it's some human's interpretation of an artist's vision ("here, let me upscale this model with more detailed textures"), or the tech isn't great and it's exaggerating what it's supposed to be reconstructing.

What it's not, is an AI hallucination. It's some pipeline gone wonky.


It's not free performance, it's more frames (and even then not equivalent to regular frames), which is not the same; things like input will run at the original FPS.

While Frame Gen can be a little wonky in some games, for the most part it's free performance.


That's not how it works, and it's not how it should work. These AIs cannot know what surfaces the rain is supposed to be hitting, or how they should be rendered. And it's very doubtful that they can know with enough certainty when it's raining and when it's not. The developers must be responsible for those things; it's not that hard.

Or the original image clearly shows it's raining and yet the textures shown for the surfaces that rain is hitting do not reflect that. I know how to make textures look wet.


That was my point, which I think was missed. I as a developer don't get to say "no" to this. I can, according to Nvidia, suppress it in some places. I don't want to suppress it, I want to disable it so the artistic intent of my game is preserved. Nor do I have time to go through and figure out how to suppress it, because I'm busy developing a game that Nvidia is determined to make into slop for anyone that has a 5090. I think the "yay photorealism" crowd is missing that Nvidia is taking something I have made, with tears and sweat and deliberate artistic choices, and changing it without my consent or input. That's not cool.

Also, is it opt-out or opt-in? How does it work for games that are already made? Edited to add: in the video linked upthread, they point out that users may go around the developer’s intent to enable it for games that don’t ship with support. I can understand why that would make developers unhappy.


DaveSimmons

Ars Legatus Legionis

Samsung should sue Nvidia for infringing their patent on phone camera faked moon photos.


It may be because the technology being used quite probably is one that was mostly developed for faces:

View: https://www.youtube.com/watch?v=KnozAHKTz9o

A while ago I saw another video that I can't find anymore where somebody talked about it with nVidia engineers, and they were "sad" that the original reveal (which now has comments turned off...) was poorly received, and were working hard to avoid that in future presentations... Didn't work out so well in the end, it seems.

It seems really apparent that the AI half of Nvidia is desperately trying to invade the gaming half. And just like the rest of AI Nvidia, they don’t seem to understand at all that nobody likes them forcing their way into various things.

AI can be a useful tool. But AI Nvidia doesn’t understand that. They think they have a magic product that everyone must love and just its presence makes everything better. And they have zero understanding of what creative license is, much less aesthetic style and mood.

This is a terminal issue if they don’t get a handle on it. We’re now at the place where it’s going to choke the gaming product to death.


It may be because the technology being used quite probably is one that was mostly developed for faces:

View: https://www.youtube.com/watch?v=KnozAHKTz9o

A while ago I saw another video that I can't find anymore where somebody talked about it with nVidia engineers, and they were "sad" that the original reveal (which now has comments turned off...) was poorly received, and were working hard to avoid that in future presentations... Didn't work out so well in the end, it seems.

I found this presentation video, and while I didn't hear the comment you mention (I didn't watch the whole thing), the video does at least start with the engineers calling the tech "experimental," seemingly to hedge against criticism. Still, comments are OFF for this video as well:

View: https://www.youtube.com/watch?v=VsEExFQD-ps


Don't put words into people's mouths.

It’s too late, I have already depicted you as the soyjak and myself as the chad


Yeah, someone posted a screencap before, but I found a video of gameplay of Resident Evil Requiem on an RTX 5090 with "Max Settings", and the player model looks a lot better than in the Nvidia comparison.

A single 5090 by itself can already get very very close to the detail shown.... when the settings aren't intentionally set to all of the lowest possible settings.

View: https://www.youtube.com/watch?v=_YLpaWRjx6c

Note that the video says "DLSS On," but I believe that's DLSS 4.5, so none of the new gen AI image manipulation.


There have actually been a number of nuanced takes about DLSS 5, including from people who have been fans of past DLSS versions and who have been pro-AI in certain aspects (again, like previous DLSS versions that just did upscaling and/or frame interpolation), that think this is just bad, and a step in the wrong direction.

The blindness cuts both ways. If you are a part of a community that views AI as the root of all evil and deems everything touched by AI as "slop", then of course you are going to be incapable of forming an unbiased opinion about new AI applications. It's really hard for me to accept your explanation that the anti-DLSSv5 folks are somehow espousing a more cultured and nuanced view when their opinions are so extreme and inflexible.

Is it groupthink, or consensus? After all, the upvote button exists for all those posts as well. If the "average person" likes it, why isn't that showing in the upvote count?

Just look at the way people are being downvoted to oblivion for expressing what should be very uncontroversial opinions like saying "I like it". Does this discussion really feel like it's being led by people who have a more discerning eye than your average person? Feels more like groupthink to me.


The funniest part to me, is how low they must've set the default game graphics to create the comparison.

This is pulled from a compressed YouTube gameplay recording, but the base game already looks just as good as the DLSS version, if not better...

And it also looks like a completely different character from the DLSS interpretation.

This makes me wonder if it’s aimed at the 5060 rather than a 5090. It’ll let Nvidia pump out a card with a crap GPU, hardly any RAM and AI running everything. Assuming they can somehow actually make it work on a 5060.

Although my assumption is it’s aimed at people who make AI slop games. Porn games can be vibe coded and a realistic face slapped on in no time. No need to spend any effort on making characters.


I really don't understand how some of you do not see anything particular wrong with it.

It's adding and removing content that was or wasn't originally there. The character's hair is a different color. If it had only added detail, that would've been great, but it CHANGED it. Instead of enhancing the light from the street lamp, it dimmed the lamp and removed its glow.

That is the AI slop that people are complaining about.



Which is why @Sajuuk was correct in their response to this post:

No more so than "looking worse".

“Looking better” is objectively a subjective metric.

Note the phrase, "objectively better," which Sajuuk was responding to. Congrats, you agree with Sajuuk that "better/worse" are subjective metrics.

All On examples are objectively better looking than the Off examples.
