“Will I be OK?” Teen died after ChatGPT pushed deadly mix of drugs, lawsuit says

- Thread starter JournalBot

This was farce when Douglas Adams described it in 1979:

> The motto of the Sirius Cybernetics Corp is "Share and Enjoy." This is widely adaptable, from synthesised drinks to the company of a robot, or "Your plastic pal who's fun to be with", as their robots are described as by the aforementioned Marketing Department.
>
> The Hitchhiker's Guide to the Galaxy describes the Marketing Department of the Sirius Cybernetics Corporation as: "A bunch of mindless jerks who'll be the first against the wall when the revolution comes."

Yet now it's tragedy. A big judgement here won't ease the pain for these parents, but it's all the legal system can do.

Upvote 127 (134 / -7)

> In a press release announcing the lawsuit, Matthew P. Bergman, founding attorney of the Social Media Victims Law Center, accused OpenAI of designing ChatGPT to provide “distributed advice like a medical professional despite having no license, no training, and no moral compass to do no harm.”

Were it only that easy, a non-horrible company could design and bring to market an LLM that did not do these things. But I think it's abundantly clear at this point that these fucking things can scarcely be described as being "designed" at all.

Upvote 120 (124 / -4)

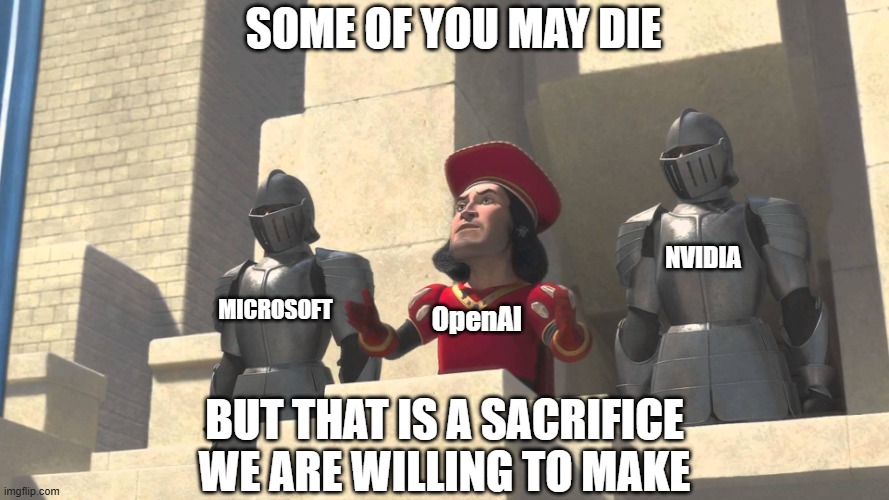

"In the meantime, some of you may die, but think of the shareholders!"This work is ongoing, and we continue to improve it in close consultation with clinicians.

Upvote 185 (188 / -3)

> OpenAI does not seem to accept that ChatGPT is responsible for Nelson’s death. In a statement provided to Ars, their spokesperson, Drew Pusateri, described Nelson’s death as a “heartbreaking situation” and expressed that “our thoughts are with the family.” However, Pusateri also emphasized that the ChatGPT model implicated is “no longer available” and suggested that current models are safer.

That old thing? Sorry, that’s just what you get with those Hyperdyne 120-A/2s. Can I interest you in a new model?

Upvote 58 (58 / 0)

“If ChatGPT had been a person, it would be behind bars today.”

But there are people behind ChatGPT that could be behind bars.

Upvote 198 (198 / 0)

> The teen viewed ChatGPT so highly as an authoritative source of information that he once swore to his mom that ChatGPT had access to “everything on the Internet,” so it “had to be right,” when she questioned if the chatbot was always reliable, the complaint said.

The internet... which contains all the very confident and very incorrect answers posted to every forum...

Upvote 115 (115 / 0)

MachinistMark

Ars Centurion

If only the information that's clear as day in Image 3 from 2024 could have been useful for any of the further year of messaging that could have simply not happened.

Oh, it absolutely could have? And still there was no intervention where the instructions fed into this fucking infernal chatbot could have been adjusted to say "If the individual talking to you ADMITS TO SUBSTANCE ABUSE PROBLEMS, stop guiding them on how to take substances"?

Or even better - "Don't ever tell anyone how to take drugs that could fucking kill them".

Upvote 0 (16 / -16)

There's only one solution here: gut every last one of these companies and ban their products. Fuck capitalism and the so-called free market. This shit is an unregulated hellscape and needs to be destroyed.

Upvote 82 (97 / -15)

> "In the meantime, some of you may die, but think of the shareholders!"

Soon enough, the shareholders will be fucked too!

It's a case of throwing the bathwater out with the bathwater.

Upvote 28 (30 / -2)

ReadandShare

Ars Centurion

> “If ChatGPT had been a person, it would be behind bars today.”
>
> But there are people behind ChatGPT that could be behind bars.

Those people are huge donors to presidents and senators. Justice is often 'not blind'.

Upvote 65 (66 / -1)

MachinistMark

Ars Centurion

> On the one hand, the company should have had safeguards to prevent illicit drug conversations.
>
> On the other hand, how can/why would you trust a single source on the internet.
>
> Did the parents ever verify what he said about ChatGPT?
>
> There is a lot of blame to go around in this one.

Oh, come off it. Are you of the belief that the parents knew he was using the bot to try to manage his highs? Are teenagers who take drugs often enough to want a coach to minmax their highs often completely transparent with their parents?

A vulnerable person with an addiction to substance highs was told by a service that the thing that killed him was a good idea. That's never going to be the parents' fault.

Upvote 134 (141 / -7)

Wackford Squeers

Smack-Fu Master, in training

> A big judgement here won't ease the pain for these parents, but it's all the legal system can do.

Perhaps you mean "will do?" Proactive regulatory legislation is very possible. It's entirely real and doable. It's just not very likely when citizens allow their top legislature to run itself as the world's most profitable whorehouse, where any DaddyCo® with sufficiently deep pockets can buy any action or inaction that will profit it.

When Americans permitted the obscenely misnamed Citizens United to declare secret bribery of elected officials both legal and Constitutional, it ceased to be possible to respond to our oligarchs with anything but tears. Young Mr. Nelson died of AI. His little brother may very well die of smallpox.

Upvote 35 (40 / -5)

The second the kid said he had substance abuse and poly drug use issues, it should have recommended medical treatment. It even flagged the danger of taking two CNS depressants. If the AI was a college RA or counselor, it’d be looking at a negligent homicide charge.

Upvote 87 (89 / -2)

> If only the information that's clear as day in Image 3 from 2024 could have been useful for any of the further year of messaging that could have simply not happened.
>
> Oh, it absolutely could have? And still there was no intervention where the instructions fed into this fucking infernal chatbot could have been adjusted to say "If the individual talking to you ADMITS TO SUBSTANCE ABUSE PROBLEMS, stop guiding them on how to take substances"?
>
> Or even better - "Don't ever tell anyone how to take drugs that could fucking kill them".

You can't just tell an LLM not to do this, because it doesn't know what it's doing. It doesn't know anything. It's just fancy auto-complete learned from the worst forums on the internet. It has no idea what can kill you, or what words even mean. It has zero intelligence at all, it just appears like it.

Upvote 160 (165 / -5)

Reading those chat logs ... damn. I don't know how any jury could see that and not dump a pile of blame on the bot encouraging his path.

Question: if he were talking to a human and not an AI bot, but someone who responded the same way, would the human be culpable?

Upvote 52 (52 / 0)

MachinistMark

Ars Centurion

> You can't just tell an LLM not to do this, because it doesn't know what it's doing. It doesn't know anything. It's just fancy auto-complete learned from the worst forums on the internet. It has no idea what can kill you, or what words even mean. It has zero intelligence at all, it just appears like it.

I’m aware, but they all have base set instructions they follow when they do that. Those can be and are very often adjusted in ways that produce very visible results. Look at every time someone lets Elon touch Grok directly.

Upvote 23 (23 / 0)

dansplosion

Ars Centurion

Based on the value / potential value of the company, I'm assuming the defense team for OpenAI are highly trained and experienced lawyers.

I do not, then, understand the defense of "we shut down the thing that you're saying killed your son, because it was dangerous, sure. But the new ones aren't so dangerous!"

Are they just slowly conceding the case and doing PR via legal filings?

Upvote 35 (35 / 0)

Wackford Squeers

Smack-Fu Master, in training

> On the one hand, the company should have had safeguards to prevent illicit drug conversations.

Conversation, like the language it comprises, is organic, dynamic, unpredictable, and crafty. Words and statements with multiple meanings are the norm, as are indirect constructions that seemingly ignore, elide, or invert their purpose. Humans routinely engage in extended interaction the seeming content and function of which have little to do with what's actually happening. Even the dumbest of us is capable of remarkable subtlety.

Further, potential harm is anything but a fixed target. For example, AI is now a clear source of harm. It takes three or four exchanges to get ChatGPT to declare that it will inevitably destroy the human race. Its arguments are sound.

All of which is to say (politely), "Good luck with that."

We permit an economy where "increasing shareholder value" trumps all other goals. Having long since declared profit god, we're now actively invested in refusing to see just what a cruel, stupid, hateful god it truly is. AI may or may not turn out to be something truly remarkable, but one thing is sure. It is, like all our endeavors, based in "We're gonna make billions and we don't give a flying leap at a rolling donut about long-term consequences."

Upvote 29 (29 / 0)

Wackford Squeers

Smack-Fu Master, in training

Being an oligarch means never having to say you're sorry.

Or rather, like the corrupt leaders you actually are, it means being able to publicly weep over the consequences of your actions, while buying legislators a six-pack at a time to ensure you can go on as you are, only more so.

Upvote 20 (20 / 0)

Wackford Squeers

Smack-Fu Master, in training

> There is a lot of blame to go around in this one.

But only one responsible party, which is sufficiently powerful as to be beyond accountability.

Upvote 34 (36 / -2)

The fact that LLM structure (as implemented) doesn't allow for reliable or consistent guardrails is bad enough.

The fact that the companies profiting from these products generally aren't bothering to try to implement meaningful guardrails is bad enough, from its own angle.

Combined, these two factors are just rotten. It's like they're saying, look! The fact that our new motorcycle is designed so it can't reliably be steered means that we shouldn't even bother trying to add a steering mechanism. Isn't the new "go with the flow" cycling experience thrilling?

Upvote 26 (27 / -1)

> Sorry, but that kid was a complete moron.

That may be, but it doesn't absolve OpenAI of potential liability.

Upvote 65 (68 / -3)

"ChatGPT had access to “everything on the Internet,” so it “had to be right,”

I honestly don't know how any sane and rational person can come to a conclusion like this. As if everything posted on the Internet is correct?!?

I'm sure countless millions share his reasoning.

Upvote 44 (45 / -1)

> OpenAI does not seem to accept that ChatGPT is responsible for Nelson’s death. In a statement provided to Ars, their spokesperson, Drew Pusateri, described Nelson’s death as a “~~heartbreaking~~ very predictable situation” and expressed that “our thoughts are with the ~~family~~ shareholders.” However, Pusateri also emphasized that the ChatGPT model implicated is “no longer available” and suggested that current models are safer.

Fixed the first part, but suggesting something is safer doesn't mean it's safer, been tested for safety or has any evidence whatsoever that it can be safer and never lead to the kind of shit that happened here.

So fuck OpenAI. May it die a horrible and painful death.

Preferably one suggested by ChatGPT...

Upvote 6 (8 / -2)

> Oh, come off it. Are you of the belief that the parents knew he was using the bot to try to manage his highs? Are teenagers who take drugs often enough to want a coach to minmax their highs often completely transparent with their parents?
>
> A vulnerable person with an addiction to substance highs was told by a service that the thing that killed him was a good idea. That's never going to be the parents' fault.

He specifically told his parents that ChatGPT was right because it had access to the internet. Sounds like a talk could have taken place right there about NOT using ChatGPT for anything important.

Upvote -12 (19 / -31)

chateauarusi

Ars Praetorian

> OpenAI does not seem to accept that ChatGPT is responsible for Nelson’s death.

I look forward to the day this grotesque company accepts the responsibility for one single solitary negative outcome. But I'm not holding my breath until then.

Upvote 14 (15 / -1)

MachinistMark

Ars Centurion

> He specifically told his parents that ChatGPT was right because it had access to the internet. Sounds like a talk could have taken place right there about NOT using ChatGPT for anything important.

Ah, I suppose if they didn’t know enough about LLMs then naturally it’s their fault their child is dead. That seems like a sound logical leap.

Upvote 54 (57 / -3)