AI coding agents from OpenAI, Anthropic, and Google can now work on software projects for hours at a time, writing complete apps, running tests, and fixing bugs with human supervision. But these tools are not magic and can complicate rather than simplify a software project. Understanding how they work under the hood can help developers know when (and if) to use them, while avoiding common pitfalls.

We’ll start with the basics: At the core of every AI coding agent is a technology called a large language model (LLM), which is a type of neural network trained on vast amounts of text data, including lots of programming code. It’s a pattern-matching machine that uses a prompt to “extract” compressed statistical representations of data it saw during training and provide a plausible continuation of that pattern as an output. In this extraction, an LLM can interpolate across domains and concepts, resulting in some useful logical inferences when done well and confabulation errors when done poorly.

These base models are then further refined through techniques like fine-tuning on curated examples and reinforcement learning from human feedback (RLHF), which shape the model to follow instructions, use tools, and produce more useful outputs.

Over the past few years, AI researchers have been probing LLMs’ deficiencies and finding ways to work around them. One recent innovation was the simulated reasoning model, which generates context (extending the prompt) in the form of reasoning-style text that can help an LLM home in on a more accurate output. Another innovation was an application called an “agent” that links several LLMs together to perform tasks simultaneously and evaluate outputs.

How coding agents are structured

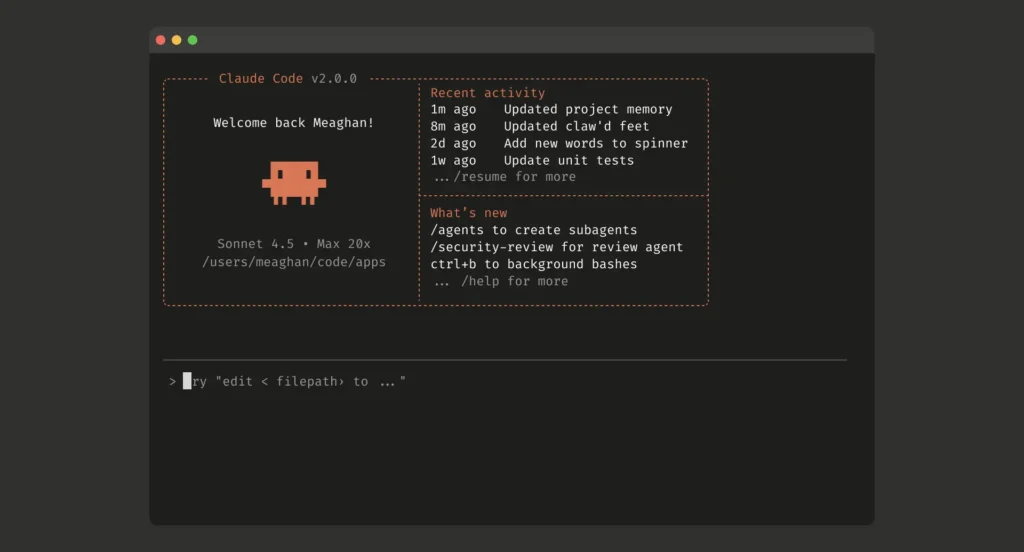

In that sense, each AI coding agent is a program wrapper that works with multiple LLMs. There is typically a “supervising” LLM that interprets tasks (prompts) from the human user and then assigns those tasks to parallel LLMs that can use software tools to execute the instructions. The supervising agent can interrupt tasks below it and evaluate the subtask results to see how a project is going. Anthropic’s engineering documentation describes this pattern as “gather context, take action, verify work, repeat.”
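To make the supervisor-and-workers pattern concrete, here is a minimal sketch of the "gather context, take action, verify work, repeat" loop. It is an illustration under stated assumptions, not any vendor's implementation: `plan`, `fake_worker`, and `verify` are hypothetical stand-ins for LLM calls and tool runs, and the semicolon-splitting "planner" exists only so the example can run without a model.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass
class SubtaskResult:
    task: str
    output: str
    verified: bool


def plan(prompt: str) -> list[str]:
    """Hypothetical stand-in for the supervising LLM splitting a prompt into subtasks."""
    return [part.strip() for part in prompt.split(";") if part.strip()]


def fake_worker(task: str) -> str:
    """Hypothetical stand-in for a worker LLM that uses tools to carry out one subtask."""
    return f"done: {task}"


def verify(task: str, output: str) -> bool:
    """Hypothetical stand-in for the supervisor's check (for example, running tests)."""
    return output == f"done: {task}"


def run_agent(prompt: str, max_rounds: int = 3) -> list[SubtaskResult]:
    pending = plan(prompt)  # gather context
    finished: list[SubtaskResult] = []
    for _ in range(max_rounds):  # repeat, with a cap so the loop always ends
        if not pending:
            break
        with ThreadPoolExecutor() as pool:
            # take action: workers run in parallel; map() preserves input order
            outputs = list(pool.map(fake_worker, pending))
        retry = []
        for task, output in zip(pending, outputs):
            if verify(task, output):  # verify work
                finished.append(SubtaskResult(task, output, True))
            else:
                retry.append(task)  # failed subtasks go around again
        pending = retry
    return finished


if __name__ == "__main__":
    for result in run_agent("write parser; add tests"):
        print(result)
```

The cap on rounds is a design choice for the sketch: without one, a subtask that never passes verification would keep the loop running indefinitely.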

So it will be with LLMs. LLMs right now are maybe the conceptual equivalent of Windows 3.1 - a GUI running on top of DOS rather than a standalone OS.

The 'trick' to coding more than individual functions with LLMs right now is you can't just wing it. You can't just dive in and start coding. You absolutely have to think through your architecture, invariants, etc., first - at least well enough to do a first dev version. You have to go Intent --> Mission Engineering/Analysis --> Stakeholder Needs & Requirements --> Logical Architecture --> System Requirements --> Test Plans. Then you can start coding with an LLM. And those system requirements have to be well written...which, historically, is a massive weakness of many people. You can iterate afterwards, but you have to start from a strong foundation.

A requirement is a poor one if it can't be unambiguously verified yes/no, with no room for misinterpretation, using one or more verification methods (inspection, demonstration, analysis, test, similarity, sampling); if it covers more than one operational, functional, or design characteristic/constraint; or if it isn't consistent with the others, feasible, traceable, and unique. Generally speaking, most people aren't trained in writing good requirements. Requirements management is one of our bread-and-butter disciplines in systems engineering, along with verification and validation, but most people haven't needed to internalize it like we do.
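Some of those criteria can be checked mechanically before a requirement ever reaches an LLM. The following is a rough sketch, not a standard tool: the `Requirement` fields, the `VAGUE_WORDS` list, and the "count the shalls" heuristic are all my own assumptions for illustration, and a real requirements review still needs a human.

```python
from dataclasses import dataclass, field

# The six verification methods named above.
VERIFICATION_METHODS = {
    "inspection", "demonstration", "analysis", "test", "similarity", "sampling",
}
# Hypothetical, deliberately short list of words that usually signal ambiguity.
VAGUE_WORDS = {"fast", "robust", "easy", "user-friendly", "appropriate", "etc"}


@dataclass
class Requirement:
    req_id: str
    text: str
    methods: set[str] = field(default_factory=set)
    traces_to: str = ""  # ID of the parent need or requirement, if any


def lint(req: Requirement) -> list[str]:
    """Return a list of problems found; an empty list means no heuristic fired."""
    problems = []
    if not req.methods:
        problems.append("no verification method")
    unknown = req.methods - VERIFICATION_METHODS
    if unknown:
        problems.append(f"unknown verification method: {sorted(unknown)}")
    words = {w.strip(".,;:()").lower() for w in req.text.split()}
    vague = sorted(words & VAGUE_WORDS)
    if vague:
        problems.append(f"vague wording: {vague}")
    # Crude proxy for "one and only one characteristic per requirement".
    if req.text.lower().count(" shall ") > 1:
        problems.append("more than one 'shall'")
    if not req.traces_to:
        problems.append("not traceable")
    return problems


if __name__ == "__main__":
    bad = Requirement("SYS-002", "The system shall be fast and shall be robust.")
    for problem in lint(bad):
        print(f"{bad.req_id}: {problem}")
```

Consistency, feasibility, and uniqueness across the whole requirement set are left out on purpose; they need the full set and real judgment, not a per-line check.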

Context limitations are significant given the stateless nature of current LLMs. But as others have noted, careful management of handoffs between threads, using a System_Intent.md and other artifacts to provide the constraints/guardrails within threads, careful use of versioning, and other processes will greatly lessen the pitfalls.
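One way to picture a handoff between threads is a small helper that rebuilds the opening prompt from the artifacts every time. This is only a sketch under my own assumptions: `build_handoff`, the section headings, and the character budget are hypothetical, and the only name taken from the workflow described above is the System_Intent.md file.

```python
from pathlib import Path


def build_handoff(artifact_dir: Path, thread_summary: str, max_chars: int = 8000) -> str:
    """Assemble the opening prompt for a new thread from stored artifacts.

    The model keeps no state between threads, so the constraints are re-read
    from disk and placed ahead of the summary of the previous thread.
    """
    intent = (artifact_dir / "System_Intent.md").read_text(encoding="utf-8")
    sections = [
        "## System intent (constraints; do not violate)\n" + intent.strip(),
        "## Summary of previous thread\n" + thread_summary.strip(),
    ]
    prompt = "\n\n".join(sections)
    if len(prompt) > max_chars:
        # Fail loudly rather than silently truncating the constraints.
        raise ValueError(f"handoff is {len(prompt)} chars, budget is {max_chars}")
    return prompt
```

Raising an error when the budget is exceeded, instead of trimming, is deliberate here: a quietly shortened intent file is exactly the kind of pitfall the handoff is meant to prevent.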

However, integrating all of that into DevSecOps will take a while longer - like I said, I view the current state as somewhat analogous to Win 3.1. LLMs seem to be optimized for being pretty, flashy, and looking useful rather than being designed from the ground up to say 'no' and prevent mistakes/errors. To be useful as infrastructure, we need AIs that are stateful instead of stateless and that can take user inputs and say "I can't do that - it violates X, Y, and Z" when you're trying to do something that raises genuine safety and/or security concerns. More like the industrial systems that we entrust to fly aircraft, manage power grids, etc., although that analogy has its flaws, too.