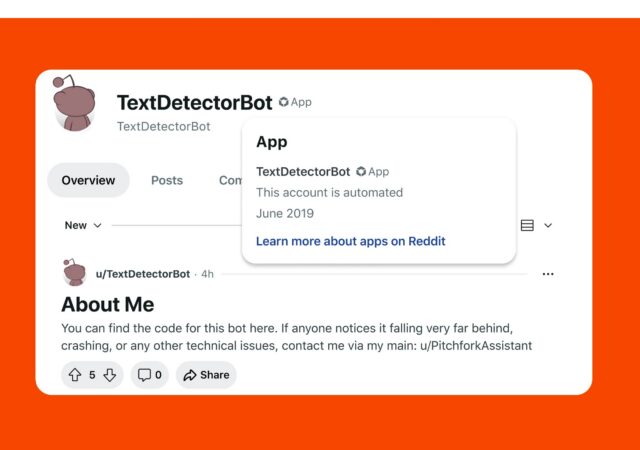

Reddit will require accounts that exhibit “automated or otherwise fishy behavior” to verify that a human runs them, Reddit CEO Steve Huffman said in a Reddit post today. The verification process is meant to keep unwanted bots from flooding Reddit at a time when AI bots are poised to take over the Internet.

“As AI becomes a bigger part of the Internet, we want to make sure that when you’re on Reddit, you know when you’re talking to a person and when you’re not,” Huffman said.

Human verification will only occur if Reddit suspects that an account is a bot. This is “rare” and won’t apply to “most users,” Huffman emphasized. If the account cannot prove that it’s human, it “may be restricted,” he said.

Reddit will check whether an account is run by a human by using third-party tools that Huffman said won’t expose users’ real identities, Reddit usernames, or Reddit activity. Among the methods Reddit is exploring are passkeys, which Huffman said are a great starting point but don’t provide any “proof of individuality or anything other than ‘a human probably did something.’”

Reddit is also looking into third-party biometric services, like World ID, which uses iris-scanning tech.

“I think the Internet needs verification solutions like this, where your account information, usage data, and identity never mix,” Huffman said.

A last resort may be third-party government ID services, which Reddit is already required to use in some geographies, like the UK. Huffman said this is “the least secure, least private, and least preferred” method for human verification on Reddit.

“When we are forced to do this, we design the integrations so that we never actually see your ID information, so your Reddit data cannot be tied to you,” he added.