We review a lot of hardware at Ars, and part of that review process involves running benchmark apps. The exact apps we use may change over time and depending on what we’re trying to measure, but the purpose is the same: to compare the relative performance of two or more things and to make sure that products perform as well in real life as they do on paper.

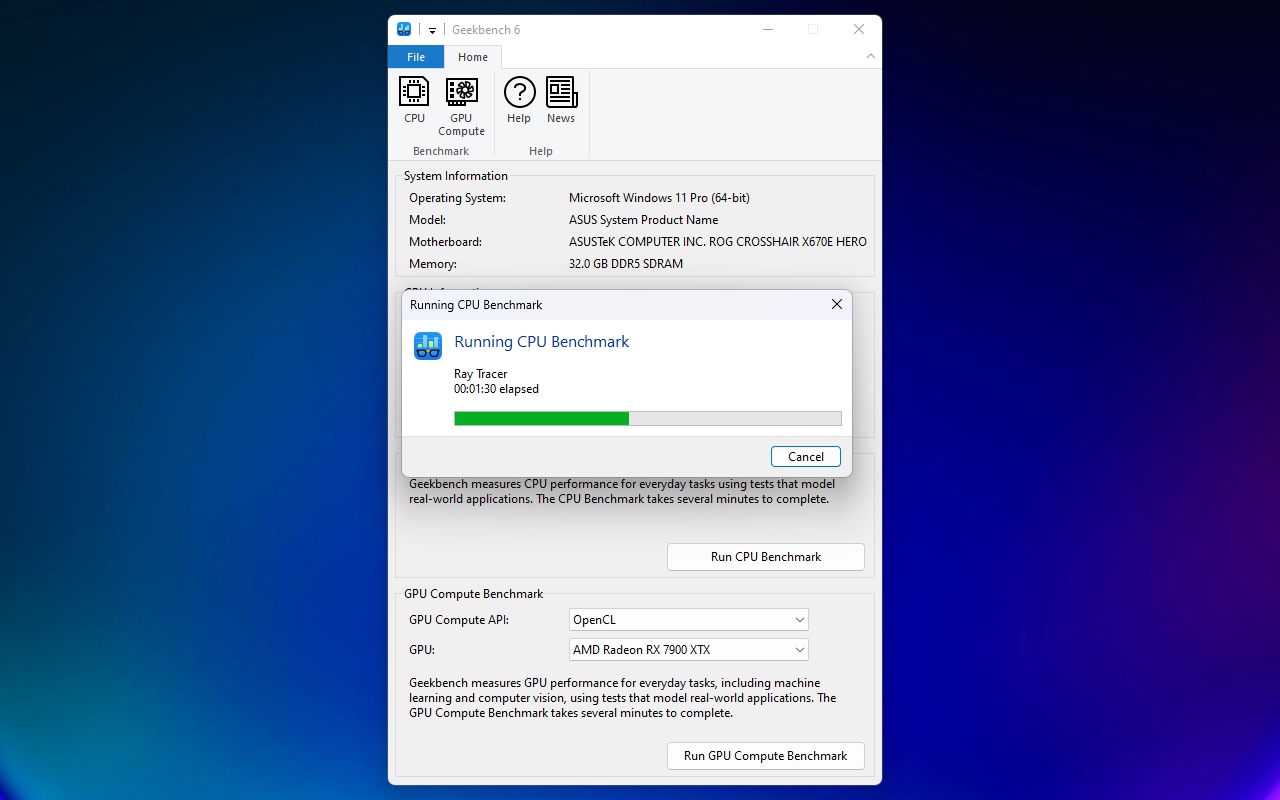

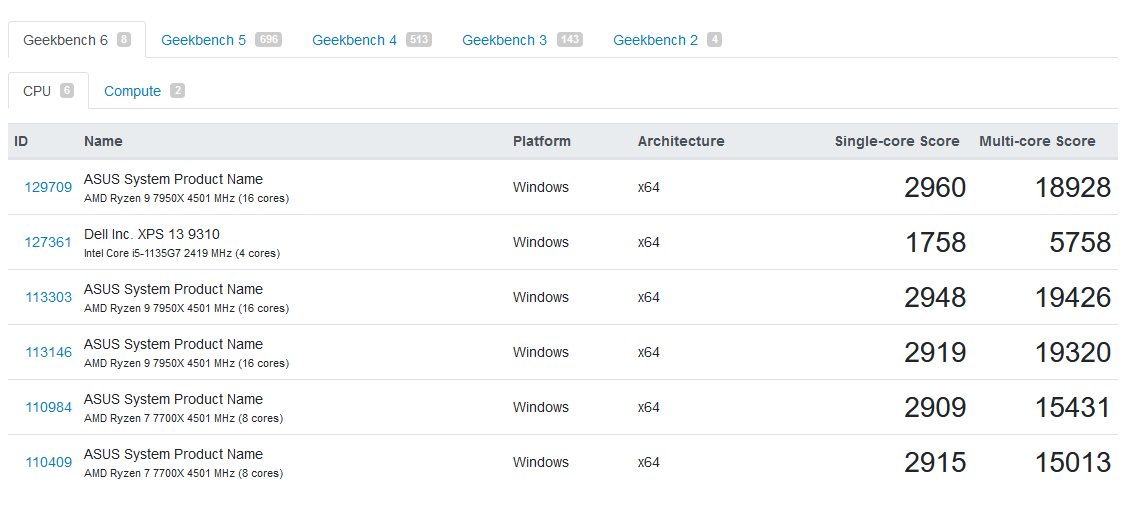

One app that has been a consistent part of our test suite for over a decade is Geekbench, a CPU and GPU compute benchmark that is releasing its sixth major version today. Partly because it’s small, free, and easy to run; partly because developer Primate Labs maintains a gigantic searchable database spanning millions of test runs across millions of devices; and partly because it will run on just about anything under the sun, Geekbench has become one of the Internet’s most-used (and most-argued-about) benchmarking tools.

“I’m really glad that people seem to have latched onto it,” Primate Labs founder and Geekbench creator John Poole told Ars of Geekbench’s popularity. “I know Gordon Ung at PCWorld basically calls Geekbench the official benchmark of Twitter arguments, which is the fallout from that.”

Cross-platform right from the start

Cross-platform compatibility is part of Geekbench’s appeal, and it has been baked into the benchmark since its earliest versions. Geekbench began at the height of the PowerPC Mac era, when Apple’s hardware was exotic and niche, and apps that ran on Mac OS X were relatively rare.

“I just switched over to the Mac back in about 2002,” Poole told Ars. “So I was getting used to that ecosystem. And then the [Power Mac] G5 came out and I thought, oh, this looks really cool. I went out, bought one of the new G5s, and it felt slower than my previous Mac. And I thought, well, this is really strange; what’s going on. … So, you know, I grabbed what [benchmarks] I could download and ran them and got really confused, because what the benchmarks were saying wasn’t jiving with my experience.
