Kirby, meet Stanley

(This article is the first of a three-part series. Click here for part 2 and here for part 3)

Until recently, the self-driving car was the stuff of Hollywood. Movies from The Love Bug to Minority Report have depicted cars that let passengers just hop in, tell the car where they want to go, and then relax and enjoy the ride. But here in the real world, cars need human drivers.

Then in 2005, a modified Volkswagen, dubbed Stanley, turned science fiction into reality. This technological marvel, developed by a team at Stanford, navigated a course of more than 100 miles of desert terrain completely autonomously. There were no human beings inside the vehicle, and no one was relaying instructions to the vehicle from outside. Stanley finished at the head of a pack of 23 vehicles in a race called the Grand Challenge, which was sponsored by DARPA, the military research agency that also provided early funding for the network that became the Internet.

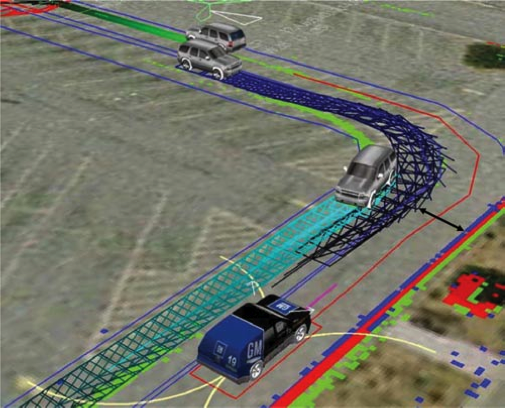

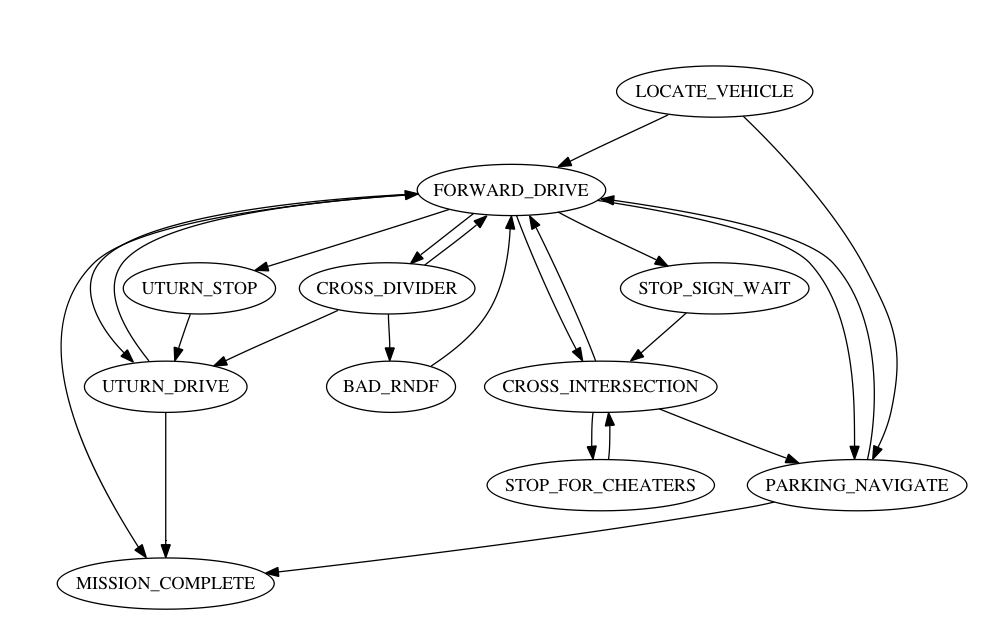

The robotics community outdid itself once again at DARPA's 2007 Urban Challenge. This contest featured all the challenges of the original Grand Challenge, along with a few new ones: the vehicles navigated a simulated urban environment and were required to interact with human-driven vehicles while obeying all traffic laws. Six teams successfully completed the course, with Boss, a car developed at Carnegie Mellon, claiming the prize.

The Urban Challenge participants line up at the starting gate

There's still a lot of work to be done before these vehicles will be mature enough to be let loose on our streets. Real streets contain significantly more obstacles and complexities than the simplified environment found in the 2007 contest, and the most successful vehicles in the Urban Challenge were not designed to cope with pedestrians, bicyclists, traffic lights, or icy roads. And even when the technology is ready for prime time, a variety of economic and political obstacles could delay widespread adoption of self-driving automotive technologies.

Nonetheless, the success of the Challenge series has made it clear that the question is "when," not "if," self-driving technology will make its way into car showrooms and onto our streets. It's impossible to know exactly when such cars will arrive, but it's a good bet that a college student entering the workforce today will have a car that can drive him to work before he retires.
