Back in May, Google augmented its Gemini AI model with SynthID, a toolkit that embeds watermarks in AI-generated content. Google says those watermarks are “imperceptible to humans” but can be easily and reliably detected by an algorithm. Today, Google took that SynthID system open source, offering the same basic watermarking toolkit for free to developers and businesses.

The move gives the entire AI industry an easy, seemingly robust way to silently mark content as artificially generated, which could be useful for detecting deepfakes and other damaging AI content before it goes out in the wild. But there are still some important limitations that may prevent AI watermarking from becoming a de facto standard across the AI industry any time soon.

Spin the wheel of tokens

Google uses a version of SynthID to watermark audio, video, and images generated by its multimodal AI systems, with differing techniques that are explained briefly in this video. But in a new paper published in Nature, Google researchers go into detail on how the SynthID process embeds an unseen watermark in the text-based output of its Gemini model.

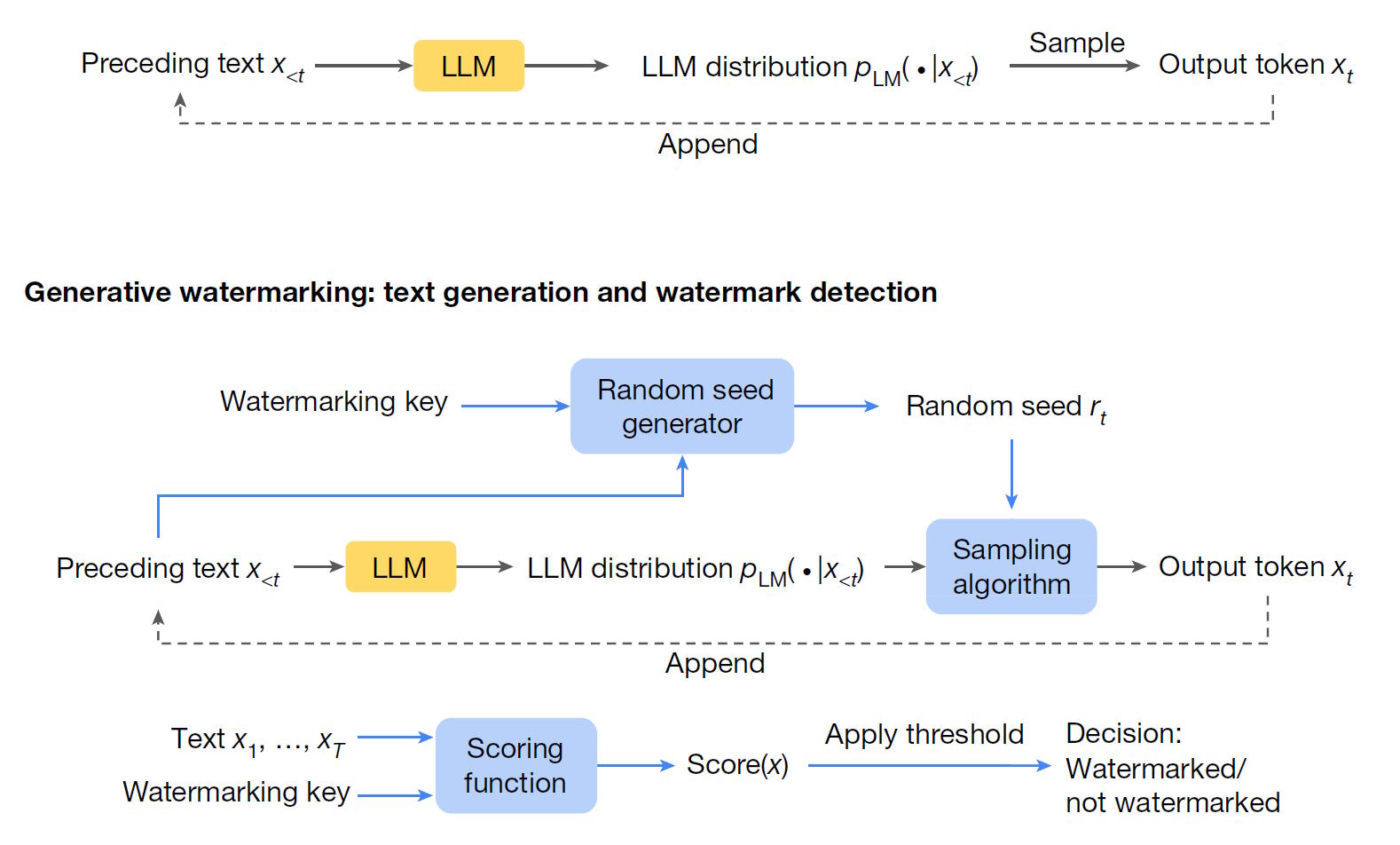

The core of the text watermarking process is a sampling algorithm inserted into an LLM’s usual token-generation loop (the loop picks the next word in a sequence based on the model’s complex set of weighted links to the words that came before it). Using a random seed generated from a key provided by Google, that sampling algorithm increases the correlational likelihood that certain tokens will be chosen in the generative process. A scoring function can then measure that average correlation across any text to determine the likelihood that the text was generated by the watermarked LLM (a threshold value can be used to give a binary yes/no answer).

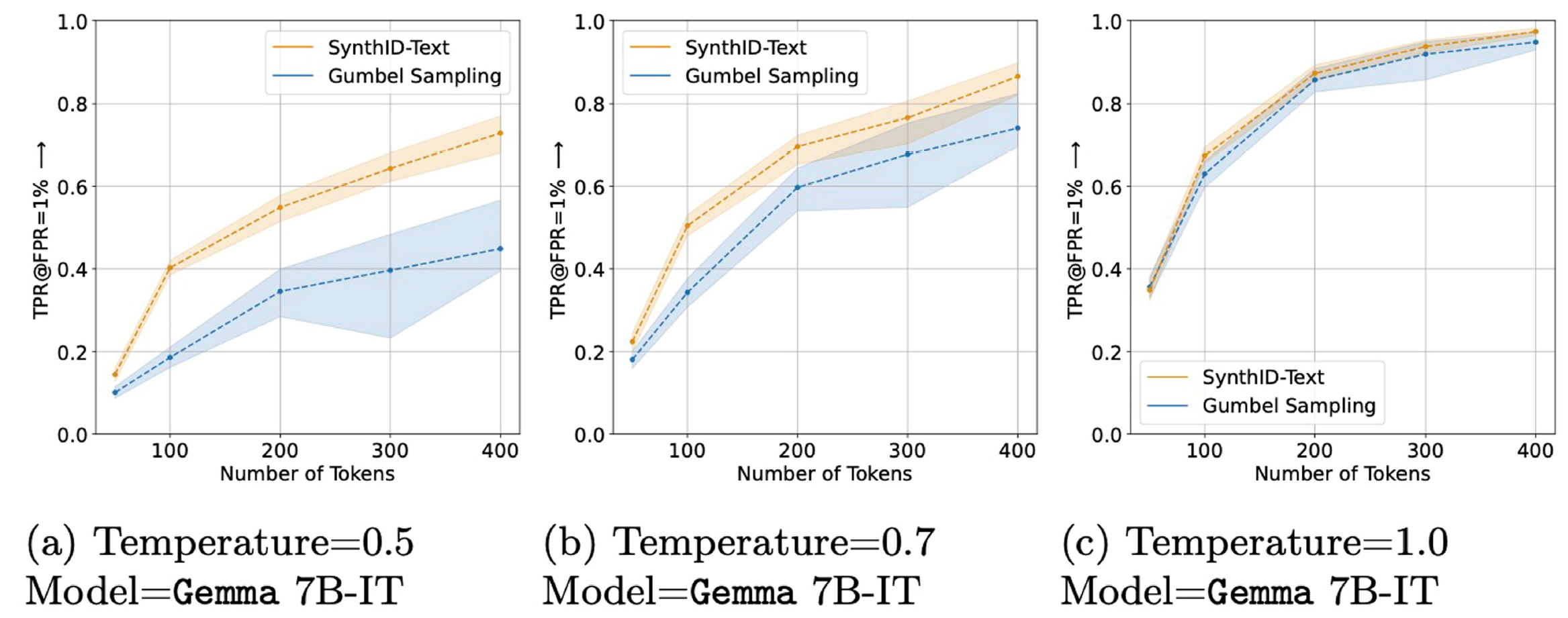
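To make the sample-then-score idea concrete, here is a minimal Python sketch. It is a simplified stand-in, not Google's published algorithm: the toy vocabulary, the uniform "model," the SHA-256 keyed score, the four-candidate selection rule, the 0.6 threshold, and every function name are assumptions made for illustration. The generator draws a few candidate tokens and keeps the one whose keyed pseudo-random score is highest, and the detector averages those scores over a text.

```python
import hashlib
import random
from typing import List, Optional

VOCAB = [f"tok{i}" for i in range(50)]  # hypothetical toy vocabulary
START = "<s>"                           # hypothetical start-of-text marker


def g_value(secret: str, prev_token: str, token: str) -> float:
    """Keyed pseudo-random score between 0 and 1 for a token in its context."""
    digest = hashlib.sha256(f"{secret}|{prev_token}|{token}".encode()).digest()
    return int.from_bytes(digest[:8], "big") / 2**64


def generate(n_tokens: int, rng: random.Random,
             secret: Optional[str] = None, candidates: int = 4) -> List[str]:
    """Toy generation loop; a uniform draw stands in for the LLM's distribution."""
    tokens: List[str] = []
    prev = START
    for _ in range(n_tokens):
        draws = [rng.choice(VOCAB) for _ in range(candidates)]
        if secret is None:
            nxt = draws[0]  # ordinary, unwatermarked sampling
        else:
            # Watermarked sampling: prefer the candidate the key scores highest.
            nxt = max(draws, key=lambda tok: g_value(secret, prev, tok))
        tokens.append(nxt)
        prev = nxt
    return tokens


def mean_score(tokens: List[str], secret: str) -> float:
    """Average keyed score; hovers near 0.5 for text not generated with this key."""
    if not tokens:
        raise ValueError("need at least one token to score")
    total, prev = 0.0, START
    for tok in tokens:
        total += g_value(secret, prev, tok)
        prev = tok
    return total / len(tokens)


def is_watermarked(tokens: List[str], secret: str, threshold: float = 0.6) -> bool:
    """Binary yes/no answer from an assumed threshold on the average score."""
    return mean_score(tokens, secret) > threshold


rng = random.Random(0)
marked = generate(400, rng, secret="demo-key")
plain = generate(400, rng)
print(mean_score(marked, "demo-key"), mean_score(plain, "demo-key"))
```

In this toy setup, only someone holding the key can recompute the per-token scores, which is why scoring the watermarked text with a different key looks the same as scoring unwatermarked text.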

This probabilistic scoring system makes SynthID’s text-based watermarks somewhat resistant to light editing or cropping of text, since the elevated share of watermark-favored tokens should persist across the untouched portion of the text. While watermarks can be detected in responses as short as three sentences, the process “works best with longer texts,” Google acknowledges in the paper, since having more words to score provides “more statistical certainty when making a decision.”
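A rough back-of-the-envelope calculation shows why length matters. The sketch below is not the paper's scoring function. It assumes each token gets an independent score that is uniform between 0 and 1 in unwatermarked text (mean 0.5, variance 1/12), and that watermarking lifts the average to a hypothetical 0.6. Under those assumptions, the gap between the observed average and 0.5, measured in standard deviations, grows with the square root of the token count.

```python
import math


def z_score(mean_g: float, n_tokens: int) -> float:
    """Standard deviations separating an observed average score from the 0.5
    expected of unwatermarked text (assumes independent uniform scores)."""
    return (mean_g - 0.5) * math.sqrt(12 * n_tokens)


# Same hypothetical per-token lift (0.6), increasingly long texts.
for n in (25, 100, 400):
    print(n, round(z_score(0.6, n), 2))

# Editing that replaces half the tokens would roughly halve the lift (0.55).
print(400, round(z_score(0.55, 400), 2))
```

With these assumed numbers, a 25-token text sits under two standard deviations from the unwatermarked baseline, while a 400-token text sits near seven. A 400-token text with half its tokens rewritten still scores about as strongly as an untouched 100-token one, which is the intuition behind both the editing resistance and the preference for longer texts.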
