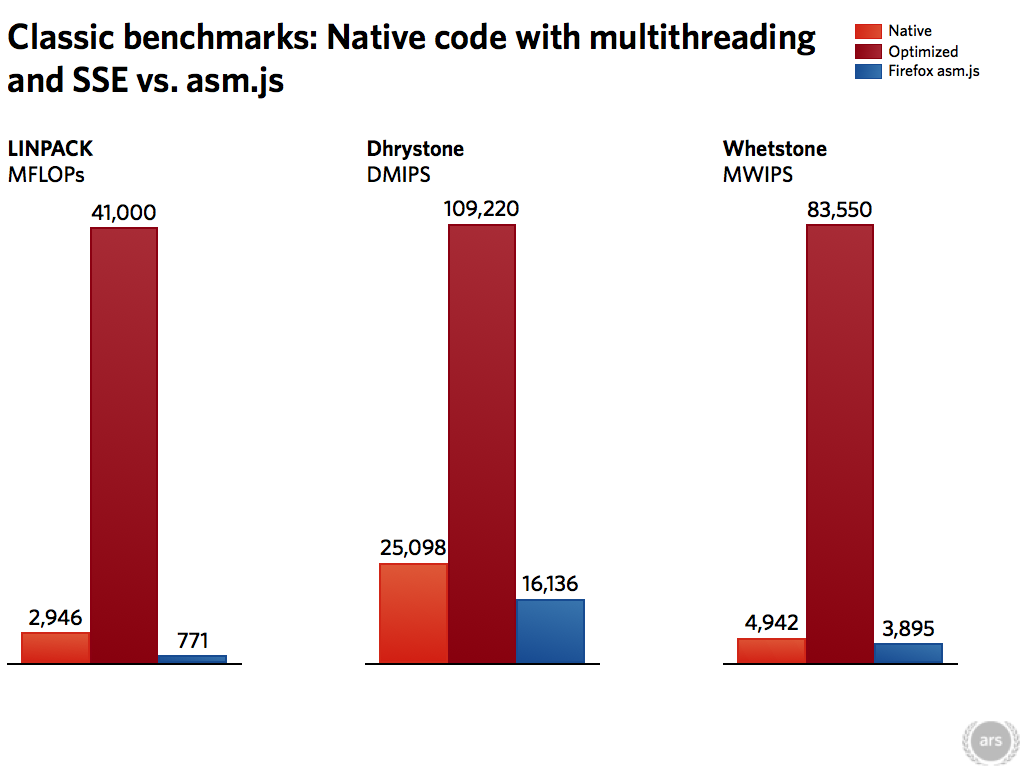

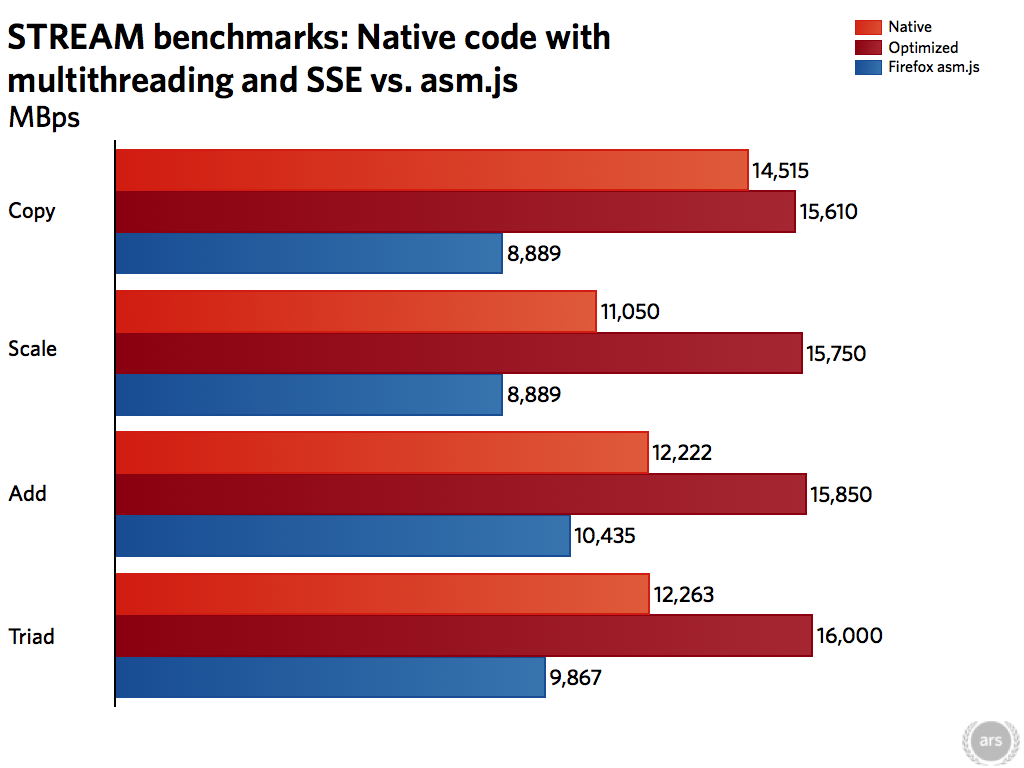

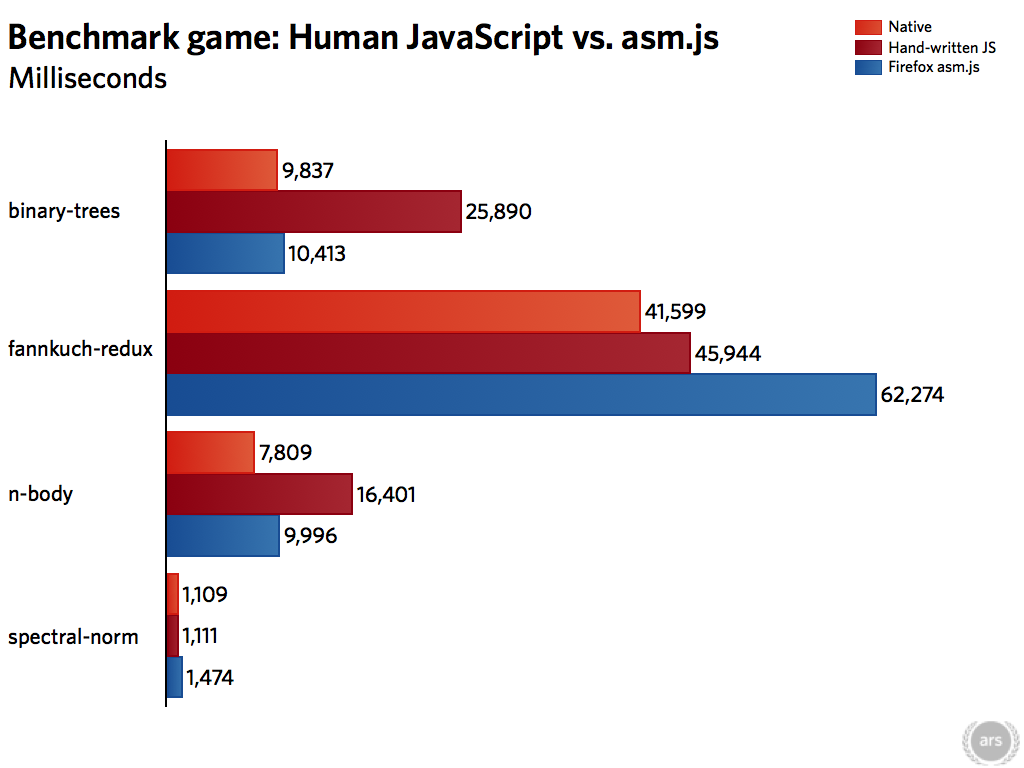

In a bid to make JavaScript run ever faster, Mozilla has developed asm.js. It's a limited, stripped-down subset of JavaScript that the company claims will offer performance within a factor of two of native code, which would be good enough to use the browser for almost any application. Can JavaScript really start to rival native code performance? We've been taking a closer look.

The quest for faster JavaScript

JavaScript performance became a big deal in 2008. Prior to this, the JavaScript engines found in common Web browsers tended to be pretty slow. They were good enough for the basic scripting that the Web used at the time, but they were largely inadequate for those wanting to use the Web as a rich application platform.

In 2008, however, Google released Chrome with its V8 JavaScript engine. Around the same time, Apple brought out Safari 4 with its Nitro (née Squirrelfish Extreme) engine. These engines brought something new to the world of JavaScript: high performance achieved through just-in-time (JIT) compilation. V8 and Nitro would convert JavaScript into pieces of executable code that the CPU could run directly, improving performance by a factor of three or more.

Mozilla and Microsoft followed suit. Mozilla introduced TraceMonkey in Firefox 3.5 in 2009 and Microsoft released Chakra in 2011.

JIT compilation provides great scope for accelerating JavaScript programs, but it has its limits. The problem is JavaScript itself: the behavior of the language makes it hard to optimize. In languages such as C and C++, the behavior of a program is baked in when the program is compiled. Languages like Java and C# add a little more flexibility, but most of the time they share that same characteristic: the functions and data that make up a particular class are fixed when the program is compiled.
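To make the contrast concrete, here is a minimal sketch of the kind of runtime flexibility a JavaScript engine has to cope with. The `Point` constructor and the `sum` function are invented for this illustration and do not come from Mozilla or from any particular engine; the point is only that nothing about an object's layout or a function's inputs is pinned down before the code runs.

```javascript
// Hypothetical example: Point and sum are made-up names for illustration.
function Point(x, y) {
  this.x = x;
  this.y = y;
}

function sum(p) {
  // The engine cannot know in advance whether this `+` is numeric
  // addition or string concatenation; that depends on what `p` holds.
  return p.x + p.y;
}

const a = new Point(1, 2);
const numeric = sum(a); // both fields are numbers here

// Objects can gain and lose properties long after they are constructed.
a.z = 10;
delete a.y;
const afterDelete = sum(a); // a.y is now undefined

// The same function happily accepts an unrelated object with other types.
const text = sum({ x: "1", y: 2 });

// Shared behavior can also be swapped out while the program is running.
Point.prototype.describe = function () { return "point"; };
const before = a.describe();
Point.prototype.describe = function () { return "changed"; };
const after = a.describe();
```

In a C++ or Java program, the equivalent of `Point` would have a fixed set of fields and methods that the compiler could rely on. Here, a JIT compiler can only guess at the common case and must be ready to fall back when that guess turns out to be wrong.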
