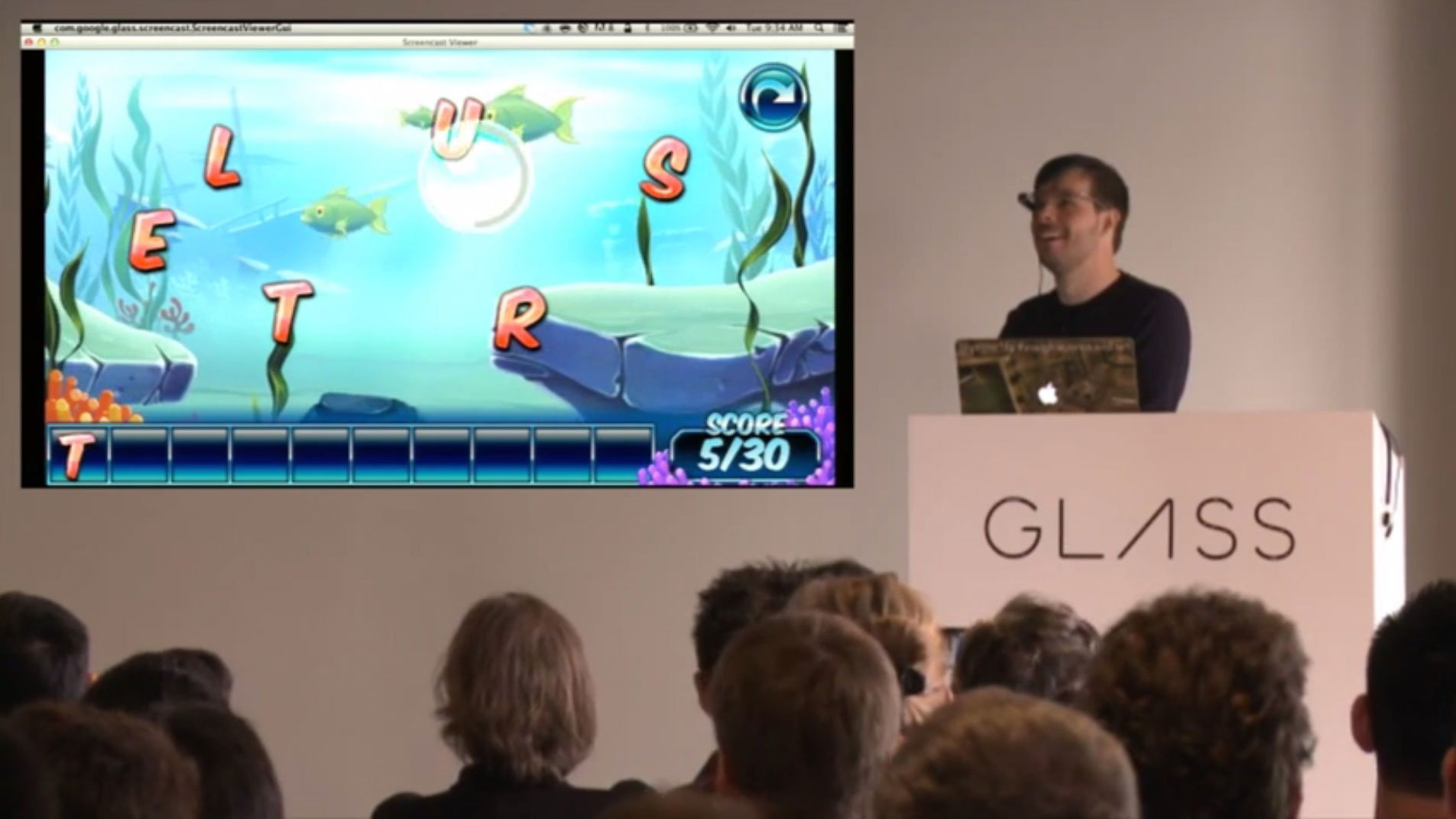

Today Google launched the Glass Development Kit (GDK) “Sneak Preview,” which will finally allow developers to make real, native apps for Google Glass. Until now, Glass apps were limited to what the Mirror API allowed; with the GDK, developers have full access to the hardware.

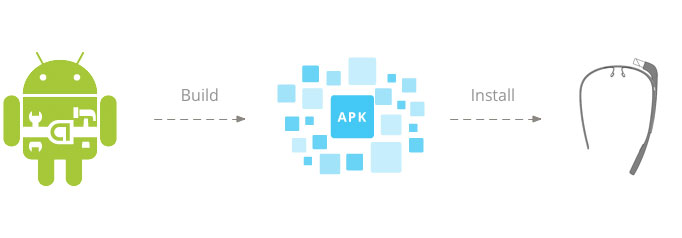

Google Glass runs a heavily skinned version of Android 4.0.4, so Glass development is very similar to Android development. The GDK is downloaded through the Android SDK Manager, and Glass is just another target device in the Eclipse plugin. Developers have access to the Glass voice recognition within their app as an intent, but it looks like only Google can add “OK, Glass” commands to the main voice menu. Apps can be totally offline and can do all their processing on Glass. They can also support background events and have full access to the camera and other hardware.

Update: Documentation for the GDK has come out, and it turns out any developer can add a voice trigger to the “OK, Glass” menu. It’s as easy as adding a few XML tags to the strings file. Third-party apps are just as accessible as stock apps.
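For the curious, here is a rough sketch of what that XML looks like, based on our reading of the GDK documentation. The phrase “translate this,” the file name `voice_trigger.xml`, and the activity name `.TranslateActivity` are placeholders we made up for illustration; the action and meta-data names are the ones the GDK docs describe, but treat the details as subject to change while the GDK is in preview.

The spoken phrase goes in `res/values/strings.xml`:

```xml
<resources>
    <!-- Hypothetical phrase the user says after "OK, Glass" -->
    <string name="glass_voice_trigger">translate this</string>
</resources>
```

A small trigger resource, here `res/xml/voice_trigger.xml`, points at that string:

```xml
<?xml version="1.0" encoding="utf-8"?>
<trigger keyword="@string/glass_voice_trigger" />
```

Finally, the activity that should launch is registered for the voice trigger in `AndroidManifest.xml`:

```xml
<!-- .TranslateActivity is a placeholder for your own activity -->
<activity android:name=".TranslateActivity">
    <intent-filter>
        <action android:name="com.google.android.glass.action.VOICE_TRIGGER" />
    </intent-filter>
    <meta-data
        android:name="com.google.android.glass.VoiceTrigger"
        android:resource="@xml/voice_trigger" />
</activity>
```

So it is slightly more than the strings file alone, but it is all declarative XML with no extra code required to appear in the menu.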

Google showed off a few of the first native Glass apps, and one of the coolest among them was Word Lens, a real-time, augmented-reality translation app. Word Lens works much like it does on the iPhone: foreign-language text targeted by the camera is translated on top of the video feed in real time. This is neat on a smartphone, but on a device like Glass it becomes much more powerful. The user just looks at some text and says “OK, Glass, translate this,” and the translation is drawn over the original text in the Glass video feed. Word Lens uses the accelerometers to keep the virtual text aligned, all while working completely offline.
